Building Highly Available MariaDB with DRBD and Corosync/Pacemaker

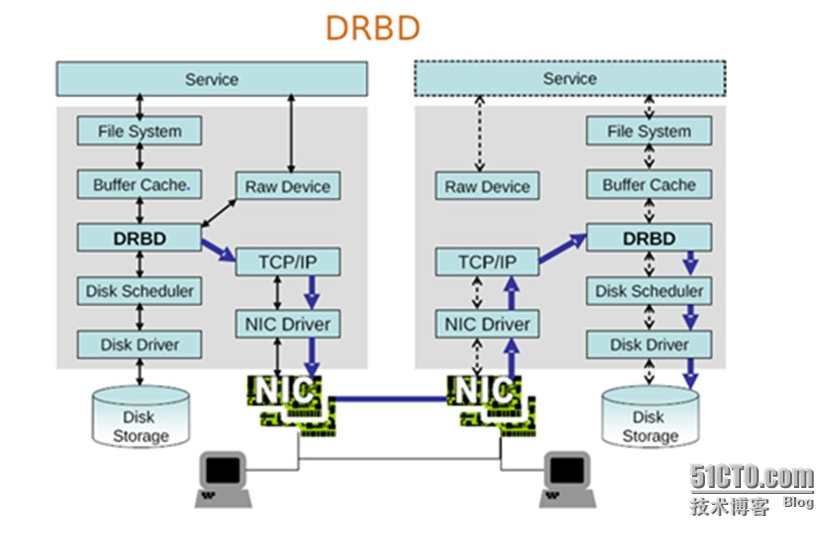

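Introduction to DRBD:

To keep data consistent when a service fails over between high-availability nodes, the nodes have to use shared storage. There are generally only two choices: NAS and SAN. NAS shares storage at the file-system level. Its performance is poor, access is restricted, and it is inconvenient in many ways. SAN provides block-level shared storage but is too expensive. When the budget is tight, DRBD is worth considering.

DRBD is a cross-host block-device mirroring system with one primary and one secondary: of the two hosts, only one can write, and the secondary host only accepts the data sent to it by the primary. DRBD works inside the kernel. It inserts a fully transparent copy step between the kernel's buffer cache and the disk scheduler, and the copied data is sent over TCP/IP to the peer node that mirrors it. This provides data redundancy. Its reliability needs careful thought, however. It occasionally misbehaves and loses data through split brain, a state in which both hosts can write, which corrupts the file system and loses data. If you use DRBD, therefore, use it inside a high-availability cluster together with a STONITH device, which guarantees that there is only ever one primary.

Its working model resembles netfilter. The kernel provides a module with the functionality, but the module does nothing by itself. It only starts working after rules defined with the user-space management tool (drbdadm) are passed to the kernel.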

Architecture diagram:

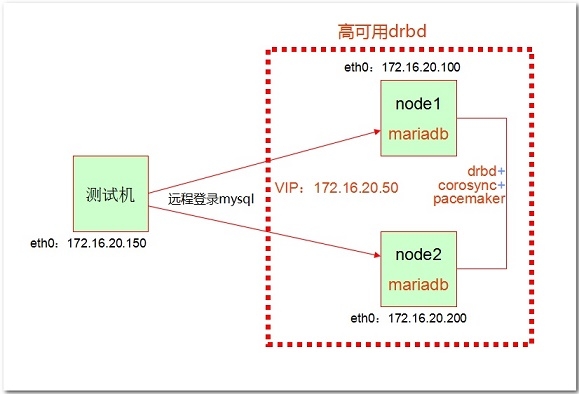

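Lab procedure:

1. Prepare two virtual machines as cluster nodes. Set up time synchronization, hostname-based access and SSH mutual trust, and configure the IP addresses as shown in the architecture diagram.

2. Provide a new partition (/dev/sda3 in this lab). Its major and minor device numbers may differ between the two nodes, but the sizes must be the same. It does not need to be formatted.

3. Set up DRBD in primary/secondary mode.

4. Install MariaDB to provide the database service on the DRBD storage.

5. Install corosync and pacemaker, and provide the crmsh command-line interface.

6. Define the cluster resources and start the highly available DRBD-backed service.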

Lab environment:

Three virtual machines

Kernel: 2.6.32-504.el6.x86_64

Distribution: CentOS-6.6-x86_64

No STONITH device

I. Configure DRBD

1. Prerequisites:

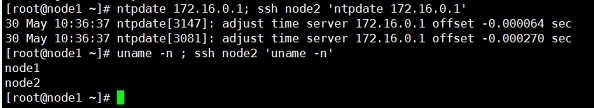

Time synchronization, hostname-based access, SSH mutual trust

Both nodes need the following:

① Time synchronization:

```
# ntpdate 172.16.0.1
31 May 19:54:06 ntpdate[51867]: step time server 172.16.0.1 offset 304.909926 sec
# crontab -e
*/3 * * * * /usr/sbin/ntpdate 172.16.0.1 &> /dev/null
```

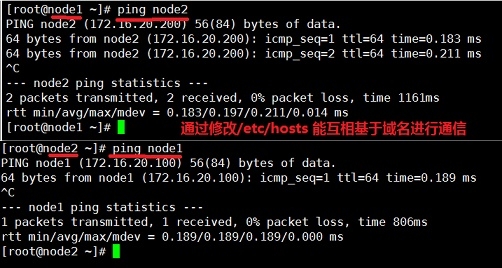

② Hostname-based access

```
# vim /etc/hosts
127.0.0.1   localhost.localdomain localhost.localdomain localhost4 localhost4.localdomain4 localhost
::1         localhost.localdomain localhost.localdomain localhost6 localhost6.localdomain6 localhost
172.16.0.1      server.magelinux.com server
172.16.20.100   node1
172.16.20.200   node2
```
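A small check like the following can confirm that the entries resolve as intended. `hosts_lookup` is a hypothetical helper and not part of any package. It is a minimal sketch that only reads a hosts-format file, whereas the system resolver (`getent hosts node1`) also consults DNS. It is also worth checking that `uname -n` prints node1 or node2 on the respective node, because DRBD matches the `on node1 { }` sections of its resource file against that name.

```bash
#!/usr/bin/env bash
# hosts_lookup FILE NAME
# Hypothetical helper: print the address on the first non-comment line of a
# hosts-format FILE that lists NAME as its hostname or one of its aliases.
hosts_lookup() {
    local file=$1 name=$2
    awk -v n="$name" '
        /^[ \t]*#/ { next }                  # skip comment lines
        {
            for (i = 2; i <= NF; i++)         # fields 2..NF are names and aliases
                if ($i == n) { print $1; exit }
        }' "$file"
}
```

For example, `hosts_lookup /etc/hosts node2` should print 172.16.20.200 on both nodes.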

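③ Set up SSH key authentication so that root on each node can log in to the other without a password. The commands below are run on node2; on node1, copy the key to root@node2 instead:

```
# ssh-keygen -t rsa -P ''
# ssh-copy-id -i /root/.ssh/id_rsa.pub root@node1
# ssh node1 'ifconfig'
```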

2. Provide a disk partition (both nodes must provide a storage partition of the same size):

# fdisk /dev/sda

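Create a new 5G partition. Either a primary partition or a logical partition inside an extended partition works. Here it is /dev/sda3, with size +5G.

Make the kernel re-read the partition table: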

# partx -a /dev/sda

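Note: the new partition does not need to be formatted.

3. Choosing and installing the packages:

Notes:

① Kernel-space package: kmod-drbd84-8.4.5-504.1.el6.x86_64.rpm. It installs only a kernel module. Everything else in it is documentation, and it has no configuration file.

Its release number must match the release number of the running kernel (check with # uname -r). Kernel modules are very strict about this!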

```
# uname -r
2.6.32-504.el6.x86_64
```
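The release-matching rule can be checked before installation with a rough heuristic like the one below. `kmod_release`, `kernel_release` and `kmod_matches_kernel` are hypothetical helpers. They compare only the leading release number taken from the names, which is 504 for both kmod-drbd84-8.4.5-504.1.el6 and 2.6.32-504.el6. rpm's own dependency checks during installation remain the real authority.

```bash
#!/usr/bin/env bash
# kmod_release PKGFILE, e.g. kmod-drbd84-8.4.5-504.1.el6.x86_64.rpm -> 504
kmod_release() {
    local p=${1%.rpm}      # drop the .rpm suffix
    p=${p%.*}              # drop the architecture, e.g. .x86_64
    p=${p##*-}             # keep the release field after the last '-'
    echo "${p%%.*}"        # keep its leading number
}

# kernel_release UNAME_R, e.g. 2.6.32-504.el6.x86_64 -> 504
kernel_release() {
    local r=${1#*-}        # drop the upstream version before the first '-'
    echo "${r%%.*}"        # keep the leading release number
}

# kmod_matches_kernel PKGFILE [UNAME_R]: succeed when the numbers agree;
# defaults to the running kernel
kmod_matches_kernel() {
    local k=${2:-$(uname -r)}
    [ "$(kmod_release "$1")" = "$(kernel_release "$k")" ]
}
```

For example, `kmod_matches_kernel kmod-drbd84-8.4.5-504.1.el6.x86_64.rpm && echo ok` compares the package against the kernel reported by `uname -r`.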

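② User-space package: there are more choices and the matching is less strict, but at least the major and minor version numbers must match (both drbd84 packages target DRBD 8.4):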

drbd84-utils-8.9.1-1.el6.elrepo.x86_64.rpm

Install both packages on both nodes:

lftp 172.16.0.1:/pub/Sources/6.x86_64/drbd> get kmod-drbd84-8.4.5-504.1.el6.x86_64.rpm drbd84-utils-8.9.1-1.el6.elrepo.x86_64.rpm

```
# rpm -ivh kmod-drbd84-8.4.5-504.1.el6.x86_64.rpm drbd84-utils-8.9.1-1.el6.elrepo.x86_64.rpm
warning: drbd84-utils-8.9.1-1.el6.elrepo.x86_64.rpm: Header V4 DSA/SHA1 Signature, key ID baadae52: NOKEY
Preparing...                ########################################### [100%]
   1:drbd84-utils           ########################################### [ 50%]
   2:kmod-drbd84            ########################################### [100%]
Working. This may take some time ...
Done.
```

(The NOKEY warning only means that the signing key has not been imported for verification. It can be safely ignored.)

4. Configure DRBD

Generate a random string to use as the shared secret for DRBD's network authentication in the file below:

```
# openssl rand -base64 16
5KY86Kw3TzZ4kHbZkrP8Hw==
```

Edit the configuration file:

# vim /etc/drbd.d/global_common.conf

```
global {
    usage-count no;
}

common {
    handlers {
    }

    startup {
    }

    options {
    }

    disk {
        on-io-error detach;
    }

    net {
        cram-hmac-alg "sha1";
        shared-secret "5KY86Kw3TzZ4kHbZkrP8Hw";
    }

    syncer {
        rate 500M;
    }
}
```

5. Define the resource:

Add a new file that defines the storage resource:

# vim /etc/drbd.d/mystore.res

```
resource mystore {
    device    /dev/drbd0;
    disk      /dev/sda3;
    meta-disk internal;

    on node1 {
        address 172.16.20.100:7789;
    }

    on node2 {
        address 172.16.20.200:7789;
    }
}
```

To keep the configuration identical on both nodes, copy the configuration files to node2 with scp over SSH:

```
# scp -r /etc/drbd.* node2:/etc/
drbd.conf                100%  133     0.1KB/s   00:00
global_common.conf       100% 2105     2.1KB/s   00:00
mystore.res              100%  171     0.2KB/s   00:00
```

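6. Initialize the defined resource on both nodes and start the service:

1) Initialize the resource. Run this on both node1 and node2:

```
# drbdadm create-md mystore
initializing activity log
NOT initializing bitmap
Writing meta data...
New drbd meta data block successfully created.
```

2) Start the service. Run this on both node1 and node2: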

```
[root@node1 ~]# service drbd start
Starting DRBD resources: [
     create res: mystore
   prepare disk: mystore
    adjust disk: mystore
     adjust net: mystore
]
.........
[root@node1 ~]#

[root@node2 ~]# drbdadm create-md mystore
initializing activity log
NOT initializing bitmap
Writing meta data...
New drbd meta data block successfully created.
[root@node2 ~]# service drbd start
Starting DRBD resources: [
     create res: mystore
   prepare disk: mystore
    adjust disk: mystore
     adjust net: mystore
]
.
[root@node2 ~]#
```

3) Check the status and set the primary node:

```
# cat /proc/drbd
version: 8.4.5 (api:1/proto:86-101)
GIT-hash: 1d360bde0e095d495786eaeb2a1ac76888e4db96 build by [email protected], 2015-01-02 12:06:20
 0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----
    ns:0 nr:0 dw:0 dr:0 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:5252056
```

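Note: Secondary/Secondary together with Inconsistent/Inconsistent means that both nodes are secondaries and the data on the two disks has not been synchronized yet.

You can also check the status with the drbd-overview command: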

```
# drbd-overview
  0:mystore/0  Connected Secondary/Secondary Inconsistent/Inconsistent
```
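The fields shown above (cs, ro, ds) are plain `key:value` tokens, so scripts can read them directly. The sketch below assumes the DRBD 8.4 /proc/drbd layout shown in this section and only looks at the first minor. `drbd_field` and `drbd_wait_uptodate` are hypothetical helpers, and the one-second polling interval is an arbitrary choice.

```bash
#!/usr/bin/env bash
# drbd_field KEY [FILE]
# Print the value of KEY (for example cs, ro or ds) for the first DRBD minor
# listed in /proc/drbd-style text.
drbd_field() {
    local key=$1 file=${2:-/proc/drbd}
    grep -oE "(^|[[:space:]])${key}:[^[:space:]]+" "$file" \
        | head -n 1 \
        | sed -E "s/^[[:space:]]*${key}://"
}

# drbd_wait_uptodate TIMEOUT_SECONDS [FILE]
# Poll once per second until ds is UpToDate/UpToDate; fail after the timeout.
drbd_wait_uptodate() {
    local timeout=$1 file=${2:-/proc/drbd} waited=0
    while [ "$(drbd_field ds "$file")" != "UpToDate/UpToDate" ]; do
        if [ "$waited" -ge "$timeout" ]; then
            return 1
        fi
        sleep 1
        waited=$((waited + 1))
    done
    return 0
}
```

After the Primary is promoted, `drbd_wait_uptodate 600 && echo synced` would wait up to ten minutes for the initial synchronization to reach UpToDate/UpToDate.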

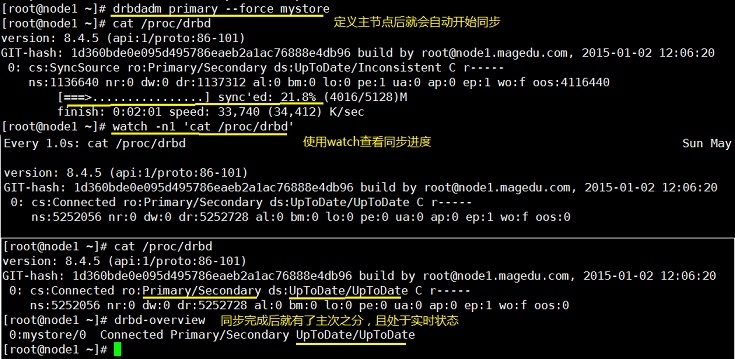

The output above shows that both nodes are currently Secondary, so one of them now has to become Primary. Run the following command on the node that should become Primary:

# drbdadm primary --force mystore

The very first promotion to Primary requires the "--force" option. Without it, the following error is reported:

0: State change failed: (-2) Need access to UpToDate data

Command 'drbdsetup-84 primary 0' terminated with exit code 17

Note: you can also make a node Primary by running the following on the node that should become Primary:

# drbdadm -- --overwrite-data-of-peer primary mystore

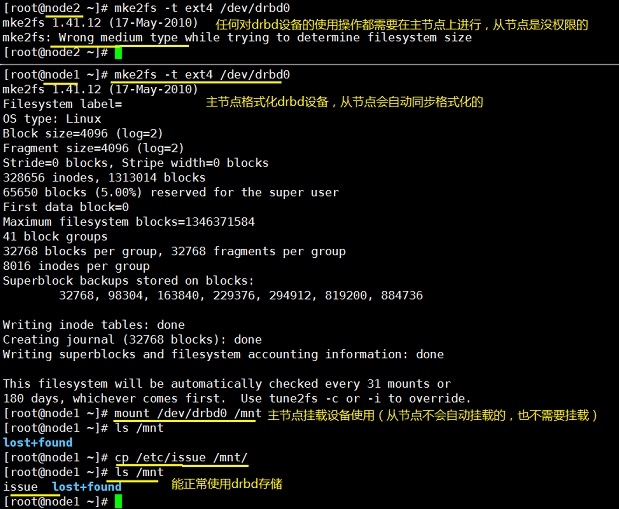

4) Format and mount the device on the Primary node only, then verify synchronization

```
# mke2fs -t ext4 /dev/drbd0
# mount /dev/drbd0 /mnt
```

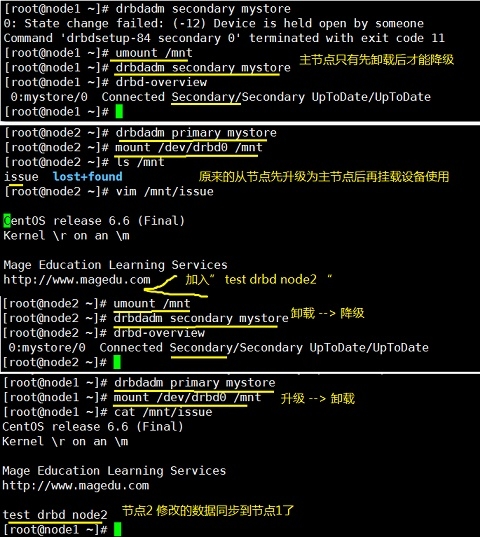

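5) Switching the Primary and Secondary nodes

In the Primary/Secondary model, only one DRBD node can be Primary at any moment. To swap the roles of the two nodes, you must first demote the current Primary to Secondary. Only then can the original Secondary be promoted to Primary:

The Primary unmounts the /dev/drbd# device --> the Primary demotes itself --> after the demotion succeeds, the Secondary promotes itself to Primary --> the new Primary mounts the /dev/drbd# device and uses it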

```
[root@node2 ~]# sync
[root@node2 ~]# umount /mnt
[root@node2 ~]# drbdadm secondary mystore
[root@node2 ~]# drbd-overview
  0:mystore/0  Connected Secondary/Secondary UpToDate/UpToDate

[root@node1 ~]# drbdadm primary mystore
[root@node1 ~]# mount /dev/drbd0 /mnt/
[root@node1 ~]# ls /mnt
issue  lost+found
[root@node1 ~]# touch /mnt/node1.txt
[root@node1 ~]# ls /mnt
issue  lost+found  node1.txt
[root@node1 ~]# umount /mnt
[root@node1 ~]# drbdadm secondary mystore
[root@node1 ~]# drbd-overview
  0:mystore/0  Connected Secondary/Secondary UpToDate/UpToDate
```
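The switchover order above can be wrapped in a script that runs on the current Primary. This is a minimal sketch. `run` and `drbd_handover` are hypothetical helpers, and the script assumes the root SSH trust set up in part I. It stops at the first failed step, so the peer is never promoted while this node is still Primary. Setting `DRY_RUN=1` prints the commands instead of executing them.

```bash
#!/usr/bin/env bash
# run: execute a command, or only print it when DRY_RUN=1
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

# drbd_handover RESOURCE DEVICE MOUNTPOINT PEER
# Hypothetical helper, run on the current Primary: hand the resource over to
# PEER in the documented order, stopping at the first failed step.
drbd_handover() {
    local res=$1 dev=$2 mnt=$3 peer=$4
    run sync                               || return 1  # flush pending writes
    run umount "$mnt"                      || return 1  # release the device
    run drbdadm secondary "$res"           || return 1  # demote this node first
    run ssh "$peer" drbdadm primary "$res" || return 1  # then promote the peer
    run ssh "$peer" mount "$dev" "$mnt"                 # mount on the new Primary
}
```

On node2 in the transcript above, `drbd_handover mystore /dev/drbd0 /mnt node1` would perform the same steps. Running it with `DRY_RUN=1` first is an easy way to review them.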

II. Provide the MariaDB service

1. Create a dedicated user:

```
# groupadd -r -g 27 mysql
# useradd -r -u 27 -g 27 mysql
# id mysql
uid=27(mysql) gid=27(mysql) groups=27(mysql)
```

Do this on both nodes. The UID and GID of the mysql user and the GID of the mysql group must be identical on both nodes.
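The IDs must match because the file system on /dev/drbd0 stores ownership as numeric UID/GID. Files that mysql creates on one node are owned by mysql on the other node only if the numbers agree. Below is a minimal comparison sketch. `id_numbers` is a hypothetical helper that extracts the numbers from the output of `id`.

```bash
#!/usr/bin/env bash
# id_numbers "ID_OUTPUT": print "UID GID" from the output of `id USER`,
# e.g. "uid=27(mysql) gid=27(mysql) groups=27(mysql)" -> "27 27"
id_numbers() {
    sed -E 's/^uid=([0-9]+)\([^)]*\) gid=([0-9]+).*/\1 \2/' <<< "$1"
}
```

For example, `[ "$(id_numbers "$(id mysql)")" = "$(id_numbers "$(ssh node2 id mysql)")" ] && echo match` compares the local account with the one on node2.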

2. Install the MariaDB binary distribution

Package: mariadb-5.5.43-linux-x86_64.tar.gz

First, create a dedicated mount point for drbd0 on both nodes:

# mkdir /mydata

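Promote one node to Primary and leave the other as Secondary. Then mount the device on the Primary with `mount /dev/drbd0 /mydata` (the listing of /mydata below assumes it is mounted):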

```
[root@node1 ~]# drbd-overview
  0:mystore/0  Connected Secondary/Secondary UpToDate/UpToDate
[root@node1 ~]# drbdadm primary mystore
[root@node1 ~]# drbd-overview
  0:mystore/0  Connected Primary/Secondary UpToDate/UpToDate
```

Install MariaDB and initialize its data directory on the Primary node:

```
[root@node1 ~]# tar xf mariadb-5.5.43-linux-x86_64.tar.gz -C /usr/local/
[root@node1 ~]# ln -sv /usr/local/mariadb-5.5.43-linux-x86_64/ /usr/local/mysql
`/usr/local/mysql' -> `/usr/local/mariadb-5.5.43-linux-x86_64/'
[root@node1 ~]# cd /usr/local/mysql
[root@node1 mysql]# ls
bin      COPYING.LESSER  EXCEPTIONS-CLIENT  INSTALL-BINARY  man         README   share      support-files
COPYING  data            include            lib             mysql-test  scripts  sql-bench
[root@node1 mysql]# ll
total 220
……
drwxr-xr-x 4 root root 4096 Jun  1 20:22 sql-bench
drwxr-xr-x 3 root root 4096 Jun  1 20:22 support-files
[root@node1 mysql]# chown -R root:mysql ./*
[root@node1 mysql]# mkdir /mydata/data
[root@node1 mysql]# chown -R mysql:mysql /mydata/data
[root@node1 mysql]# ll /mydata
total 24
drwxr-xr-x 2 mysql mysql  4096 Jun  1 20:31 data
-rw-r--r-- 1 root  root    103 Jun  1 20:02 issue
drwx------ 2 root  root  16384 Jun  1 20:01 lost+found
-rw-r--r-- 1 root  root      0 Jun  1 20:05 node1.txt
[root@node1 mysql]# ./scripts/mysql_install_db --user=mysql --datadir=/mydata/data
[root@node1 mysql]# ls /mydata/data
aria_log.00000001  aria_log_control  mysql  performance_schema  test
```

After initialization, set up the service script and the configuration file. mysqld is disabled at boot because the cluster will manage it later:

```
[root@node1 mysql]# cp support-files/mysql.server /etc/rc.d/init.d/mysqld
[root@node1 mysql]# chkconfig --add mysqld
[root@node1 mysql]# chkconfig mysqld off
[root@node1 mysql]# chkconfig | grep mysqld
mysqld          0:off   1:off   2:off   3:off   4:off   5:off   6:off
[root@node1 mysql]# mkdir /etc/mysql
[root@node1 mysql]# cp support-files/my-large.cnf /etc/mysql/my.cnf
[root@node1 mysql]# vim /etc/mysql/my.cnf
```

Add the following three options to the [mysqld] section:

```
datadir = /mydata/data
innodb_file_per_table = on
skip_name_resolve = on
```

Verify that the MariaDB service works on the Primary node:

```
[root@node1 mysql]# service mysqld start
Starting MySQL...                                          [  OK  ]
[root@node1 mysql]# /usr/local/mysql/bin/mysql
MariaDB [(none)]> GRANT ALL ON *.* TO "root"@"172.16.20.%" IDENTIFIED BY "123";
Query OK, 0 rows affected (0.01 sec)

MariaDB [(none)]> FLUSH PRIVILEGES;
Query OK, 0 rows affected (0.00 sec)

MariaDB [(none)]> \q
Bye
```

Demote node1 to Secondary and promote node2 to Primary, so that the MariaDB service can be set up on node2. No database initialization is needed there:

```
[root@node1 mysql]# service mysqld stop
Shutting down MySQL.                                       [  OK  ]
[root@node1 mysql]# umount /mydata
[root@node1 mysql]# drbdadm secondary mystore
[root@node1 mysql]# drbd-overview
  0:mystore/0  Connected Secondary/Secondary UpToDate/UpToDate

[root@node2 ~]# drbd-overview
  0:mystore/0  Connected Secondary/Secondary UpToDate/UpToDate
[root@node2 ~]# drbdadm primary mystore
[root@node2 ~]# drbd-overview
  0:mystore/0  Connected Primary/Secondary UpToDate/UpToDate
[root@node2 ~]# mount /dev/drbd0 /mydata
[root@node2 ~]# ls /mydata
data  issue  lost+found  node1.txt
[root@node2 ~]# ll /mydata
total 24
drwxr-xr-x 6 mysql mysql  4096 Jun  1 21:40 data
-rw-r--r-- 1 root  root    103 Jun  1 20:02 issue
drwx------ 2 root  root  16384 Jun  1 20:01 lost+found
-rw-r--r-- 1 root  root      0 Jun  1 20:05 node1.txt
[root@node2 ~]# ll /mydata/data/
total 28720
-rw-rw---- 1 mysql mysql    16384 Jun  1 21:40 aria_log.00000001
-rw-rw---- 1 mysql mysql       52 Jun  1 21:40 aria_log_control
-rw-rw---- 1 mysql mysql 18874368 Jun  1 21:40 ibdata1
-rw-rw---- 1 mysql mysql  5242880 Jun  1 21:40 ib_logfile0
……
[root@node2 ~]# tar xf mariadb-5.5.43-linux-x86_64.tar.gz -C /usr/local/
[root@node2 ~]# ln -sv /usr/local/mariadb-5.5.43-linux-x86_64/ /usr/local/mysql
`/usr/local/mysql' -> `/usr/local/mariadb-5.5.43-linux-x86_64/'
[root@node2 ~]# mkdir /etc/mysql

[root@node1 mysql]# scp /etc/mysql/my.cnf node2:/etc/mysql/
my.cnf                   100% 4974     4.9KB/s   00:00
[root@node1 mysql]# scp /etc/rc.d/init.d/mysqld node2:/etc/rc.d/init.d/
mysqld                   100%   12KB  11.9KB/s   00:00
```

Verify that node2 works:

```
[root@node2 ~]# service mysqld start
Starting MySQL...                                          [  OK  ]
[root@node2 ~]# /usr/local/mysql/bin/mysql
MariaDB [(none)]> show databases;
+--------------------+
| Database           |
+--------------------+
| information_schema |
| mysql              |
| performance_schema |
| test               |
| testdb             |
+--------------------+
5 rows in set (0.06 sec)

MariaDB [(none)]> create database test2db;
Query OK, 1 row affected (0.01 sec)

MariaDB [(none)]> show databases;
+--------------------+
| Database           |
+--------------------+
| information_schema |
| mysql              |
| performance_schema |
| test               |
| test2db            |
| testdb             |
+--------------------+
6 rows in set (0.00 sec)

MariaDB [(none)]> \q
Bye
[root@node2 ~]#
```

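node2 works as well. The MariaDB service is now in place.

(If DRBD goes into split brain while you install MariaDB and devices /dev/drbd0 through /dev/drbd15 appear, you can stop the service with service drbd stop, delete the disk partition and recreate it, and then run "# drbdadm primary --force mystore" again to re-initialize synchronization of drbd0. Once synchronization completes, initialize MariaDB again.

From then on, always promote a node to Primary before you mount drbd0.

!! To move the mount to the other node, first umount, then demote. On the other node, first promote to Primary, then mount !!

!! In short, whatever you do, a host must be in the Primary state whenever it mounts the device !!)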

III. Install corosync and pacemaker

1. Install the main packages on both nodes

Main packages:

corosync-1.4.7-1.el6.x86_64

pacemaker-1.1.12-4.el6.x86_64

```
# yum install -y corosync pacemaker
……
Installed:
  corosync.x86_64 0:1.4.7-1.el6
  pacemaker.x86_64 0:1.1.12-4.el6

Dependency Installed:
  clusterlib.x86_64 0:3.0.12.1-68.el6
  corosynclib.x86_64 0:1.4.7-1.el6
  libibverbs.x86_64 0:1.1.8-3.el6
  libqb.x86_64 0:0.16.0-2.el6
  librdmacm.x86_64 0:1.0.18.1-1.el6
  lm_sensors-libs.x86_64 0:3.1.1-17.el6
  net-snmp-libs.x86_64 1:5.5-49.el6_5.3
  pacemaker-cli.x86_64 0:1.1.12-4.el6
  pacemaker-cluster-libs.x86_64 0:1.1.12-4.el6
  pacemaker-libs.x86_64 0:1.1.12-4.el6
  perl-TimeDate.noarch 1:1.16-13.el6
  resource-agents.x86_64 0:3.9.5-12.el6

Complete!
```

2. Provide the configuration file:

# cp /etc/corosync/corosync.conf.example /etc/corosync/corosync.conf

# vim /etc/corosync/corosync.conf

Replace its contents with the following:

```
compatibility: whitetank

totem {
    version: 2
    secauth: on
    threads: 0
    interface {
        ringnumber: 0
        bindnetaddr: 172.16.0.0
        mcastaddr: 239.255.11.11
        mcastport: 5405
        ttl: 1
    }
}

logging {
    fileline: off
    to_stderr: no
    to_logfile: yes
    logfile: /var/log/cluster/corosync.log
    to_syslog: yes
    debug: off
    timestamp: on
    logger_subsys {
        subsys: AMF
        debug: off
    }
}

service {
    ver: 0
    name: pacemaker
    use_mgmtd: yes
}

aisexec {
    user: root
    group: root
}
```

The service section makes pacemaker run as a corosync plugin (with ver: 0, corosync itself starts the pacemaker daemons).

3. Various checks

Check that multicast is enabled on the NIC:

```
# ip link show
1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
    link/ether 00:0c:29:10:05:bf brd ff:ff:ff:ff:ff:ff
```

(# ip addr shows this as well. Look for MULTICAST among the flags of eth0.)

If it is not enabled, turn it on with:

# ip link set eth0 multicast on

Generate corosync's key file:

# corosync-keygen

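If the entropy pool does not hold enough random data, you can generate more by downloading a large file from the network or by typing on the keyboard.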
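You can check whether the pool is running low before you generate the key. corosync-keygen reads /dev/random to build the 128-byte authkey, and /dev/random blocks while the kernel's entropy estimate is low. The sketch reads the standard Linux file /proc/sys/kernel/random/entropy_avail. The helpers are hypothetical, and the 1024-bit threshold (128 bytes) is an assumption, not a documented corosync requirement.

```bash
#!/usr/bin/env bash
# entropy_avail [FILE]: print the kernel's current entropy estimate in bits
entropy_avail() {
    cat "${1:-/proc/sys/kernel/random/entropy_avail}"
}

# enough_entropy MIN_BITS [FILE]: succeed when the estimate is at least MIN_BITS
enough_entropy() {
    [ "$(entropy_avail "$2")" -ge "$1" ]
}
```

For example, `enough_entropy 1024 || echo "entropy is low; generate some disk or keyboard activity first"` can be run just before `corosync-keygen`.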

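Copy the generated key file and the corosync configuration file to node2, preserving all attributes, and make sure the permissions are correct:

```
# scp -p /etc/corosync/{corosync.conf,authkey} node2:/etc/corosync/
corosync.conf            100% 2757     2.7KB/s   00:00
authkey                  100%  128     0.1KB/s   00:00

[root@node2 ~]# ll /etc/corosync/authkey
-r-------- 1 root root 128 May 30 11:56 authkey
```

(The key file must have mode 400 or 600. If it does not, fix it manually with chmod.)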
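Below is a hypothetical helper for the permission check. It uses GNU `stat -c %a`, which prints the permission bits in octal.

```bash
#!/usr/bin/env bash
# authkey_mode_ok [FILE]: succeed when FILE's permission bits are 400 or 600
authkey_mode_ok() {
    local f=${1:-/etc/corosync/authkey} mode
    mode=$(stat -c %a "$f") || return 1   # GNU stat: octal permission bits
    [ "$mode" = 400 ] || [ "$mode" = 600 ]
}
```

Running `authkey_mode_ok || chmod 400 /etc/corosync/authkey` on each node applies the fix only when it is needed.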

Start corosync on both nodes:

```
[root@node1 corosync]# service corosync start ; ssh node2 'service corosync start'
Starting Corosync Cluster Engine (corosync):               [  OK  ]
Starting Corosync Cluster Engine (corosync):               [  OK  ]
```

Verify that the corosync engine started correctly:

```
[root@node1 corosync]# ss -unlp | grep corosync
UNCONN  0  0  172.16.20.100:5404  *:*  users:(("corosync",14270,13))
UNCONN  0  0  172.16.20.100:5405  *:*  users:(("corosync",14270,14))
UNCONN  0  0  239.255.11.11:5405  *:*  users:(("corosync",14270,10))

[root@node1 corosync]# grep -e "Corosync Cluster Engine" -e "configuration file" /var/log/cluster/corosync.log
Jun 01 22:15:57 corosync [MAIN ] Corosync Cluster Engine ('1.4.7'): started and ready to provide service.
Jun 01 22:15:57 corosync [MAIN ] Successfully read main configuration file '/etc/corosync/corosync.conf'.
```

Check that the initial membership notifications were sent correctly:

```
[root@node1 corosync]# grep TOTEM /var/log/cluster/corosync.log
Jun 01 22:15:57 corosync [TOTEM ] Initializing transport (UDP/IP Multicast).
Jun 01 22:15:57 corosync [TOTEM ] Initializing transmit/receive security: libtomcrypt SOBER128/SHA1HMAC (mode 0).
Jun 01 22:15:57 corosync [TOTEM ] The network interface [172.16.20.100] is now up.
Jun 01 22:15:57 corosync [TOTEM ] A processor joined or left the membership and a new membership was formed.
Jun 01 22:15:57 corosync [TOTEM ] A processor joined or left the membership and a new membership was formed.
```

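Check whether any errors occurred during startup. The first two errors below state that pacemaker will soon no longer run as a corosync plugin, and they recommend cman as the cluster infrastructure. In this lab they can be safely ignored: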

```
[root@node1 corosync]# grep ERROR: /var/log/cluster/corosync.log | grep -v unpack_resources
Jun 01 22:15:57 corosync [pcmk ] ERROR: process_ais_conf: You have configured a cluster using the Pacemaker plugin for Corosync. The plugin is not supported in this environment and will be removed very soon.
Jun 01 22:15:57 corosync [pcmk ] ERROR: process_ais_conf: Please see Chapter 8 of 'Clusters from Scratch' (http://www.clusterlabs.org/doc) for details on using Pacemaker with CMAN
Jun 01 22:15:58 corosync [pcmk ] ERROR: pcmk_wait_dispatch: Child process mgmtd exited (pid=14282, rc=100)
```

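Notes:

The first and second errors can be safely ignored.

The third error reports that the mgmtd child process exited. After checking /var/log/messages or running crm_verify -L, it turns out there is no need to uninstall and reinstall anything. mgmtd is the management daemon that use_mgmtd: yes in the service section asks the plugin to start, and its exit does not affect corosync, so this error can also be safely ignored. The errors caused by the missing STONITH device are the unpack_resources lines filtered out by grep -v above. They go away once stonith-enabled=false is set later.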

Check that pacemaker started correctly:

```
[root@node1 corosync]# grep pcmk_startup /var/log/cluster/corosync.log
Jun 01 22:15:57 corosync [pcmk ] info: pcmk_startup: CRM: Initialized
Jun 01 22:15:57 corosync [pcmk ] Logging: Initialized pcmk_startup
Jun 01 22:15:57 corosync [pcmk ] info: pcmk_startup: Maximum core file size is: 18446744073709551615
Jun 01 22:15:57 corosync [pcmk ] info: pcmk_startup: Service: 9
Jun 01 22:15:57 corosync [pcmk ] info: pcmk_startup: Local hostname: node1
```

All of the checks above are either normal or safe to ignore. Run the same commands on node2 to confirm that it is healthy as well.
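The checks above can be bundled into one function, so that the same test is easy to repeat on node2. `corosync_log_ok` is a hypothetical helper. It looks for the startup messages shown above and treats every ERROR line as a failure except the three patterns discussed (unpack_resources, process_ais_conf and the mgmtd exit). That whitelist is an assumption specific to this setup.

```bash
#!/usr/bin/env bash
# corosync_log_ok [LOG]
# Hypothetical helper: succeed when the engine started, the pacemaker plugin
# initialized, and no unexpected ERROR lines are present.
corosync_log_ok() {
    local log=${1:-/var/log/cluster/corosync.log}
    grep -q "started and ready to provide service" "$log" || return 1
    grep -q "pcmk_startup: CRM: Initialized" "$log"       || return 1
    # Fail if any ERROR line survives the whitelist of known, harmless errors.
    ! grep "ERROR:" "$log" \
        | grep -v -e unpack_resources -e process_ais_conf \
                  -e "Child process mgmtd exited" \
        | grep -q .
}
```

To reuse the local definition on the peer, something like `ssh node2 "$(declare -f corosync_log_ok); corosync_log_ok" && echo node2 ok` should work, because `declare -f` prints the function's source.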

4. Install the command-line client crmsh and the pssh package it depends on:

Download the packages from ftp://172.16.0.1:

lftp 172.16.0.1:/pub/Sources/6.x86_64/crmsh> get pssh-2.3.1-2.el6.x86_64.rpm

lftp 172.16.0.1:/pub/Sources/6.x86_64/corosync> get crmsh-2.1-1.6.x86_64.rpm

crmsh-2.1-1.6.x86_64.rpm pssh-2.3.1-2.el6.x86_64.rpm

crmsh usually only needs to be installed on one node, but for convenience you can install it on both:

```
# yum install --nogpgcheck -y crmsh-2.1-1.6.x86_64.rpm pssh-2.3.1-2.el6.x86_64.rpm
Installed:
  crmsh.x86_64 0:2.1-1.6
  pssh.x86_64 0:2.3.1-2.el6

Dependency Installed:
  python-dateutil.noarch 0:1.4.1-6.el6
  python-lxml.x86_64 0:2.2.3-1.1.el6

Complete!
```

Once crmsh is installed, you can view node information and start using it.

At this point there are 0 resources and 2 nodes:

```
# crm status
Last updated: Mon Jun 1 22:49:35 2015
Last change: Mon Jun 1 22:16:07 2015
Stack: classic openais (with plugin)
Current DC: node2 - partition with quorum
Version: 1.1.11-97629de
2 Nodes configured, 2 expected votes
0 Resources configured


Online: [ node1 node2 ]
[root@node1 ~]#
```

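IV. Configure resources and enable high availability

1. Preparation before configuring resources

On both nodes, stop the services that the cluster will manage and disable them at boot. From now on, Pacemaker starts and stops them: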

```
# chkconfig drbd off
# chkconfig | grep drbd
drbd            0:off   1:off   2:off   3:off   4:off   5:off   6:off

[root@node2 ~]# service mysqld stop
Shutting down MySQL.                                       [  OK  ]
[root@node2 ~]# umount /mydata
[root@node2 ~]# drbdadm secondary mystore
[root@node2 ~]# drbd-overview
  0:mystore/0  Connected Secondary/Secondary UpToDate/UpToDate
```

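2. Configure global cluster properties:

Two properties are required in this lab:

① stonith-enabled=false: we are not using a STONITH device, and without this option a serious error is reported.

② no-quorum-policy=ignore: when one of the two nodes goes offline, the surviving node no longer has quorum. Without this option it would not start the resources automatically.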
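The arithmetic behind the second property can be sketched as follows. The sketch assumes the usual majority rule, under which a partition has quorum only if it holds more than half of the expected votes. `has_quorum` is a hypothetical helper for illustration. With 2 expected votes, as reported by crm status above, a lone surviving node holds 1 of 2 votes, which is not a strict majority. Under the default no-quorum-policy (stop), Pacemaker would therefore stop its resources.

```bash
#!/usr/bin/env bash
# has_quorum VOTES EXPECTED
# Hypothetical helper: succeed when VOTES is a strict majority of EXPECTED,
# i.e. 2 * VOTES > EXPECTED.
has_quorum() {
    [ $(( 2 * $1 )) -gt "$2" ]
}

# Both nodes up, then one node left, in a two-node cluster.
for votes in 2 1; do
    if has_quorum "$votes" 2; then
        echo "$votes of 2 votes: quorum"
    else
        echo "$votes of 2 votes: no quorum"
    fi
done
```

This is why a two-node cluster without a third vote needs `no-quorum-policy=ignore` to keep running services on the surviving node.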

```
[root@node1 ~]# crm
crm(live)# configure show
node node1
node node2
property cib-bootstrap-options: \
    dc-version=1.1.11-97629de \
    cluster-infrastructure="classic openais (with plugin)" \
    expected-quorum-votes=2
crm(live)# configure
crm(live)configure# property stonith-enabled=false
crm(live)configure# property no-quorum-policy=ignore
crm(live)configure# verify
crm(live)configure# commit
crm(live)configure# show
node node1
node node2
property cib-bootstrap-options: \
    dc-version=1.1.11-97629de \
    cluster-infrastructure="classic openais (with plugin)" \
    expected-quorum-votes=2 \
    stonith-enabled=false \
    no-quorum-policy=ignore
crm(live)configure#
```

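3. Configure the resources

Whether you define a master/slave resource or a clone resource, it must start out as a primitive. First define a primitive resource, then turn that primitive into a clone or master/slave resource.

Four resources need to be defined this time:

① IP: 172.16.20.50

② The MariaDB service

③ DRBD primary/secondary

④ The Filesystem mount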

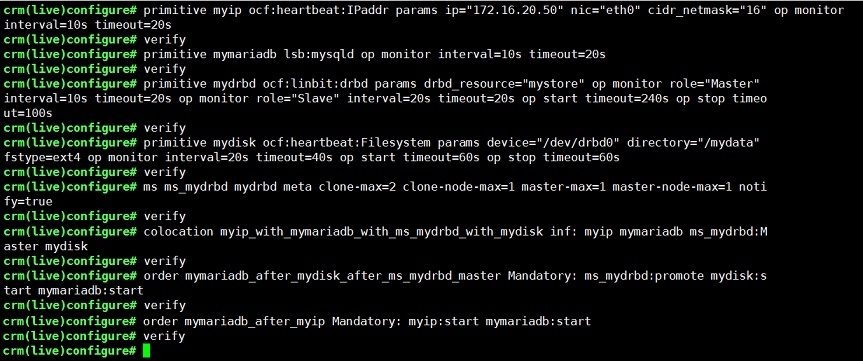

Define the four primitive resources and one master/slave resource. Run verify after each definition so that mistakes can be corrected right away:

```
crm(live)configure# primitive myip ocf:heartbeat:IPaddr params ip="172.16.20.50" nic="eth0" cidr_netmask="16" op monitor interval=10s timeout=20s
crm(live)configure# verify
crm(live)configure# primitive mymariadb lsb:mysqld op monitor interval=10s timeout=20s
crm(live)configure# verify
crm(live)configure# primitive mydrbd ocf:linbit:drbd params drbd_resource="mystore" op monitor role="Master" interval=10s timeout=20s op monitor role="Slave" interval=20s timeout=20s op start timeout=240s op stop timeout=100s
crm(live)configure# verify
crm(live)configure# primitive mydisk ocf:heartbeat:Filesystem params device="/dev/drbd0" directory="/mydata" fstype=ext4 op monitor interval=20s timeout=40s op start timeout=60s op stop timeout=60s
crm(live)configure# verify
crm(live)configure# ms ms_mydrbd mydrbd meta clone-max=2 clone-node-max=1 master-max=1 master-node-max=1 notify=true
crm(live)configure# verify
```

Define the colocation constraint, which specifies which resources must run together on the same node:

```
crm(live)configure# colocation myip_with_mymariadb_with_ms_mydrbd_with_mydisk inf: myip mymariadb ms_mydrbd:Master mydisk
crm(live)configure# verify
```

Define the order constraints, that is, the start order, which runs from left to right:

```
crm(live)configure# order mymariadb_after_mydisk_after_ms_mydrbd_master Mandatory: ms_mydrbd:promote mydisk:start mymariadb:start
crm(live)configure# verify
crm(live)configure# order mymariadb_after_myip Mandatory: myip:start mymariadb:start
crm(live)configure# verify
crm(live)configure# commit
```

The final resource configuration is as follows:

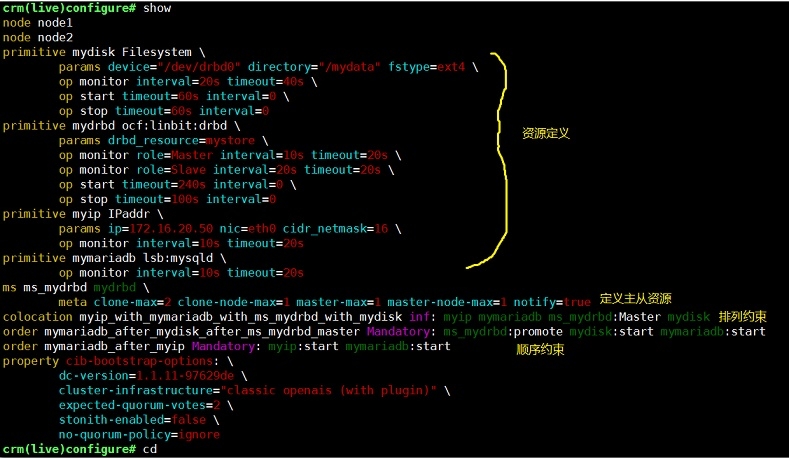

```
crm(live)configure# show
node node1
node node2
primitive mydisk Filesystem \
    params device="/dev/drbd0" directory="/mydata" fstype=ext4 \
    op monitor interval=20s timeout=40s \
    op start timeout=60s interval=0 \
    op stop timeout=60s interval=0
primitive mydrbd ocf:linbit:drbd \
    params drbd_resource=mystore \
    op monitor role=Master interval=10s timeout=20s \
    op monitor role=Slave interval=20s timeout=20s \
    op start timeout=240s interval=0 \
    op stop timeout=100s interval=0
primitive myip IPaddr \
    params ip=172.16.20.50 nic=eth0 cidr_netmask=16 \
    op monitor interval=10s timeout=20s
primitive mymariadb lsb:mysqld \
    op monitor interval=10s timeout=20s
ms ms_mydrbd mydrbd \
    meta clone-max=2 clone-node-max=1 master-max=1 master-node-max=1 notify=true
colocation myip_with_mymariadb_with_ms_mydrbd_with_mydisk inf: myip mymariadb ms_mydrbd:Master mydisk
order mymariadb_after_mydisk_after_ms_mydrbd_master Mandatory: ms_mydrbd:promote mydisk:start mymariadb:start
order mymariadb_after_myip Mandatory: myip:start mymariadb:start
property cib-bootstrap-options: \
    dc-version=1.1.11-97629de \
    cluster-infrastructure="classic openais (with plugin)" \
    expected-quorum-votes=2 \
    stonith-enabled=false \
    no-quorum-policy=ignore
crm(live)configure# cd
```

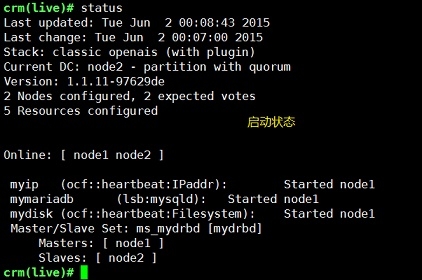

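After the commit above, the resources start running. The status shows 2 nodes and 5 resources in total. node1 is currently the Master, and all resources run on node1: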

```
crm(live)# status
Last updated: Tue Jun 2 00:08:43 2015
Last change: Tue Jun 2 00:07:00 2015
Stack: classic openais (with plugin)
Current DC: node2 - partition with quorum
Version: 1.1.11-97629de
2 Nodes configured, 2 expected votes
5 Resources configured


Online: [ node1 node2 ]

 myip       (ocf::heartbeat:IPaddr):        Started node1
 mymariadb  (lsb:mysqld):                   Started node1
 mydisk     (ocf::heartbeat:Filesystem):    Started node1
 Master/Slave Set: ms_mydrbd [mydrbd]
     Masters: [ node1 ]
     Slaves: [ node2 ]
crm(live)#
```

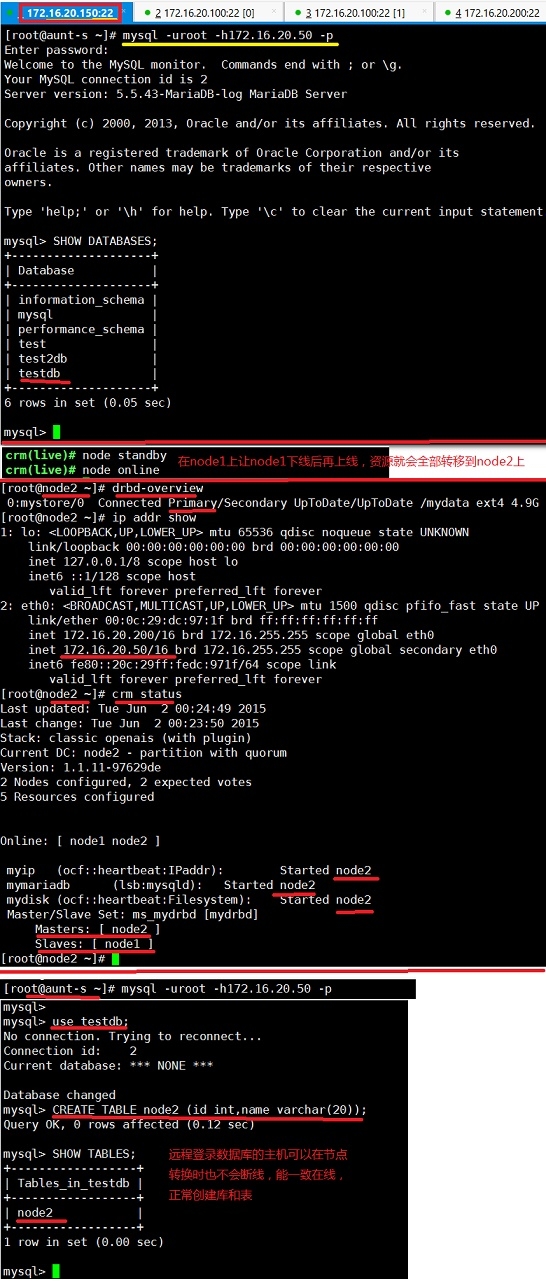

4. Verification and use after configuration
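A failover drill is a simple way to verify the setup. The sketch below uses real crmsh commands (`crm node standby`, `crm node online`, `crm status`) but is otherwise only an illustration. `run` and `failover_test` are hypothetical helpers, and the 30-second settle time is an arbitrary assumption. The MariaDB query goes through the virtual IP 172.16.20.50 using the root@172.16.20.% account granted in part II, so it has to run from a host in 172.16.20.x. With `DRY_RUN=1` the script only prints the commands.

```bash
#!/usr/bin/env bash
# run: execute a command, or only print it when DRY_RUN=1
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

# failover_test ACTIVE_NODE [SETTLE_SECONDS]
# Hypothetical drill: put the node running the resources into standby, show
# where the resources went, query MariaDB through the virtual IP, then bring
# the node back online.
failover_test() {
    local node=$1 settle=${2:-30}
    run crm node standby "$node" || return 1
    run sleep "$settle"                      # give Pacemaker time to move resources
    run crm status
    run /usr/local/mysql/bin/mysql -h 172.16.20.50 -u root -p -e "SHOW DATABASES;"
    run crm node online "$node"
}
```

After `failover_test node1`, crm status would be expected to list every resource as Started on node2 with Masters: [ node2 ], and the database list should still include test2db.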

Building highly available MariaDB with DRBD and corosync/pacemaker is now complete.