I. Introduction to DRBD

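DRBD stands for Distributed Replicated Block Device and is an open-source project. It replicates data at the block-device level, which is considerably faster than file-level services such as NFS or Samba, and because every node holds a full copy of the data, the storage layer is not a single point of failure. This makes it a preferred shared-storage solution for many small and medium-sized businesses.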

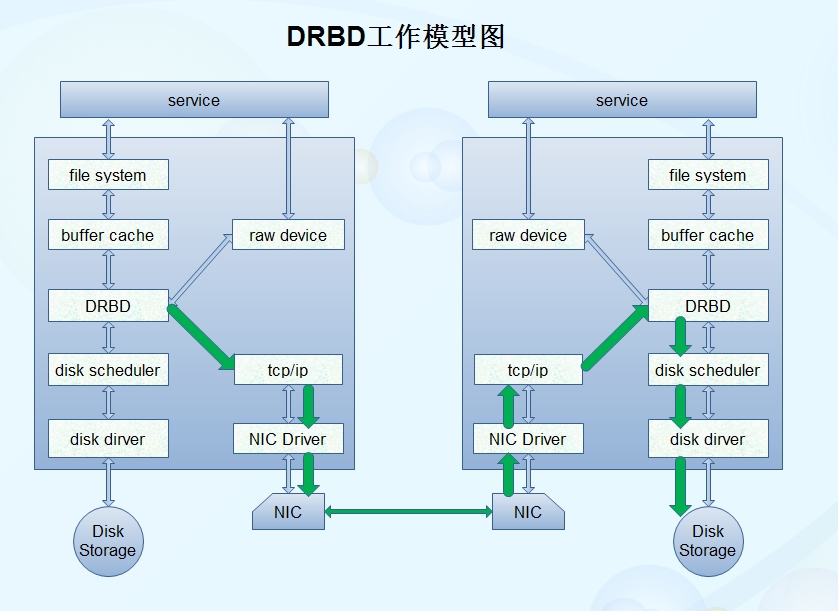

II. How DRBD Works

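As the figure above shows, DRBD runs inside the kernel, between the buffer cache and the disk scheduler. It takes a copy of the binary data passing between those two layers, encapsulates it in TCP/IP, and sends it through the network card to the other DRBD node, which keeps the two nodes in sync.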

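DRBD can run in primary/secondary mode (one node active, the other a backup) or in dual-primary mode (both nodes active at the same time). Dual-primary mode must be built on top of a high-availability cluster. In either mode, every node has to provide a disk or partition of the same size and with the same name.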

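III. Building a DRBD Primary/Secondary Setup

1. Prepare the environment

1). System: CentOS 6.6; kernel 2.6.32-504.el6.x86_64

2). Two nodes

node1.wuhf.com: 172.16.13.13

node2.wuhf.com: 172.16.13.14

3). Two disk partitions (one on each node)

/dev/sda5, 512 MB

4). Time synchronization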

ntpdate -u 172.16.0.1

crontab -e

*/3 * * * * /usr/sbin/ntpdate 172.16.0.1 &> /dev/null

5). Key-based SSH access between the nodes

ssh-keygen -t rsa -f /root/.ssh/id_rsa -P ''

ssh-copy-id -i /root/.ssh/id_rsa.pub root@IP
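Step 4) adds the cron entry interactively with crontab -e. When both nodes are prepared from a script, the same entry can be installed non-interactively. The following is only a minimal sketch and not part of the original procedure: the helper name ensure_line is made up for illustration, and it assumes the entry contains no backslashes (awk would interpret them).

```shell
#!/bin/sh
# ensure_line (hypothetical helper): copy stdin to stdout and append LINE
# only if an identical line is not already present.
ensure_line() {
    awk -v want="$1" '
        { print }
        $0 == want { seen = 1 }
        END { if (!seen) print want }
    '
}

# The cron entry from step 4), unchanged.
NTP_ENTRY='*/3 * * * * /usr/sbin/ntpdate 172.16.0.1 &> /dev/null'

# Usage (run as root on each node):
#   crontab -l 2>/dev/null | ensure_line "$NTP_ENTRY" | crontab -
```

Because the helper only appends when the line is missing, running it repeatedly leaves a single copy of the entry in the crontab.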

2. Install the packages

Download the packages that match your kernel version to a local directory:

drbd84-utils-8.9.1-1.el6.elrepo.x86_64.rpm

kmod-drbd84-8.4.5-504.1.el6.x86_64.rpm

rpm -ivh drbd84-utils-8.9.1-1.el6.elrepo.x86_64.rpm kmod-drbd84-8.4.5-504.1.el6.x86_64.rpm

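3. Configuration files

The main DRBD configuration file is /etc/drbd.conf. To keep it manageable, the configuration is usually split into several files under /etc/drbd.d/, and the main file only pulls them in with "include" directives. The files in /etc/drbd.d/ are normally global_common.conf plus every file ending in .res: global_common.conf defines the global and common sections, and each .res file defines one resource.

vim /etc/drbd.d/global_common.conf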

global {

usage-count no; # do not take part in DRBD's online usage statistics

# minor-count dialog-refresh disable-ip-verification

}

common {

protocol C; # synchronous replication

handlers {

pri-on-incon-degr "/usr/lib/drbd/notify-pri-on-incon-degr.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

pri-lost-after-sb "/usr/lib/drbd/notify-pri-lost-after-sb.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

local-io-error "/usr/lib/drbd/notify-io-error.sh; /usr/lib/drbd/notify-emergency-shutdown.sh; echo o > /proc/sysrq-trigger ; halt -f";

# fence-peer "/usr/lib/drbd/crm-fence-peer.sh";

# split-brain "/usr/lib/drbd/notify-split-brain.sh root";

# out-of-sync "/usr/lib/drbd/notify-out-of-sync.sh root";

# before-resync-target "/usr/lib/drbd/snapshot-resync-target-lvm.sh -p 15 -- -c 16k";

# after-resync-target /usr/lib/drbd/unsnapshot-resync-target-lvm.sh;

}

startup {

#wfc-timeout 120;

#degr-wfc-timeout 120;

}

disk {

on-io-error detach; # how disk I/O errors are handled: detach the backing device

#fencing resource-only;

}

net {

cram-hmac-alg "sha1"; # HMAC algorithm used to authenticate the peer

shared-secret "mydrbdlab";

}

syncer {

rate 500M; # limit on the resynchronization rate

}

}

vim /etc/drbd.d/web.res

resource web {

on node1.wuhf.com {

device /dev/drbd0;

disk /dev/sda5;

address 172.16.13.13:7789;

meta-disk internal;

}

on node2.wuhf.com {

device /dev/drbd0;

disk /dev/sda5;

address 172.16.13.14:7789;

meta-disk internal;

}

}

4. Start the service

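scp /etc/drbd.d/* node2:/etc/drbd.d # copy the configuration files to node2

drbdadm create-md web; ssh node2 'drbdadm create-md web' # initialize the DRBD metadata on both nodes (needed once, before the first start)

service drbd start; ssh node2 'service drbd start' # start the service on both nodes at the same time

drbd-overview # check the service status

drbdadm primary --force web # force the current node to become primary

drbd-overview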
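The state that drbd-overview summarizes is also exposed by the kernel module in /proc/drbd, where each device line carries the connection state (cs), the local/peer roles (ro) and the local/peer disk states (ds). After the forced promotion, the initial synchronization is finished once ds reads UpToDate/UpToDate. The helper below is a sketch for reading those fields from a script. It assumes the DRBD 8.4 layout of /proc/drbd, and the name drbd_field is hypothetical.

```shell
#!/bin/sh
# drbd_field (hypothetical helper): read /proc/drbd-style text on stdin and
# print the value of one status field (cs, ro or ds) for every device line.
drbd_field() {
    awk -v key="$1" '
        $1 ~ /^[0-9]+:$/ {                 # device lines start with "<minor>:"
            for (i = 2; i <= NF; i++) {
                n = index($i, ":")
                if (n > 0 && substr($i, 1, n - 1) == key)
                    print substr($i, n + 1)
            }
        }
    '
}

# Usage on a node:
#   drbd_field ro < /proc/drbd      # roles as local/peer
#   until [ "$(drbd_field ds < /proc/drbd)" = "UpToDate/UpToDate" ]; do sleep 5; done
```

With a single resource, as in this guide, each call prints exactly one value. With several resources it prints one line per device.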

5. Create and mount the file system

mke2fs -t ext4 -L DRBD /dev/drbd0

mkdir /mnt/drbd

mount /dev/drbd0 /mnt/drbd

6. Test

node1

touch /mnt/drbd/{a,b,c}

umount /mnt/drbd

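drbdadm secondary web # demote node1 to secondary

node2

mkdir /mnt/drbd

drbdadm primary web # the device can only be mounted on the primary, so promote first

mount /dev/drbd0 /mnt/drbd

drbd-overview

ls /mnt/drbd # if the files a, b and c are listed, replication works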
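The order of the manual handover above matters: the old primary unmounts before it is demoted, and the new primary is promoted before it mounts. The sketch below wraps the two halves in functions. The names run, drbd_release and drbd_takeover are hypothetical, and setting DRY_RUN=1 only prints the commands instead of running them.

```shell
#!/bin/sh
# run (hypothetical helper): execute a command, or only print it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Release a resource on the current primary: unmount first, then demote.
# Arguments: resource name, mount point.
drbd_release() {
    run umount "$2" && run drbdadm secondary "$1"
}

# Take over on the other node: promote first, then mount.
# Arguments: resource name, DRBD device, mount point.
drbd_takeover() {
    run drbdadm primary "$1" && run mount "$2" "$3"
}
```

With the names used in this guide, node1 would call drbd_release web /mnt/drbd and node2 would then call drbd_takeover web /dev/drbd0 /mnt/drbd. The && stops each sequence as soon as a step fails, so a busy mount point prevents the demotion.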

IV. Installing and Configuring corosync + pacemaker + crmsh

1. Install and configure corosync

yum install corosync pacemaker -y

rpm -ql corosync

cd /etc/corosync

cp corosync.conf.example corosync.conf

vim corosync.conf

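# compatibility option

compatibility: whitetank

totem {

# enable authentication

secauth: on

# thread count (0 disables threaded sending)

threads: 0

interface {

# ring number; a redundant second ring is usually not needed

ringnumber: 0

# network address to bind to

bindnetaddr: 172.16.0.0

# multicast address

mcastaddr: 239.165.17.13

mcastport: 5405

ttl: 1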

}

}

logging {

fileline: off

# do not send log messages to standard error

to_stderr: no

to_logfile: yes

logfile: /var/log/cluster/corosync.log

to_syslog: no

debug: off

# prefix log entries with a timestamp

timestamp: on

logger_subsys {

subsys: AMF

debug: off

}

}

# run pacemaker as a corosync plugin

service {

ver: 0

name: pacemaker

# use_mgmtd: yes

}

aisexec {

user: root

group: root

}

corosync-keygen # generates the key file authkey

scp -p authkey corosync.conf node2:/etc/corosync

service corosync start; ssh node2 'service corosync start'

ss -tunl # check that port 5405 is listening

tail -f /var/log/cluster/corosync.log

grep pcmk_startup /var/log/cluster/corosync.log # check that pacemaker started properly
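The bindnetaddr value in corosync.conf above is the network address of the cluster subnet, not the IP address of a node. The sketch below derives it from a node address and a prefix length. The helper name network_address is hypothetical, the /16 prefix is an assumption inferred from 172.16.0.0 (the original does not state the netmask), and the arithmetic relies on a shell with 64-bit integers, such as bash or dash on x86_64.

```shell
#!/bin/sh
# network_address (hypothetical helper): print the IPv4 network address for
# an address and a prefix length, e.g. for use as bindnetaddr.
# The body runs in a subshell so that IFS and the variables do not leak.
network_address() (
    ip=$1
    prefix=$2
    IFS=.
    set -- $ip                      # split the address into four octets
    addr=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
    net=$(( addr & mask ))
    printf '%d.%d.%d.%d\n' \
        $(( (net >> 24) & 255 )) $(( (net >> 16) & 255 )) \
        $(( (net >> 8) & 255 ))  $(( net & 255 ))
)

# Usage:
#   network_address 172.16.13.13 16
```

Both nodes of this guide give the same result for the same prefix, which is why one corosync.conf can be copied to node2 unchanged.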

2. Install crmsh

Prepare the installation packages:

crmsh-2.1-1.6.x86_64.rpm

pssh-2.3.1-2.el6.x86_64.rpm

yum --nogpgcheck install crmsh-2.1-1.6.x86_64.rpm pssh-2.3.1-2.el6.x86_64.rpm

crm status # show the cluster status

V. Installing MariaDB

1. node1

Installation package: mariadb-5.5.43-linux-x86_64.tar.gz

tar xf mariadb-5.5.43-linux-x86_64.tar.gz -C /usr/local

cd /usr/local/

ln -sv mariadb-5.5.43-linux-x86_64/ mysql

cd mysql/

cp support-files/my-large.cnf /etc/my.cnf

vim /etc/my.cnf # edit the MySQL configuration file; set the following in the [mysqld] section

thread_concurrency = 4

datadir = /mnt/drbd

innodb_file_per_table = on

groupadd -r -g 306 mysql # add the mysql group and user

useradd -r -u 306 -g 306 mysql

scp /etc/my.cnf node2:/etc/ # copy the configuration file to node2

vim /etc/drbd.d/web.res

service drbd start; ssh node2 'service drbd start' # start DRBD on both nodes

drbdadm primary web

drbd-overview

mount /dev/drbd0 /mnt/drbd # mount the DRBD device

./scripts/mysql_install_db --datadir=/mnt/drbd/ --user=mysql # initialize the database

cd /usr/local/mysql/

cp support-files/mysql.server /etc/rc.d/init.d/mysqld

vim /etc/profile.d/mysql.sh

export PATH=/usr/local/mysql/bin:$PATH # add the MySQL binaries to PATH

. /etc/profile.d/mysql.sh

service mysqld start

mysql # test the connection

chkconfig --add mysqld

chkconfig --list mysqld

chkconfig mysqld off # make sure mysqld does not start at boot

service mysqld stop

umount /mnt/drbd/

drbdadm secondary web

2. node2

Installation package: mariadb-5.5.43-linux-x86_64.tar.gz

tar xf mariadb-5.5.43-linux-x86_64.tar.gz -C /usr/local

cd /usr/local/

ln -sv mariadb-5.5.43-linux-x86_64/ mysql

service drbd start; ssh node1 'service drbd start'

drbdadm primary web

mount /dev/drbd0 /mnt/drbd

cd /usr/local/mysql/

cp support-files/mysql.server /etc/rc.d/init.d/mysqld

vim /etc/profile.d/mysql.sh

export PATH=/usr/local/mysql/bin:$PATH

. /etc/profile.d/mysql.sh

service mysqld start

mysql # test the connection

chkconfig --add mysqld

chkconfig mysqld off

service mysqld stop

umount /mnt/drbd/

drbdadm secondary web # demote to secondary, then stop the drbd service

service drbd stop
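Both nodes run chkconfig mysqld off because the cluster, not init, is meant to start mysqld. The sketch below checks that setting from a script by parsing the output of chkconfig --list. The helper name boot_enabled_levels is hypothetical, and it assumes the English "on"/"off" output of chkconfig on CentOS 6.

```shell
#!/bin/sh
# boot_enabled_levels (hypothetical helper): read `chkconfig --list <service>`
# output on stdin and print, for each line, the runlevels in which the
# service is still enabled, comma-separated (an empty line if none).
boot_enabled_levels() {
    awk '{
        out = ""
        for (i = 2; i <= NF; i++) {        # field 1 is the service name
            split($i, kv, ":")             # e.g. "3:on" -> kv[1]=3, kv[2]=on
            if (kv[2] == "on")
                out = out (out == "" ? "" : ",") kv[1]
        }
        print out
    }'
}

# Usage on a node:
#   chkconfig --list mysqld | boot_enabled_levels
```

An empty result means the service is disabled in every runlevel, which is the state wanted here for mysqld.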

VI. Defining the DRBD Resources for High Availability

crm configure show # the configuration is as follows

node node1.wuhf.com \
    attributes standby=off

node node2.wuhf.com \
    attributes standby=on

# file system resource

primitive mydata Filesystem \
    params device="/dev/drbd0" directory="/mnt/drbd" fstype=ext4 \
    op monitor interval=20s timeout=40s \
    op start timeout=60s interval=0 \
    op stop timeout=60s interval=0

# VIP resource

primitive myip IPaddr \
    params ip=172.16.13.209 \
    op monitor interval=10s timeout=20s

# MySQL service resource

primitive myserver lsb:mysqld \
    op monitor interval=20s timeout=20s

# DRBD resource

primitive mystor ocf:linbit:drbd \
    params drbd_resource=web \
    op monitor role=Master interval=10s timeout=20s \
    op monitor role=Slave interval=20s timeout=20s \
    op start timeout=240s interval=0 \
    op stop timeout=100s interval=0

# master/slave set: how the two nodes run the DRBD resource in primary/secondary mode

ms ms_mystor mystor \
    meta clone-max=2 clone-node-max=1 master-max=1 master-node-max=1 notify=true target-role=Started

# three colocation constraints

colocation mydata_with_ms_mystor_master inf: mydata ms_mystor:Master

colocation myip_with_ms_mystor_master inf: myip ms_mystor:Master

colocation myserver_with_mydata inf: myserver mydata

# ordering constraints

order mydata_after_ms_mystor_master Mandatory: ms_mystor:promote mydata:start

order myserver_after_mydata Mandatory: mydata:start myserver:start

order myserver_after_myip Mandatory: myip:start myserver:start

# cluster properties: STONITH is disabled and loss of quorum is ignored

property cib-bootstrap-options: \
    dc-version=1.1.11-97629de \
    cluster-infrastructure="classic openais (with plugin)" \
    expected-quorum-votes=2 \
    stonith-enabled=false \
    no-quorum-policy=ignore

VII. Testing

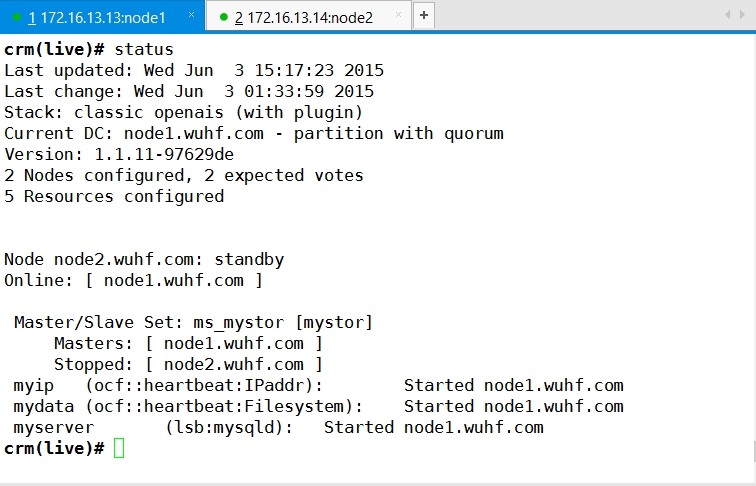

1. Check the current state of each node

node2 is currently in standby, and all resources are running on node1.

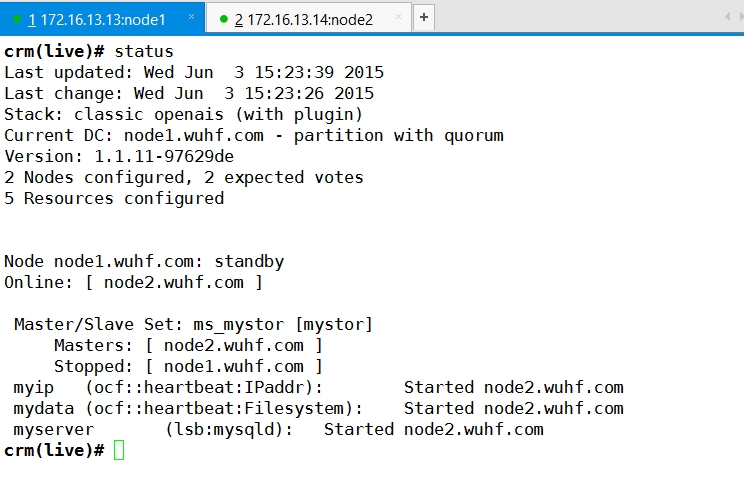

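2. Bring node2 online and put node1 into standby

On node2, run crm node online

On node1, run crm node standby

The cluster status shows that all resources have moved to node2 and are running normally, which confirms that the DRBD-backed, highly available MySQL setup works.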