    tf.nn.conv2d(
        input,
        filter,
        strides,
        padding,
        use_cudnn_on_gpu=True,
        data_format='NHWC',
        dilations=[1, 1, 1, 1],
        name=None
    )

Computes a 2-D convolution given a 4-D input tensor and a 4-D filter (kernel) tensor.

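Given an input tensor of shape [batch, in_height, in_width, in_channels] and a filter/kernel tensor of shape [filter_height, filter_width, in_channels, out_channels], this op performs the following steps:

1. Flattens the filter to a 2-D matrix of shape [filter_height * filter_width * in_channels, output_channels].
2. Extracts image patches from the input tensor to form a virtual tensor of shape [batch, out_height, out_width, filter_height * filter_width * in_channels].
3. For each patch, right-multiplies the filter matrix and the image patch vector.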
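The three steps above can be sketched in plain Python. This is only an illustrative sketch, not TensorFlow's implementation: it assumes a single image, stride 1 and no padding, and the function name `conv2d_im2col` and the toy data are made up for this example.

```python
def conv2d_im2col(x, w):
    """x: one image as nested lists [H][W][C]; w: filter as [FH][FW][C][K].

    Assumes stride 1 and no padding (hypothetical helper for illustration).
    """
    H, W, C = len(x), len(x[0]), len(x[0][0])
    FH, FW, K = len(w), len(w[0]), len(w[0][0][0])
    # Step 1: flatten the filter into a [FH * FW * C, K] matrix.
    w_mat = [w[di][dj][q]
             for di in range(FH) for dj in range(FW) for q in range(C)]
    out = []
    for i in range(H - FH + 1):
        row = []
        for j in range(W - FW + 1):
            # Step 2: flatten the patch at (i, j) in the same (di, dj, q) order.
            patch = [x[i + di][j + dj][q]
                     for di in range(FH) for dj in range(FW) for q in range(C)]
            # Step 3: patch vector times filter matrix gives K output values.
            row.append([sum(p * w_mat[r][k] for r, p in enumerate(patch))
                        for k in range(K)])
        out.append(row)
    return out


# Toy data: a 3x3 single-channel image and a 2x2 filter with two output channels.
x = [[[1], [2], [3]], [[4], [5], [6]], [[7], [8], [9]]]
# Output channel 0 sums the whole patch; channel 1 keeps only its top-left pixel.
w = [[[[1, 1]], [[1, 0]]], [[[1, 0]], [[1, 0]]]]
result = conv2d_im2col(x, w)
print(result)  # [[[12, 1], [16, 2]], [[24, 4], [28, 5]]]
```

Flattening the filter and the patch in the same order is what makes the matrix product equal to the convolution sum.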

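With the default NHWC format:

    output[b, i, j, k] =
        sum_{di, dj, q} input[b, strides[1] * i + di, strides[2] * j + dj, q] *
                        filter[di, dj, q, k]

strides[0] = strides[3] = 1 is required. In the most common case, where the horizontal and vertical strides are equal, strides = [1, stride, stride, 1].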
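The formula can be transcribed almost literally into Python. The sketch below is a reference for reading the indices, not a description of how TensorFlow computes the result: it assumes NHWC layout, no padding and no dilation, and `conv2d_direct` and the sample data are hypothetical.

```python
def conv2d_direct(inp, flt, strides):
    """inp: [B][H][W][C]; flt: [FH][FW][C][K]; strides: [1, sh, sw, 1].

    Direct evaluation of the summation, without padding (illustrative only).
    """
    assert strides[0] == 1 and strides[3] == 1, "batch/channel strides must be 1"
    sh, sw = strides[1], strides[2]
    B, H, W = len(inp), len(inp[0]), len(inp[0][0])
    FH, FW, C, K = len(flt), len(flt[0]), len(flt[0][0]), len(flt[0][0][0])
    out_h = (H - FH) // sh + 1
    out_w = (W - FW) // sw + 1
    return [[[[sum(inp[b][sh * i + di][sw * j + dj][q] * flt[di][dj][q][k]
                   for di in range(FH)
                   for dj in range(FW)
                   for q in range(C))
               for k in range(K)]
              for j in range(out_w)]
             for i in range(out_h)]
            for b in range(B)]


# Sample data: a 4x4 image holding 1..16 and a 2x2 filter of ones.
inp = [[[[r * 4 + c + 1] for c in range(4)] for r in range(4)]]
flt = [[[[1]], [[1]]], [[[1]], [[1]]]]
result = conv2d_direct(inp, flt, strides=[1, 2, 2, 1])
print(result)  # [[[[14], [22]], [[46], [54]]]]
```

With a stride of 2 in both spatial dimensions the 2x2 windows do not overlap, so each output is simply the sum of one quadrant of the image.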

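Args:

input: A Tensor. Must be one of the following types: half, bfloat16, float32, float64. A 4-D tensor. The order of its dimensions is interpreted according to the value of data_format.

filter: A Tensor. Must have the same type as input. A 4-D tensor of shape [filter_height, filter_width, in_channels, out_channels].

strides: A list of ints. A 1-D tensor of length 4. The stride of the sliding window for each dimension of input. The order of the dimensions is determined by the value of data_format.

padding: A string, either "SAME" or "VALID". The type of padding algorithm to use.

use_cudnn_on_gpu: An optional bool. Defaults to True.

data_format: An optional string, either "NHWC" or "NCHW". Defaults to "NHWC". Specifies the data format of the input and output data. With the default format "NHWC", the data is stored in the order [batch, height, width, channels]. Alternatively, the format can be "NCHW", in which case the data is stored in the order [batch, channels, height, width].

dilations: An optional list of ints. Defaults to [1, 1, 1, 1]. A 1-D tensor of length 4. The dilation factor for each dimension of input. If set to k > 1, there are k-1 skipped cells between adjacent filter elements on that dimension. The order of the dimensions is determined by the value of data_format (see above). The dilations in the batch and depth dimensions must be 1.

name: An optional name for the operation.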
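How strides, padding and dilations together determine the spatial size of the output can be summarized in a small helper. This is a sketch based on the usual output-size rules for "SAME" and "VALID" padding; the function name `conv_output_size` is an assumption of this example, not part of the TensorFlow API.

```python
import math


def conv_output_size(in_size, filter_size, stride, padding, dilation=1):
    """Output length along one spatial dimension (hypothetical helper)."""
    # A dilated filter spans (filter_size - 1) * dilation + 1 input cells.
    effective = (filter_size - 1) * dilation + 1
    if padding == "SAME":
        # Zero padding is added so that only the stride shrinks the output.
        return int(math.ceil(in_size / float(stride)))
    if padding == "VALID":
        # Only windows that fit entirely inside the input are used.
        return int(math.ceil((in_size - effective + 1) / float(stride)))
    raise ValueError("padding must be 'SAME' or 'VALID'")


print(conv_output_size(5, 3, 2, "VALID"))  # 2
print(conv_output_size(5, 3, 2, "SAME"))   # 3
print(conv_output_size(5, 3, 1, "VALID", dilation=2))  # 1
```

The first two calls correspond to a 5x5 input, a 3x3 filter and a stride of 2, the configuration used in the second example of this article.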

#!/usr/bin/env python2

# -*- coding: utf-8 -*-

"""

Created on Tue Oct 2 13:23:27 2018

@author: myhaspl

@email:[email protected]

tf.nn.conv2d

"""

import tensorflow as tf

g=tf.Graph()

with g.as_default():

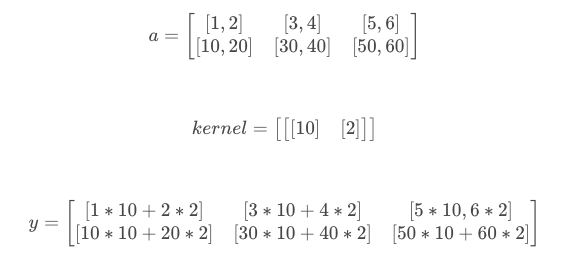

x=tf.constant([[

[[1.,2.],[3.,4.],[5.,6.]],

[[10.,20.],[30.,40.],[50.,60.]],

]])

kernel=tf.constant([[[[10.],[2.]]]])

y=tf.nn.conv2d(x,kernel,strides=[1,1,1,1],padding="SAME")

with tf.Session(graph=g) as sess:

print sess.run(x)

print sess.run(kernel)

print kernel.get_shape()

print x.get_shape()

print sess.run(y)[[[[ 1. 2.]

[ 3. 4.]

[ 5. 6.]]

[[10. 20.]

[30. 40.]

[50. 60.]]]]

[[[[10.]

[ 2.]]]]

(1, 1, 2, 1)

(1, 2, 3, 2)

[[[[ 14.]

[ 38.]

[ 62.]]

[[140.]

[380.]

[620.]]]]
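Because the filter in this example is 1x1, each output value is just a weighted sum of the two channels of one pixel. The short sketch below reproduces the printed result in plain Python; the variable names are chosen for this illustration only.

```python
# One weight per input channel, taken from the 1x1 filter [[[[10.], [2.]]]].
weights = [10.0, 2.0]
# The single image of the batch, as [height][width][channels].
image = [
    [[1, 2], [3, 4], [5, 6]],
    [[10, 20], [30, 40], [50, 60]],
]
# For every pixel: 10 * channel_0 + 2 * channel_1.
result = [[[sum(c * w for c, w in zip(pixel, weights))] for pixel in row]
          for row in image]
print(result)
# [[[14.0], [38.0], [62.0]], [[140.0], [380.0], [620.0]]]
```

For example, the first pixel gives 1 * 10 + 2 * 2 = 14, matching the first value of y.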

    import tensorflow as tf

    # 5x5 single-channel input, shape [batch=1, height=5, width=5, channels=1].
    a = tf.constant([1, 1, 1, 0, 0,
                     0, 1, 1, 1, 0,
                     0, 0, 1, 1, 1,
                     0, 0, 1, 1, 0,
                     0, 1, 1, 0, 0], dtype=tf.float32, shape=[1, 5, 5, 1])
    # 3x3 filter, shape [height=3, width=3, in_channels=1, out_channels=1].
    b = tf.constant([1, 0, 1, 0, 1, 0, 1, 0, 1], dtype=tf.float32, shape=[3, 3, 1, 1])
    c = tf.nn.conv2d(a, b, strides=[1, 2, 2, 1], padding='VALID')
    d = tf.nn.conv2d(a, b, strides=[1, 2, 2, 1], padding='SAME')
    with tf.Session() as sess:
        print("c shape:")
        print(c.shape)
        print("c value:")
        print(sess.run(c))
        print("d shape:")
        print(d.shape)
        print("d value:")
        print(sess.run(d))

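    c shape:
    (1, 2, 2, 1)
    c value:
    [[[[ 4.]
       [ 4.]]

      [[ 2.]
       [ 4.]]]]
    d shape:
    (1, 3, 3, 1)
    d value:
    [[[[ 2.]
       [ 3.]
       [ 1.]]

      [[ 1.]
       [ 4.]
       [ 3.]]

      [[ 0.]
       [ 2.]
       [ 1.]]]]

With padding set to VALID, no padding is added: only windows that fit entirely inside the input are used, and any leftover rows or columns are dropped.

With padding set to SAME, the input is padded with zeros so that the output size depends only on the stride.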
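The difference between the two modes can be made concrete with a plain-Python sketch that reproduces the values of c and d above. It assumes a single-channel image, equal strides in both directions, and the convention that any odd padding cell goes to the bottom/right; the helper names `same_padding` and `conv2d_single` are made up for this illustration.

```python
def same_padding(in_size, filter_size, stride):
    """Zero cells added (before, after) along one dimension for SAME."""
    out_size = -(-in_size // stride)  # ceil(in_size / stride)
    total = max((out_size - 1) * stride + filter_size - in_size, 0)
    before = total // 2  # the extra cell, if any, goes after the data
    return before, total - before


def conv2d_single(img, flt, stride, padding):
    """img: [H][W]; flt: [FH][FW]; one channel (illustrative only)."""
    H, W, FH, FW = len(img), len(img[0]), len(flt), len(flt[0])
    top = bottom = left = right = 0
    if padding == "SAME":
        top, bottom = same_padding(H, FH, stride)
        left, right = same_padding(W, FW, stride)
    width = W + left + right
    padded = ([[0] * width for _ in range(top)]
              + [[0] * left + list(r) + [0] * right for r in img]
              + [[0] * width for _ in range(bottom)])
    return [[sum(padded[i + di][j + dj] * flt[di][dj]
                 for di in range(FH) for dj in range(FW))
             for j in range(0, len(padded[0]) - FW + 1, stride)]
            for i in range(0, len(padded) - FH + 1, stride)]


a = [[1, 1, 1, 0, 0],
     [0, 1, 1, 1, 0],
     [0, 0, 1, 1, 1],
     [0, 0, 1, 1, 0],
     [0, 1, 1, 0, 0]]
b = [[1, 0, 1], [0, 1, 0], [1, 0, 1]]
c = conv2d_single(a, b, 2, "VALID")
d = conv2d_single(a, b, 2, "SAME")
print(c)  # [[4, 4], [2, 4]]
print(d)  # [[2, 3, 1], [1, 4, 3], [0, 2, 1]]
```

For the 5x5 input with a 3x3 filter and stride 2, SAME adds one row or column of zeros on every side, which is why d is 3x3 while c is 2x2.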

    #!/usr/bin/env python2
    # -*- coding: utf-8 -*-
    """
    Created on Tue Oct 2 13:23:27 2018
    @author: myhaspl
    @email:[email protected]
    tf.nn.conv2d
    """
    import tensorflow as tf

    g = tf.Graph()
    with g.as_default():
        x = tf.constant([
            [
                [[1.], [2.], [11.]],
                [[3.], [4.], [22.]],
                [[5.], [6.], [33.]]
            ],
            [
                [[10.], [20.], [44.]],
                [[30.], [40.], [55.]],
                [[50.], [60.], [66.]]
            ]
        ])  # shape 2*3*3*1
        kernel = tf.constant(
            [
                [[[2.]], [[3.]]]
            ]
        )  # shape 1*2*1*1
        y = tf.nn.conv2d(x, kernel, strides=[1, 1, 1, 1], padding="SAME")

    with tf.Session(graph=g) as sess:
        print(x.get_shape())
        print(kernel.get_shape())
        print(sess.run(y))
        print(y.get_shape())

With padding="SAME" and a 1 x 2 filter, one column of zeros is appended on the right of each row, so the first row of the first image yields:

$1 \times 2 + 2 \times 3 = 8$

$2 \times 2 + 11 \times 3 = 37$

$11 \times 2 + 0 \times 3 = 22$

    (2, 3, 3, 1)
    (1, 2, 1, 1)
    [[[[  8.]
       [ 37.]
       [ 22.]]

      [[ 18.]
       [ 74.]
       [ 44.]]

      [[ 28.]
       [111.]
       [ 66.]]]


     [[[ 80.]
       [172.]
       [ 88.]]

      [[180.]
       [245.]
       [110.]]

      [[280.]
       [318.]
       [132.]]]]
    (2, 3, 3, 1)
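The per-row arithmetic behind this output can be checked with a few lines of plain Python. The sketch assumes stride 1 and SAME padding, with any odd padding cell placed on the right; `conv_row_same` is a hypothetical helper name.

```python
def conv_row_same(row, kernel):
    """Slide a 1 x len(kernel) filter over one row with SAME padding, stride 1."""
    total = len(kernel) - 1      # zeros needed to keep the row length
    left = total // 2            # the odd cell, if any, goes on the right
    right = total - left
    padded = [0.0] * left + list(row) + [0.0] * right
    return [sum(padded[j + d] * kernel[d] for d in range(len(kernel)))
            for j in range(len(row))]


kernel = [2.0, 3.0]
first = conv_row_same([1.0, 2.0, 11.0], kernel)
fourth = conv_row_same([10.0, 20.0, 44.0], kernel)
print(first)   # [8.0, 37.0, 22.0]
print(fourth)  # [80.0, 172.0, 88.0]
```

The two rows printed here match the first row of each image in the output above.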