Contents (this part will be organized and expanded later; discussion is welcome):

1. Basic LVM creation and management

2. LVM snapshots

3. Combining LVM with RAID: LVM on top of RAID

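Creating LVM:

Description:

LVM (Logical Volume Manager) is a mechanism for managing disk partitions in Linux. It is a logical layer built on top of hard disks and partitions, and it makes partition management more flexible.

LVM makes disk partitions much easier to manage. Several disks of different sizes and types can be combined into a single volume group, and logical volumes can then be created freely within that group. This avoids the hassle of managing many disks with different specifications. It also means that growing or shrinking a logical volume is no longer limited by the size of any single disk. In addition, LVM's snapshot feature offers a convenient and reliable way to make physical backups of data.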

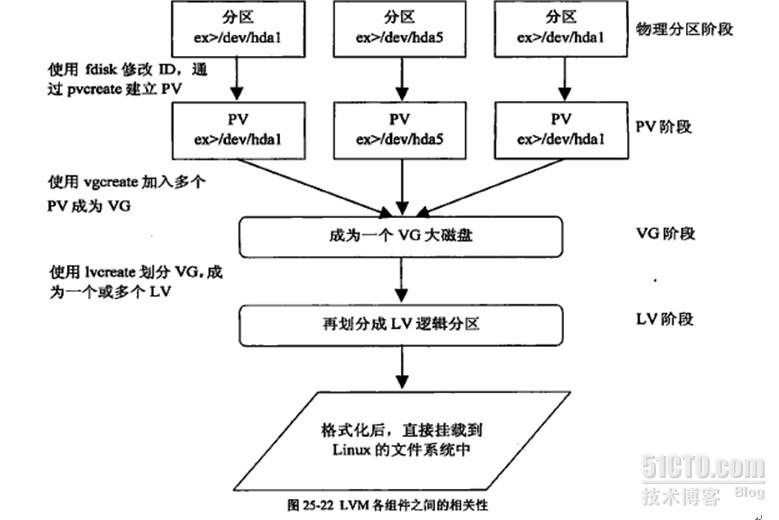

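Steps to create LVM (as shown in the figure):

1. Create partitions on the physical devices.

2. Use fdisk to set the partition type ID to 8e (Linux LVM). Then initialize the partitions with pvcreate so that each one becomes a PV (Physical Volume).

3. Use vgcreate to add several PVs to a VG (Volume Group). The VG now behaves like one large disk. Its space is divided into fixed-size PEs (Physical Extents), which are LVM's unit of allocation.

4. Carve LVs (Logical Volumes) out of the VG. Once an LV is formatted, it can be mounted and used.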
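The four steps above can be sketched as a dry-run shell function. This is an illustration under stated assumptions, not a tool:

- `lvm_create_plan` is a hypothetical helper that only prints the commands it would run, so it needs no root and touches no disk.
- It assumes step 1 (fdisk, type 8e) is already done.
- The VG/LV names, size and mount point are placeholders taken from the example that follows.

```shell
# Hypothetical dry-run helper: PRINTS the LVM creation commands, runs nothing.
# Args: VG name, LV name, LV size, mount point, then the partitions
# (assumed to be already set to type 8e with fdisk).
lvm_create_plan() {
    local vg="$1" lv="$2" size="$3" mnt="$4"
    shift 4                               # remaining args are the partitions
    printf '%s\n' \
        "pvcreate $*" \
        "vgcreate $vg $*" \
        "lvcreate -L $size -n $lv $vg" \
        "mkfs -t ext3 /dev/$vg/$lv" \
        "mount /dev/$vg/$lv $mnt"
}

lvm_create_plan myvg data 256M /data /dev/sdb1 /dev/sdc1 /dev/sdd1
```

Printing instead of executing keeps the sketch safe to try. Copy the printed lines only after checking the device names.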

Command tools available at each stage (for detailed options, see the man pages):

| Stage | Display | Create | Remove | Extend | Reduce |
|---|---|---|---|---|---|
| PV | pvdisplay | pvcreate | pvremove | - | - |
| VG | vgdisplay | vgcreate | vgremove | vgextend | vgreduce |
| LV | lvdisplay | lvcreate | lvremove | lvextend | lvreduce |

Example:

1. Create the PVs (the partition table below shows the three partitions already set to type 8e):

- Device Boot Start End Blocks Id System

- /dev/sdb1 1 609 4891761 8e Linux LVM

- /dev/sdc1 1 609 4891761 8e Linux LVM

- /dev/sdd1 1 609 4891761 8e Linux LVM

- [root@bogon ~]# pvcreate /dev/sd[bcd]1

- Physical volume "/dev/sdb1" successfully created

- Physical volume "/dev/sdc1" successfully created

- Physical volume "/dev/sdd1" successfully created

View the PV information:

- [root@bogon ~]# pvdisplay

- --- Physical volume ---

- PV Name /dev/sda2

- VG Name vol0

- PV Size 40.00 GB / not usable 2.61 MB

- Allocatable yes

- PE Size (KByte) 32768

- Total PE 1280

- Free PE 281

- Allocated PE 999

- PV UUID GxfWc2-hzKw-tP1E-8cSU-kkqY-z15Z-11Gacd

- "/dev/sdb1" is a new physical volume of "4.67 GB"

- --- NEW Physical volume ---

- PV Name /dev/sdb1

- VG Name

- PV Size 4.67 GB

- Allocatable NO

- PE Size (KByte) 0

- Total PE 0

- Free PE 0

- Allocated PE 0

- PV UUID 1rrc9i-05Om-Wzd6-dM9G-bo08-2oJj-WjRjLg

- "/dev/sdc1" is a new physical volume of "4.67 GB"

- --- NEW Physical volume ---

- PV Name /dev/sdc1

- VG Name

- PV Size 4.67 GB

- Allocatable NO

- PE Size (KByte) 0

- Total PE 0

- Free PE 0

- Allocated PE 0

- PV UUID RCGft6-l7tj-vuBX-bnds-xbLn-PE32-mCSeE8

- "/dev/sdd1" is a new physical volume of "4.67 GB"

- --- NEW Physical volume ---

- PV Name /dev/sdd1

- VG Name

- PV Size 4.67 GB

- Allocatable NO

- PE Size (KByte) 0

- Total PE 0

- Free PE 0

- Allocated PE 0

- PV UUID SLiAAp-43zX-6BC8-wVzP-6vQu-uyYF-ugdWbD

2. Create the VG

- [root@bogon ~]# vgcreate myvg -s 16M /dev/sd[bcd]1

- Volume group "myvg" successfully created

- ## -s sets the PE size when the VG is created; the default is 4 MB.

- View the VG status on the system:

- [root@bogon ~]# vgdisplay

- --- Volume group ---

- VG Name myvg

- System ID

- Format lvm2

- Metadata Areas 3

- Metadata Sequence No 1

- VG Access read/write

- VG Status resizable

- MAX LV 0

- Cur LV 0

- Open LV 0

- Max PV 0

- Cur PV 3

- Act PV 3

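- VG Size 13.97 GB ## total VG size

- PE Size 16.00 MB ## PE size (set to 16 MB by -s here; the default is 4 MB)

- Total PE 894 ## total number of PEs

- Alloc PE / Size 0 / 0 ## number of PEs already allocated

- Free PE / Size 894 / 13.97 GB ## number of free PEs and their total size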

- VG UUID RJC6Z1-N2Jx-2Zjz-26m6-LLoB-PcWQ-FXx3lV
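The PE figures above can be checked with a little arithmetic. This is a rough sketch under a simplifying assumption: `pe_count` is a hypothetical helper that divides a PV's size in KiB (as in the fdisk `Blocks` column) by the PE size. It ignores the small metadata area that LVM reserves on each PV.

With the 16 MB PEs chosen via `-s 16M`:

- Each 4891761 KiB partition yields 298 whole PEs.
- Three such PVs give the 894 PEs that vgdisplay reports.
- The leftover of roughly 9 MiB per PV is in the same range as the "not usable" figure that pvdisplay shows.

```shell
# Hypothetical helper: whole PEs that fit in a PV of the given size.
# Simplification: ignores the LVM metadata area at the start of each PV.
pe_count() {
    local pv_kib="$1" pe_mib="$2"
    echo $(( pv_kib / (pe_mib * 1024) ))   # integer division keeps whole PEs only
}

per_pv=$(pe_count 4891761 16)   # one 4.67 GB partition from the fdisk listing
total=$(( per_pv * 3 ))         # three such PVs in myvg
# prints: PEs per PV: 298, total PE: 894, VG size: 14304 MiB
echo "PEs per PV: $per_pv, total PE: $total, VG size: $(( total * 16 )) MiB"
```

14304 MiB is 13.97 GiB, which matches the `VG Size` line.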

3. Carve LVs out of the VG:

- [root@bogon ~]# lvcreate -L 256M -n data myvg

- Logical volume "data" created

- ## -L sets the LV size

- ## -n sets the LV name

- [root@bogon ~]# lvcreate -l 20 -n test myvg

- Logical volume "test" created

- ## -l sets the LV size as a number of PEs; the size above is 20 × 16 MB = 320 MB

- [root@bogon ~]# lvdisplay

- --- Logical volume ---

- LV Name /dev/myvg/data

- VG Name myvg

- LV UUID d0SYy1-DQ9T-Sj0R-uPeD-xn0z-raDU-g9lLeK

- LV Write Access read/write

- LV Status available

- # open 0

- LV Size 256.00 MB

- Current LE 16

- Segments 1

- Allocation inherit

- Read ahead sectors auto

- - currently set to 256

- Block device 253:2

- --- Logical volume ---

- LV Name /dev/myvg/test

- VG Name myvg

- LV UUID os4UiH-5QAG-HqOJ-DoNT-mVeT-oYyy-s1xArV

- LV Write Access read/write

- LV Status available

- # open 0

- LV Size 320.00 MB

- Current LE 20

- Segments 1

- Allocation inherit

- Read ahead sectors auto

- - currently set to 256

- Block device 253:3
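The `-L` and `-l` forms describe the same thing in different units. The sketch below assumes the 16 MB PE size of `myvg`, and both helpers are hypothetical:

- `extents_for_size` converts a size to extents, rounding up because LVM allocates whole PEs.
- `size_for_extents` converts extents back to a size.

Together they reproduce the `Current LE 16` and `LV Size 320.00 MB` values above.

```shell
# Assumption: the 16 MiB PE size used for myvg in this example.
PE_MIB=16

# Hypothetical helper: size in MiB -> number of LEs, rounded up to whole PEs.
extents_for_size() {
    echo $(( ($1 + PE_MIB - 1) / PE_MIB ))   # ceiling division
}

# Hypothetical helper: number of LEs -> size in MiB.
size_for_extents() {
    echo $(( $1 * PE_MIB ))
}

printf '%s\n' "-L 256M -> $(extents_for_size 256) LE"   # the "data" LV
printf '%s\n' "-l 20   -> $(size_for_extents 20) MiB"   # the "test" LV
```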

4. Now the LV can be formatted and mounted:

- [root@bogon ~]# mkfs -t ext3 -b 2048 -L DATA /dev/myvg/data

- [root@bogon ~]# mount /dev/myvg/data /data/

- ## Copy some files in, to test online extending and shrinking later:

- [root@bogon data]# cp /etc/* /data/

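5. Extend the LV (2.6 kernel + ext3 filesystem):

lvextend grows a logical volume.

resize2fs can grow a filesystem either online (while mounted) or offline.

(My system runs kernel 2.6.18 with ext3. According to the man page, online growing is only supported with a 2.6 kernel and an ext3 filesystem.)

First, extend the LV itself: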

- [root@bogon data]# lvextend -L 500M /dev/myvg/data

- Rounding up size to full physical extent 512.00 MB

- Extending logical volume data to 512.00 MB

- Logical volume data successfully resized

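- ## -L 500M: extend the LV to 500 MB; the size is rounded up to a whole number of PEs (512 MB here, as the output above shows);

- ## -L +500M: grow the LV by 500 MB on top of its current size;

- ## -l [+]50: same as above, but counted in PEs;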
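The difference between an absolute and a relative `-L` can be sketched as follows. `lvextend_target_mib` is a hypothetical helper, not part of LVM, with two limitations:

- It only understands sizes with an `M` suffix.
- It does not reject a target smaller than the current size, which the real lvextend would refuse.

For the 256 MB `data` LV with 16 MB PEs, it gives 512 MB for `-L 500M`. This matches the "Rounding up size to full physical extent 512.00 MB" message above.

```shell
# Hypothetical helper: target LV size (MiB) for "lvextend -L ARG".
# Args: current size in MiB, ARG such as 500M or +500M, PE size in MiB.
lvextend_target_mib() {
    local current_mib="$1" arg="${2%[Mm]}" pe_mib="$3" want
    case "$arg" in
        +*) want=$(( current_mib + ${arg#+} )) ;;  # relative: grow BY this much
        *)  want=$arg ;;                           # absolute: grow TO this size
    esac
    echo $(( (want + pe_mib - 1) / pe_mib * pe_mib ))  # round up to whole PEs
}

lvextend_target_mib 256 500M 16    # absolute, as in the example above
lvextend_target_mib 256 +500M 16   # relative
```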

Then grow the filesystem:

- [root@bogon data]# resize2fs -p /dev/myvg/data

- resize2fs 1.39 (29-May-2006)

- Filesystem at /dev/myvg/data is mounted on /data; on-line resizing required

- Performing an on-line resize of /dev/myvg/data to 262144 (2k) blocks.

- The filesystem on /dev/myvg/data is now 262144 blocks long.

- ## As shown above, resize2fs detected that the filesystem was mounted and resized it online;

- Check the status:

- [root@bogon ~]# lvdisplay

- --- Logical volume ---

- LV Name /dev/myvg/data

- VG Name myvg

- LV UUID d0SYy1-DQ9T-Sj0R-uPeD-xn0z-raDU-g9lLeK

- LV Write Access read/write

- LV Status available

- # open 1

- LV Size 512.00 MB

- Current LE 32

- Segments 1

- Allocation inherit

- Read ahead sectors auto

- - currently set to 256

- Block device 253:2

- ## The files in the mount directory should still be intact;
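The block counts printed by resize2fs follow directly from the LV size and the 2 KiB block size chosen with `mkfs -b 2048`. Here is a tiny sketch with a hypothetical `fs_blocks` helper:

```shell
# Hypothetical helper: filesystem blocks = LV size / block size.
# Args: LV size in MiB, filesystem block size in KiB.
fs_blocks() {
    echo $(( $1 * 1024 / $2 ))
}

fs_blocks 512 2   # the grown 512 MB LV: 262144 (2k) blocks
fs_blocks 256 2   # the shrunk 256 MB LV: 131072 (2k) blocks
```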

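6. Shrink the LV:

Shrinking is a dangerous operation. With ext3 it must be done offline. The filesystem must also be force-checked for errors first, so that the shrink does not corrupt data;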

- [root@bogon ~]# umount /dev/myvg/data

- [root@bogon ~]# e2fsck -f /dev/myvg/data

- e2fsck 1.39 (29-May-2006)

- Pass 1: Checking inodes, blocks, and sizes

- Pass 2: Checking directory structure

- Pass 3: Checking directory connectivity

- Pass 4: Checking reference counts

- Pass 5: Checking group summary information

- DATA: 13/286720 files (7.7% non-contiguous), 23141/573440 blocks

First shrink the filesystem:

- [root@bogon ~]# resize2fs /dev/myvg/data 256M

- resize2fs 1.39 (29-May-2006)

- Resizing the filesystem on /dev/myvg/data to 131072 (2k) blocks.

- The filesystem on /dev/myvg/data is now 131072 blocks long.

Then shrink the LV:

- [root@bogon ~]# lvreduce -L 256M /dev/myvg/data

- WARNING: Reducing active logical volume to 256.00 MB

- THIS MAY DESTROY YOUR DATA (filesystem etc.)

- Do you really want to reduce data? [y/n]: y

- Reducing logical volume data to 256.00 MB

- Logical volume data successfully resized

Check the status and remount:

- [root@bogon ~]# lvdisplay

- --- Logical volume ---

- LV Name /dev/myvg/data

- VG Name myvg

- LV UUID d0SYy1-DQ9T-Sj0R-uPeD-xn0z-raDU-g9lLeK

- LV Write Access read/write

- LV Status available

- # open 0

- LV Size 256.00 MB

- Current LE 16

- Segments 1

- Allocation inherit

- Read ahead sectors auto

- - currently set to 256

- Block device 253:2

- Remount:

- [root@bogon ~]# mount /dev/myvg/data /data/

- [root@bogon data]# df /data/

- Filesystem 1K-blocks Used Available Use% Mounted on

- /dev/mapper/myvg-data

- 253900 9508 236528 4% /data
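Order matters here: filesystem first, LV second. That makes the shrink procedure above a good candidate for a dry-run checklist.

- `shrink_plan` is a hypothetical helper that only prints the commands.
- The device, size and mount point come from the example.
- It uses the same size for resize2fs and lvreduce, as the example does.

A common extra precaution is to shrink the filesystem slightly below the target, reduce the LV, and then run resize2fs again without a size so that it fills the LV.

```shell
# Hypothetical dry-run helper: PRINTS the offline shrink steps in safe order
# (check, shrink filesystem, then shrink LV). Nothing is executed.
# Args: LV device, new size (e.g. 256M), mount point.
shrink_plan() {
    local dev="$1" size="$2" mnt="$3"
    printf '%s\n' \
        "umount $dev" \
        "e2fsck -f $dev" \
        "resize2fs $dev $size" \
        "lvreduce -L $size $dev" \
        "mount $dev $mnt"
}

shrink_plan /dev/myvg/data 256M /data
```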

7. Extend the VG by adding a PV:

- [root@bogon data]# pvcreate /dev/sdc2

- Physical volume "/dev/sdc2" successfully created

- [root@bogon data]# vgextend myvg /dev/sdc2

- Volume group "myvg" successfully extended

- [root@bogon data]# pvdisplay

- --- Physical volume ---

- PV Name /dev/sdc2

- VG Name myvg

- PV Size 4.67 GB / not usable 9.14 MB

- Allocatable yes

- PE Size (KByte) 16384

- Total PE 298

- Free PE 298

- Allocated PE 0

- PV UUID hrveTu-2JUH-aSgT-GKAJ-VVv2-Hit0-PyoOOr

8. Shrink the VG by removing a PV:

To remove a PV, first migrate its data to the other PVs, then remove it;

Move the data off the PV:

- [root@bogon data]# pvmove /dev/sdc2

- No data to move for myvg

- ## If /dev/sdc2 held data, pvmove would take some time to migrate it to the other PVs;

- ## Here it reports that the partition holds no data;

Remove the PV:

- [root@bogon data]# vgreduce myvg /dev/sdc2

- Removed "/dev/sdc2" from volume group "myvg"

- ## If a disk in the LVM setup seems to be misbehaving, use this method to move the data off the suspect disk and then remove it;
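Before `vgreduce`, the PV should have no allocated extents. The sketch below assumes the field layout of the `pvdisplay` output shown earlier. The hypothetical `needs_pvmove` filter reads pvdisplay-style text on stdin and reports whether pvmove is needed first.

```shell
# Hypothetical filter: inspects the "Allocated PE" field of pvdisplay-style
# text on stdin and prints whether the PV can be dropped right away.
needs_pvmove() {
    awk '/Allocated PE/ {
        if ($3 > 0) print "pvmove first"; else print "safe to vgreduce"
        exit
    }'
}

# Real use (needs root):  pvdisplay /dev/sdc2 | needs_pvmove
printf 'PV Name /dev/sdc2\nAllocated PE 0\n' | needs_pvmove
```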

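LVM snapshots:

Description:

On a very busy server, backing up a large amount of data normally means stopping many services. Otherwise the backed-up data can easily end up inconsistent, which makes the backup useless. This is where snapshots help;

How it works:

A logical volume snapshot is essentially just another path to the original data. It preserves the state of the data at the moment the snapshot was taken. After the snapshot exists, the old contents of any block are copied into the snapshot area before that block of the original data is modified. As a result, the data seen through the snapshot always reflects the moment the snapshot was made.

The snapshot's size is limited, however. Before creating one, estimate how much data will change during the time the snapshot is needed. If more data changes than the snapshot can hold, it overflows and the snapshot becomes invalid;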

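The common advice that snapshots are lost when LVM is unloaded or the system reboots dates from LVM1. LVM2 snapshots (the VGs here report "Format lvm2") are recorded in the VG metadata and survive a reboot. They should still be removed promptly after the backup, because a full snapshot becomes invalid.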
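The sizing advice above can be turned into a back-of-the-envelope check. This is only a rough model, and the model itself is an assumption. It treats each MiB of changed origin data as one MiB of snapshot space. In reality copy-on-write works in chunks and also stores some metadata, so real usage can be higher.

`snapshot_fits` is a hypothetical helper that takes three arguments:

- the expected change rate (MiB per hour);
- how long the snapshot must live (hours);
- the snapshot size (MiB).

```shell
# Hypothetical helper: succeeds if the estimated amount of changed data stays
# below the snapshot size. Rough model: 1 MiB changed ~= 1 MiB of COW space.
# Args: change rate in MiB/hour, lifetime in hours, snapshot size in MiB.
snapshot_fits() {
    local rate_mib_per_hour="$1" hours="$2" snap_mib="$3"
    [ $(( rate_mib_per_hour * hours )) -lt "$snap_mib" ]
}

if snapshot_fits 50 4 512; then
    echo "a 512 MiB snapshot should cover 4 hours at 50 MiB/hour"
else
    echo "snapshot too small, create a larger one"
fi
```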

Create a snapshot:

- [root@bogon ~]# lvcreate -L 500M -p r -s -n datasnap /dev/myvg/data

- Rounding up size to full physical extent 512.00 MB

- Logical volume "datasnap" created

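- ## -L / -l: set the size

- ## -p: permission; sets the snapshot's access mode, default rw; r means read-only

- ## -s: tells lvcreate to create a snapshot

- ## -n: sets the snapshot name

- Mount the snapshot at the chosen location: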

- [root@bogon ~]# mount /dev/myvg/datasnap /backup/

- mount: block device /dev/myvg/datasnap is write-protected, mounting read-only

- Then back up the files from the snapshot, and remove the snapshot promptly afterwards:

- [root@bogon ~]# ls /backup/

- inittab lost+found
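The snapshot backup workflow can be summarized as a dry-run sketch. Note the following:

- `snapshot_backup_plan` is hypothetical.
- The archive path is an invented example.
- The tar invocation is just one way to copy the files out.
- `lvremove -f` removes the snapshot without asking for confirmation.

```shell
# Hypothetical dry-run helper: PRINTS a snapshot-based backup sequence.
# Args: origin LV, snapshot name, snapshot size, mount point, archive path.
snapshot_backup_plan() {
    local origin="$1" snap="$2" size="$3" mnt="$4" archive="$5"
    local vg_dir="${origin%/*}"          # e.g. /dev/myvg
    printf '%s\n' \
        "lvcreate -L $size -p r -s -n $snap $origin" \
        "mount $vg_dir/$snap $mnt" \
        "tar -czf $archive -C $mnt ." \
        "umount $mnt" \
        "lvremove -f $vg_dir/$snap"
}

snapshot_backup_plan /dev/myvg/data datasnap 500M /backup /root/data-backup.tar.gz
```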

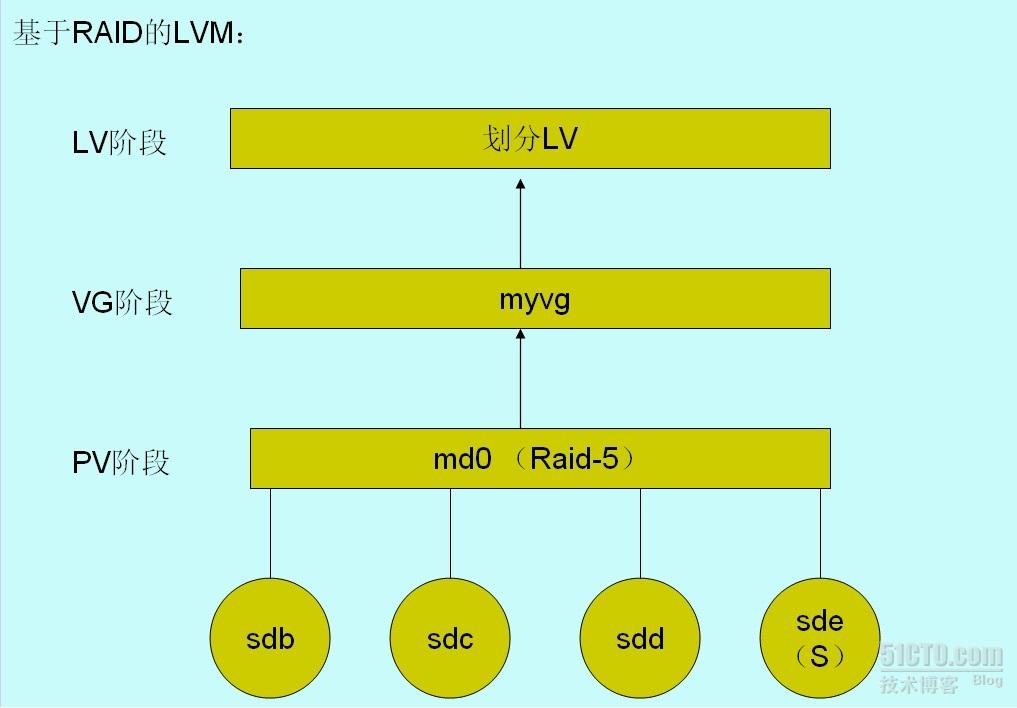

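Building LVM on top of RAID:

Description:

With LVM on RAID, the lower RAID layer provides data redundancy or better read/write performance. The upper LVM layer adds flexible disk management on top;

As shown in the figure:

Setup steps:

1. Create partitions of type Linux RAID (fd):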

- Device Boot Start End Blocks Id System

- /dev/sdb1 1 62 497983+ fd Linux raid autodetect

- /dev/sdc1 1 62 497983+ fd Linux raid autodetect

- /dev/sdd1 1 62 497983+ fd Linux raid autodetect

- /dev/sde1 1 62 497983+ fd Linux raid autodetect

2. Create a RAID-5 array:

- [root@nod1 ~]# mdadm -C /dev/md0 -a yes -l 5 -n 3 -x 1 /dev/sd{b,c,d,e}1

- mdadm: array /dev/md0 started.

- # The RAID-5 array has one spare disk and three active disks.

- # Check the status: it matches what was requested, and the initial build is in progress:

- [root@nod1 ~]# cat /proc/mdstat

- Personalities : [raid6] [raid5] [raid4]

- md0 : active raid5 sdd1[4] sde1[3](S) sdc1[1] sdb1[0]

- 995712 blocks level 5, 64k chunk, algorithm 2 [3/2] [UU_]

- [=======>.............] recovery = 36.9% (184580/497856) finish=0.2min speed=18458K/sec

- unused devices: <none>

- # View the RAID details:

- [root@nod1 ~]# mdadm -D /dev/md0

- /dev/md0:

- Version : 0.90

- Creation Time : Wed Apr 6 04:27:46 2011

- Raid Level : raid5

- Array Size : 995712 (972.54 MiB 1019.61 MB)

- Used Dev Size : 497856 (486.27 MiB 509.80 MB)

- Raid Devices : 3

- Total Devices : 4

- Preferred Minor : 0

- Persistence : Superblock is persistent

- Update Time : Wed Apr 6 04:36:08 2011

- State : clean

- Active Devices : 3

- Working Devices : 4

- Failed Devices : 0

- Spare Devices : 1

- Layout : left-symmetric

- Chunk Size : 64K

- UUID : 5663fc8e:68f539ee:3a4040d6:ccdac92a

- Events : 0.4

- Number Major Minor RaidDevice State

- 0 8 17 0 active sync /dev/sdb1

- 1 8 33 1 active sync /dev/sdc1

- 2 8 49 2 active sync /dev/sdd1

- 3 8 65 - spare /dev/sde1
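The array size reported by mdadm can be checked by hand. In RAID-5, one member's worth of capacity holds parity. The spare (`-x 1`) adds nothing until it replaces a failed disk. Here is a minimal sketch with a hypothetical `raid5_capacity` helper, using mdadm's 1 KiB block counts:

```shell
# Hypothetical helper: usable RAID-5 capacity in the member's block units.
# Args: number of ACTIVE members (spares excluded), blocks per member.
raid5_capacity() {
    local active="$1" member_blocks="$2"
    echo $(( (active - 1) * member_blocks ))   # one member's worth is parity
}

raid5_capacity 3 497856   # matches "Array Size : 995712" above
```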

3. Create the RAID configuration file:

- [root@nod1 ~]# mdadm -Ds > /etc/mdadm.conf

- [root@nod1 ~]# cat /etc/mdadm.conf

- ARRAY /dev/md0 level=raid5 num-devices=3 metadata=0.90 spares=1 UUID=5663fc8e:68f539ee:3a4040d6:ccdac92a

4. Create LVM on top of the new RAID device:

- # Initialize md0 as a PV (physical volume):

- [root@nod1 ~]# pvcreate /dev/md0

- Physical volume "/dev/md0" successfully created

- # View the physical volume:

- [root@nod1 ~]# pvdisplay

- "/dev/md0" is a new physical volume of "972.38 MB"

- --- NEW Physical volume ---

- PV Name /dev/md0

- VG Name # empty, because this PV does not belong to any VG yet;

- PV Size 972.38 MB

- Allocatable NO

- PE Size (KByte) 0

- Total PE 0

- Free PE 0

- Allocated PE 0

- PV UUID PUb3uj-ObES-TXsM-2oMS-exps-LPXP-jD218u

- # Create the VG

- [root@nod1 ~]# vgcreate myvg -s 32M /dev/md0

- Volume group "myvg" successfully created

- # View the VG status:

- [root@nod1 ~]# vgdisplay

- --- Volume group ---

- VG Name myvg

- System ID

- Format lvm2

- Metadata Areas 1

- Metadata Sequence No 1

- VG Access read/write

- VG Status resizable

- MAX LV 0

- Cur LV 0

- Open LV 0

- Max PV 0

- Cur PV 1

- Act PV 1

- VG Size 960.00 MB

- PE Size 32.00 MB

- Total PE 30

- Alloc PE / Size 0 / 0

- Free PE / Size 30 / 960.00 MB

- VG UUID 9NKEWK-7jrv-zC2x-59xg-10Ai-qA1L-cfHXDj

- # Create the LV:

- [root@nod1 ~]# lvcreate -L 500M -n mydata myvg

- Rounding up size to full physical extent 512.00 MB

- Logical volume "mydata" created

- # View the LV status:

- [root@nod1 ~]# lvdisplay

- --- Logical volume ---

- LV Name /dev/myvg/mydata

- VG Name myvg

- LV UUID KQQUJq-FU2C-E7lI-QJUp-xeVd-3OpA-TMgI1D

- LV Write Access read/write

- LV Status available

- # open 0

- LV Size 512.00 MB

- Current LE 16

- Segments 1

- Allocation inherit

- Read ahead sectors auto

- - currently set to 512

- Block device 253:2
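The whole RAID-then-LVM sequence above can be collected into a dry-run sketch. `raid_lvm_plan` is a hypothetical helper that prints the commands instead of running them. It assumes exactly one spare, listed last, and reuses the 32 MB PE size from the example.

```shell
# Hypothetical dry-run helper: PRINTS the RAID-5 + LVM setup sequence.
# Args: md device, VG name, LV name, LV size, then the member partitions
# (assumption: exactly one spare, given as the LAST partition).
raid_lvm_plan() {
    local md="$1" vg="$2" lv="$3" size="$4"
    shift 4
    printf '%s\n' \
        "mdadm -C $md -a yes -l 5 -n $(( $# - 1 )) -x 1 $*" \
        "mdadm -Ds > /etc/mdadm.conf" \
        "pvcreate $md" \
        "vgcreate $vg -s 32M $md" \
        "lvcreate -L $size -n $lv $vg"
}

raid_lvm_plan /dev/md0 myvg mydata 500M /dev/sdb1 /dev/sdc1 /dev/sdd1 /dev/sde1
```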

Open questions (in a lab environment these are hard to judge; advice from experienced readers is welcome):

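Once LVM sits on top of the RAID, the array can no longer be stopped manually while the VG on it is active. Deactivating the VG first (`vgchange -an myvg`) should release the device so that `mdadm -S /dev/md0` can stop it.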

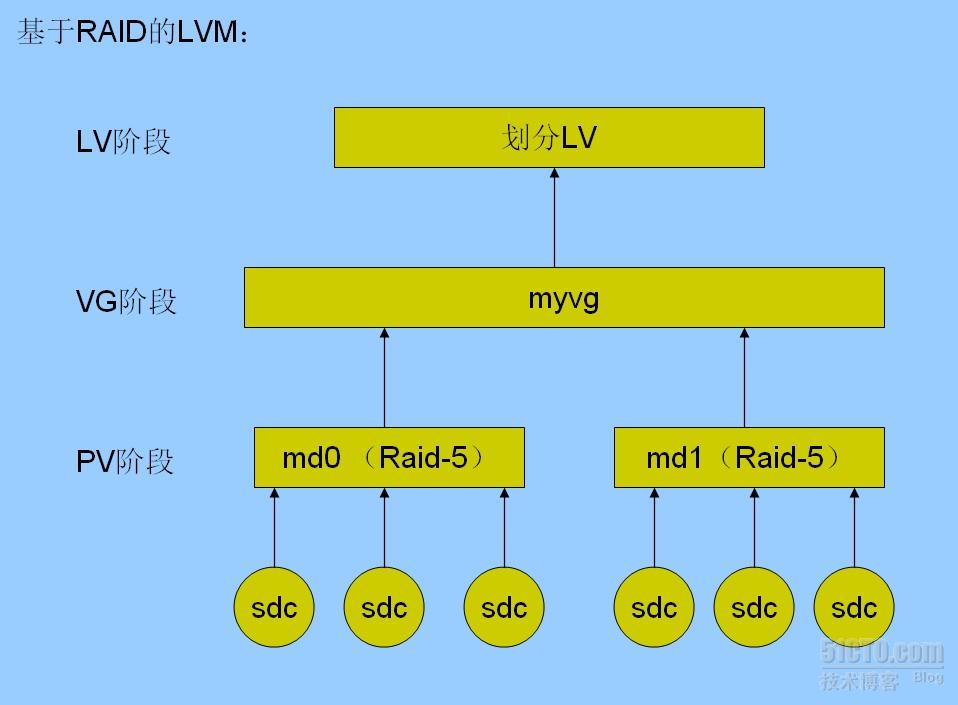

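I found no way to change the number of active disks in a RAID device once it was built: only spares could be added and failed disks removed. So if storage needs are underestimated, the LVM volumes above the RAID can still be extended, but they may not meet the demand. Note that mdadm's grow mode (`mdadm --grow /dev/md0 --raid-devices=N`) can reshape a RAID-5 to more active disks on kernels that support it, so this limitation may not apply to newer systems;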

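If a single RAID array cannot meet the capacity requirement, my tests showed that two RAID arrays can be added to the same VG, joining them seamlessly. But how well does this work in real production? Would the more complex architecture put too much load on the system, or slow down I/O response times? That is still an open question;

With LVM on RAID, if several LVs are created, how is their data distributed across the array? Could this erode the performance gain that the RAID was supposed to provide?

Diagram: joining RAID arrays seamlessly: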