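Containers in k8s are usually managed through a Deployment, so a rolling upgrade will in principle update every pod; the Deployment resource guarantees this. In real-world operations, however, we need canary (gray) releases to validate a service, which means releasing to only a subset of instances. This seems to run against how a k8s Deployment works, yet every ops engineer knows how necessary canary releases are. How do we solve this?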

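Best practice:

Define two different Deployments, for example fop-gate and fop-gate-canary, whose pods use exactly the same image and configuration files. So what differs?

The answer: replicas (the canary fop-gate-canary has 1 replica, while fop-gate has 9).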

    cat deployment.yaml

    apiVersion: apps/v1beta1
    kind: Deployment
    metadata:
      {{if eq .system.SERVICE "fop-gate-canary"}}
      name: fop-gate-canary
      {{else if eq .system.SERVICE "fop-gate"}}
      name: fop-gate
      {{end}}
      namespace: dora-apps
      labels:
        app: fop-gate
        team: dora
        type: basic
      annotations:
        log.qiniu.com/global.agent: "logexporter"
        log.qiniu.com/global.version: "v2"
    spec:
      {{if eq .system.SERVICE "fop-gate-canary"}}
      replicas: 1
      {{else if eq .system.SERVICE "fop-gate"}}
      replicas: 9
      {{end}}
      minReadySeconds: 30
      strategy:
        type: RollingUpdate
        rollingUpdate:
          maxSurge: 1
          maxUnavailable: 0
      template:
        metadata:
          labels:
            app: fop-gate
            team: dora
            type: basic
        spec:
          terminationGracePeriodSeconds: 90
          containers:
          - name: fop-gate
            image: reg.qiniu.com/dora-apps/fop-gate:20190218210538-6-master
    ...........

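As we know, a Deployment automatically adds a "pod-template-hash" label to the pods it creates so that they can be told apart. In other words, each Deployment manages only its own pods and the two never get mixed up. Because both sets of pods carry the same app: fop-gate label, the endpoint list then contains the pods of both fop-gate and fop-gate-canary, and when other services call fop-gate their requests are spread across all 10 pods.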
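As a rough back-of-the-envelope check on how much traffic the canary sees, the sketch below computes the canary's share of requests from the two replica counts. It assumes the Service spreads requests evenly across all endpoints, which is only approximately true in practice; `canary_share` is a hypothetical helper name, not part of any tool.

```shell
#!/bin/sh
# canary_share CANARY_REPLICAS STABLE_REPLICAS
# Prints the approximate percentage of requests that reach canary pods,
# assuming every endpoint receives an equal share of traffic.
canary_share() {
    canary=$1
    stable=$2
    awk -v c="$canary" -v s="$stable" \
        'BEGIN { printf "%.1f\n", 100 * c / (c + s) }'
}

# Replica counts from the deployment.yaml above: 1 canary pod, 9 regular pods.
canary_share 1 9
```

With 1 canary replica and 9 regular replicas this prints 10.0, so about one request in ten exercises the new version. Raising the canary's replicas is the only knob this approach offers for changing that ratio.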

So how do we actually do a canary release?

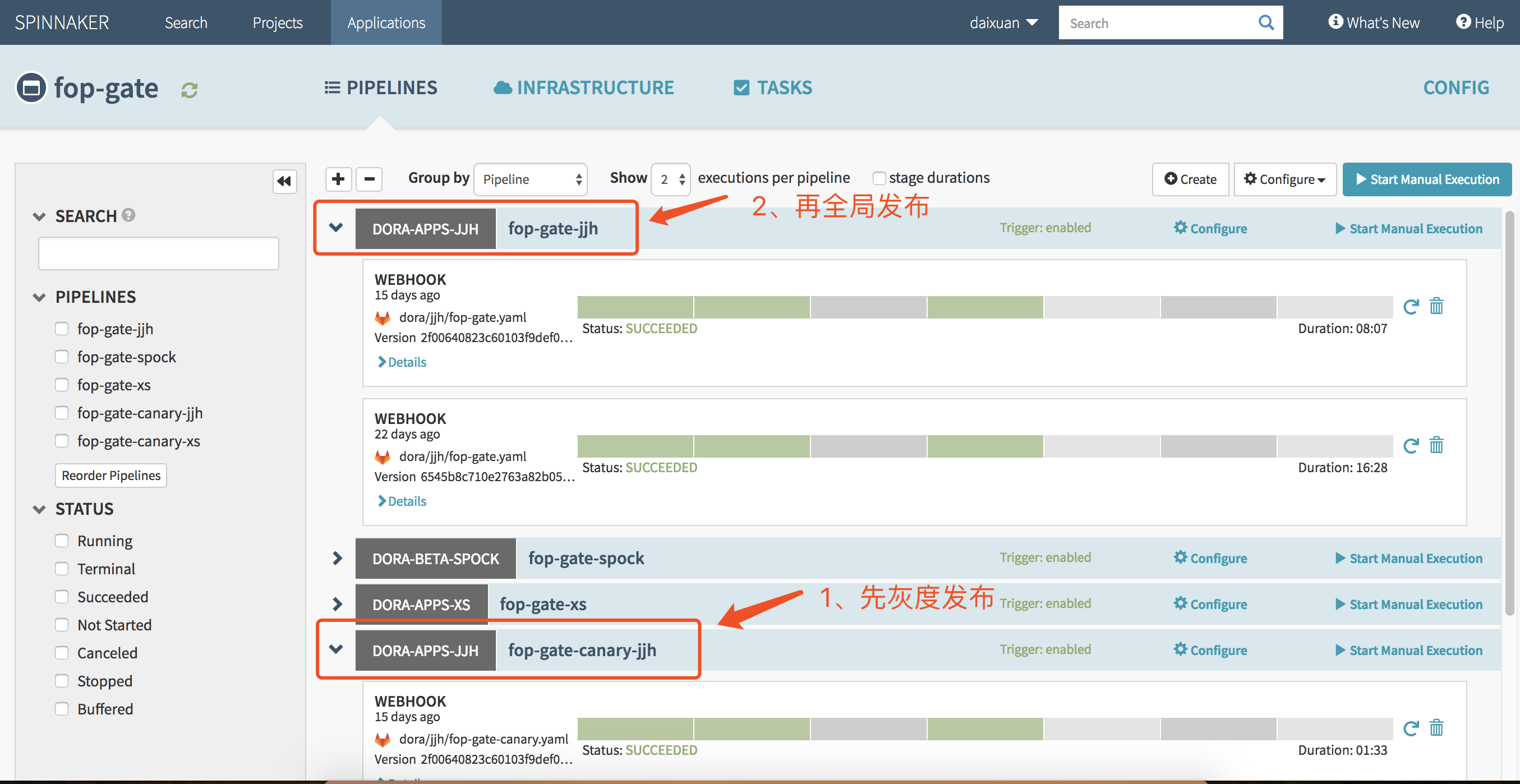

Best practice: create two different pipelines. Release through the fop-gate-canary pipeline first, then roll out everywhere through the fop-gate pipeline (the configuration below is shown before rendering; note that the pipeline IDs differ):

    "fop-gate":
      "templates":
      - "dora/jjh/fop-gate/configmap.yaml"
      - "dora/jjh/fop-gate/service.yaml"
      - "dora/jjh/fop-gate/deployment.yaml"
      - "dora/jjh/fop-gate/ingress.yaml"
      - "dora/jjh/fop-gate/ingress_debug.yaml"
      - "dora/jjh/fop-gate/log-applog-configmap.yaml"
      - "dora/jjh/fop-gate/log-auditlog-configmap.yaml"
      "pipeline": "569325e6-6d6e-45ca-b21e-24016a9ef326"
    "fop-gate-canary":
      "templates":
      - "dora/jjh/fop-gate/configmap.yaml"
      - "dora/jjh/fop-gate/service.yaml"
      - "dora/jjh/fop-gate/deployment.yaml"
      - "dora/jjh/fop-gate/ingress.yaml"
      - "dora/jjh/fop-gate/log-applog-configmap.yaml"
      - "dora/jjh/fop-gate/log-auditlog-configmap.yaml"
      "pipeline": "15f7dd6a-bd01-41bc-bac5-8266d63fc3a5"

Note the release order:

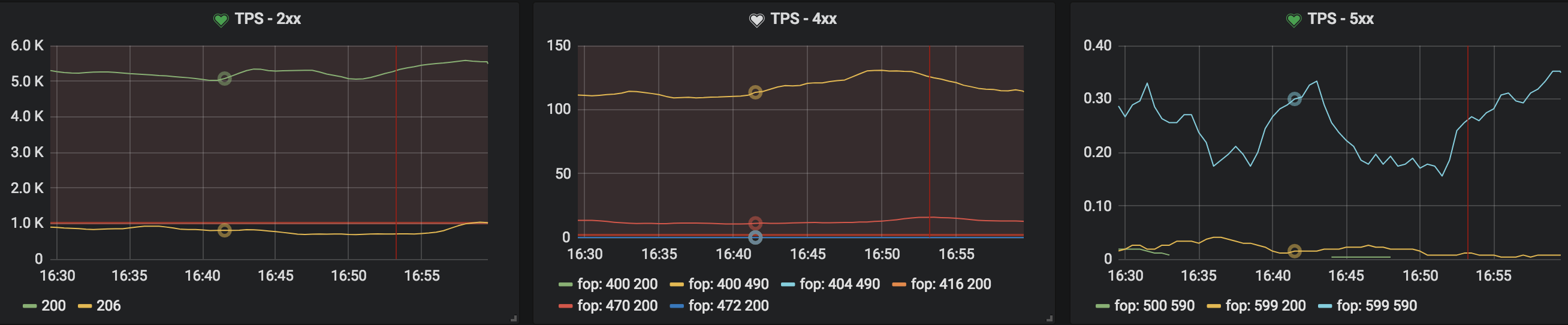

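Once the canary release is done, you can log in to the pod to check its logs and watch the relevant Grafana dashboards for changes in TPS2XX and TPS5XX, then decide whether to go ahead and release fop-gate. That is the whole point of a canary release.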
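Rather than eyeballing a handful of log lines, the log check can be sketched as a status-code tally. This is only an illustration: it assumes a tab-separated audit log whose eighth field is the HTTP status code (the same field the session in this post prints with awk), `count_status` is a hypothetical helper name, and the sample lines are made-up stand-ins for real log entries.

```shell
#!/bin/sh
# count_status FILE
# Tallies the values of field 8 in a tab-separated log and prints
# one "code count" pair per line, sorted by code.
count_status() {
    awk -F'\t' '{ n[$8]++ } END { for (c in n) print c, n[c] }' "$1" | sort
}

# Hypothetical sample data standing in for a real audit log file.
sample=$(mktemp)
printf 'a\tb\tc\td\te\tf\tg\t200\n' >  "$sample"
printf 'a\tb\tc\td\te\tf\tg\t200\n' >> "$sample"
printf 'a\tb\tc\td\te\tf\tg\t502\n' >> "$sample"

count_status "$sample"
rm -f "$sample"
```

Inside the canary pod the same function could be pointed at a file under /app/auditlog; a sudden rise in 5XX counts there would be a reason to stop before releasing fop-gate.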

➜ dora git:(daixuan) ✗ kubectl get pod -o wide | grep fop-gate

fop-gate-685d66768b-5v6q4 2/2 Running 0 15d 172.20.122.161 jjh304 <none>

fop-gate-685d66768b-69c6q 2/2 Running 0 4d21h 172.20.129.52 jjh1565 <none>

fop-gate-685d66768b-79fhd 2/2 Running 0 15d 172.20.210.227 jjh219 <none>

fop-gate-685d66768b-f68zq 2/2 Running 0 15d 172.20.177.98 jjh322 <none>

fop-gate-685d66768b-k5l9s 2/2 Running 0 15d 172.20.189.147 jjh1681 <none>

fop-gate-685d66768b-m5n55 2/2 Running 0 15d 172.20.73.78 jjh586 <none>

fop-gate-685d66768b-rr7t6 2/2 Running 0 15d 172.20.218.225 jjh302 <none>

fop-gate-685d66768b-tqvp7 2/2 Running 0 15d 172.20.221.15 jjh592 <none>

fop-gate-685d66768b-xnqn7 2/2 Running 0 15d 172.20.133.80 jjh589 <none>

fop-gate-canary-7cb6dc676f-62n24 2/2 Running 0 15d 172.20.208.28 jjh574 <none>

➜ dora git:(daixuan) ✗ kubectl exec -it fop-gate-canary-7cb6dc676f-62n24 -c fop-gate bash

root@fop-gate-canary-7cb6dc676f-62n24:/# cd app/auditlog/

root@fop-gate-canary-7cb6dc676f-62n24:/app/auditlog# tail -n5 144 | awk -F'\t' '{print $8}'

200

200

200

200

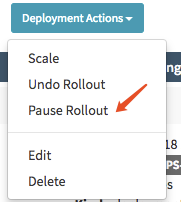

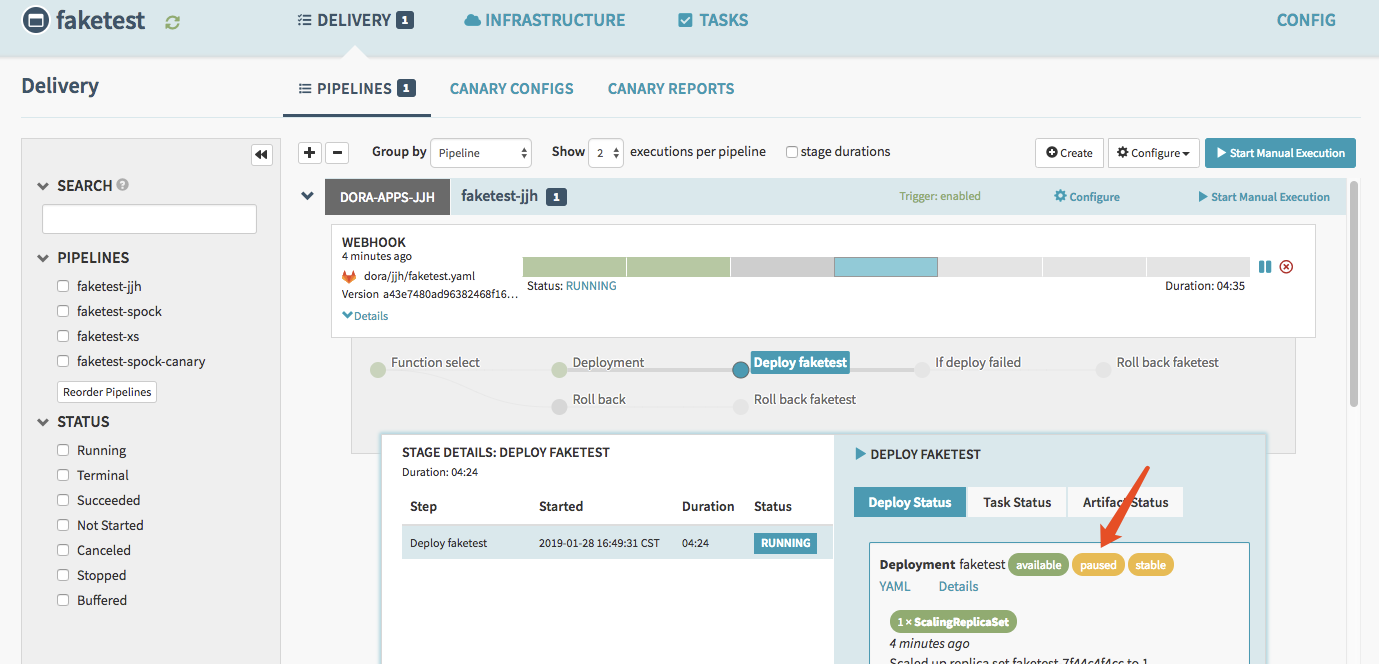

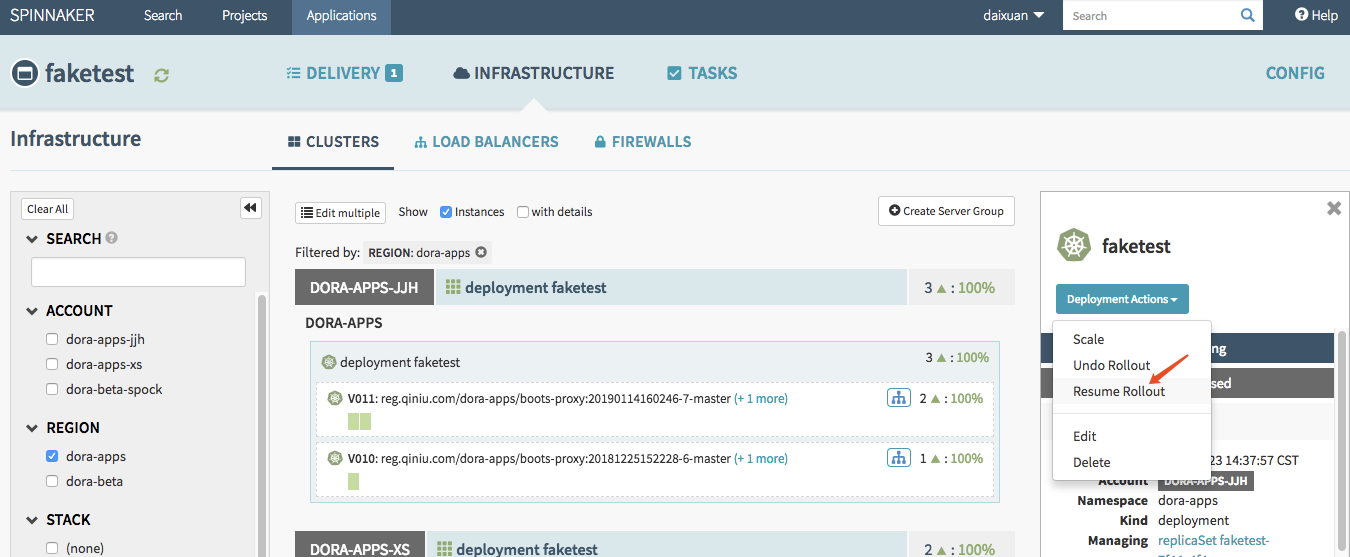

200此外,spinnaker具有發佈具有pause、resume、undo功能,實際測試可行

pause: pauses a release (similar to kubectl rollout pause XXX)

resume: resumes a paused release (similar to kubectl rollout resume XXX)

undo: cancels a release (similar to kubectl rollout undo XXX)

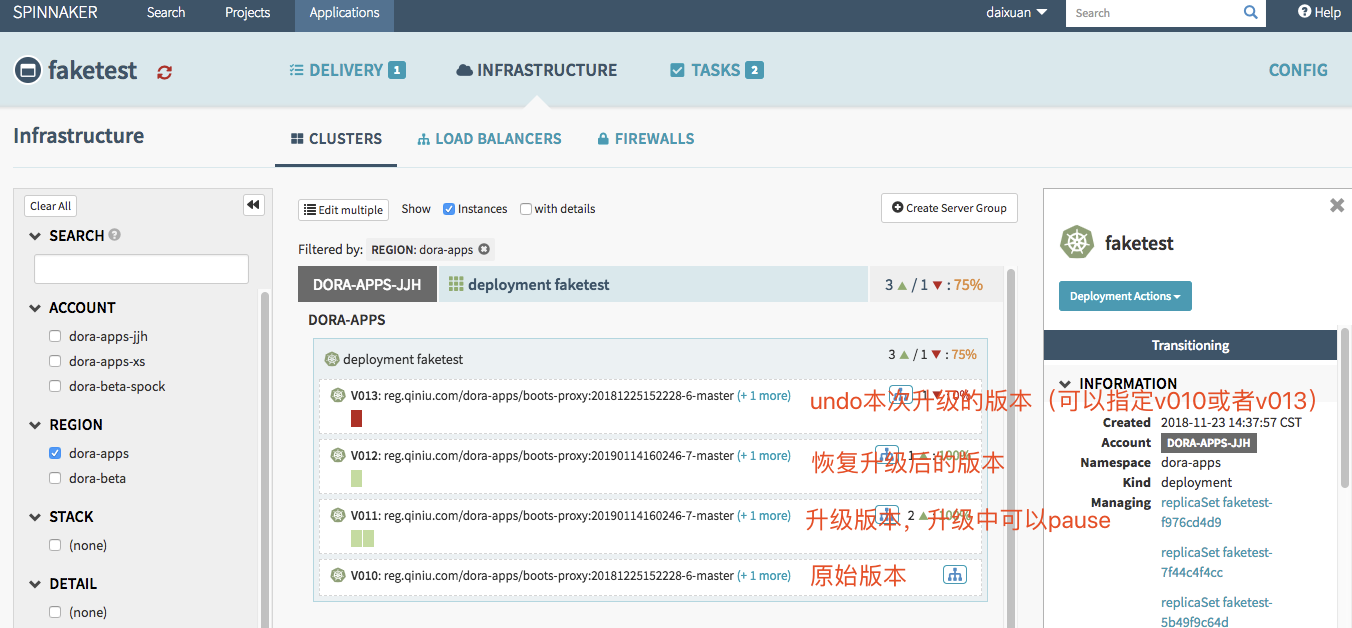

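With these Spinnaker features you can pause and resume promptly when a problem shows up during a normal release. Note that Spinnaker's undo only applies to a release that is in progress; a release in the paused state cannot be undone, which matches kubectl's behavior.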
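For comparison, the kubectl side of that constraint can be sketched as follows. This is a minimal sketch rather than what Spinnaker does internally: it reads the Deployment's spec.paused field and resumes the rollout before undoing it. The namespace and Deployment name come from the deployment.yaml in this post, and `undo_release` is a hypothetical helper name.

```shell
#!/bin/sh
# Namespace and Deployment name taken from the deployment.yaml in this post.
NS=dora-apps
DEPLOY=fop-gate

# undo_release: roll the Deployment back to its previous revision.
# kubectl will not roll back a paused Deployment, so resume it first.
undo_release() {
    paused=$(kubectl get deployment "$DEPLOY" -n "$NS" \
        -o jsonpath='{.spec.paused}')
    if [ "$paused" = "true" ]; then
        kubectl rollout resume "deployment/$DEPLOY" -n "$NS"
    fi
    kubectl rollout undo "deployment/$DEPLOY" -n "$NS"
}

# The block only defines the helper; call undo_release against a real
# cluster when a rollback is actually wanted.
```

Resuming first lets the interrupted rollout continue for a moment before the rollback takes effect, so this suits cases where a brief continuation of the bad release is acceptable.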

We tried one run of release, pause, resume, and undo. The whole sequence produced 4 versions, one per change, because Spinnaker treats a pause or a resume as a new release and bumps the version.

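Summary: the best way to do canary releases in k8s is to define two different Deployments that manage the same kind of service and to create separate pipelines for releasing them, so that they do not interfere with each other. During a normal release you can also use Spinnaker's pause, resume, and undo to control the rollout.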