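The Viola-Jones face detection algorithm was the first real-time face detection algorithm. Its influence needs no elaboration: even today it is still very widely used. As is well known, the algorithm has three parts: Haar features with the integral image, AdaBoost for feature selection, and the cascade model used for training. The cascade is better understood as a strategy, which puts it in a category of its own. That category can be seen as handling the "details" of an algorithm, and different people hold different views of it. The main purpose of the cascade is to reduce training time and, more importantly, to make the classifier generalize by concentrating on the object and on the samples in the object's neighborhood. Similar strategies include the sampling strategy and another cascade model that Kalal proposed in his PhD thesis on TLD. Both effectively reduce training time and help the classifier generalize. Strategies like these all belong to bootstrapping: training is guided by some policy instead of being run blindly over every sample. Let's now look at how Haar features work.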

Haar features and the integral image

1 Theory

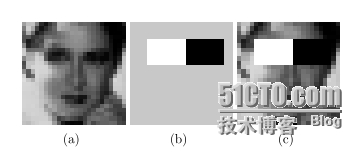

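As I see it, Haar features are a regional feature representation (they focus on regional information). For a given image window, a Haar feature describes the window by the gray-level difference between different local regions of it. In the figure below, (a) shows a window I, (b) shows one Haar pattern, and (c) shows that when the Haar feature value of pattern (b) is computed in window I, only the white and black regions are taken into account (that is, the focus is on regional information).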

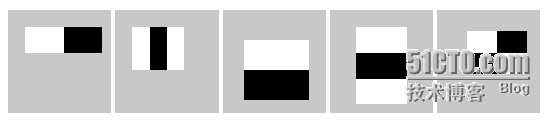

Commonly used Haar feature patterns are:

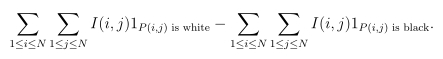

The Haar feature value is computed as follows (window size N × N):

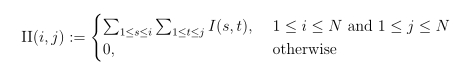
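To make the "difference of regional sums" idea concrete, here is a minimal brute-force sketch for the simplest pattern, two equally sized parts side by side. The function name `twoPartHaarBruteForce`, the nested `std::vector` window, and the sign convention (left part minus right part, which matches the two-part horizontal case in the implementation of section 2.3) are assumptions made for illustration.

```cpp
#include <cstddef>
#include <vector>

// Brute-force value of a two-part horizontal Haar pattern.
// (row, col) is the upper-left corner of the pattern, height is its height,
// and each of the two side-by-side parts is partWidth columns wide.
// Assumed sign convention: sum of the left part minus sum of the right part.
double twoPartHaarBruteForce(const std::vector<std::vector<double> >& window,
                             std::size_t row, std::size_t col,
                             std::size_t height, std::size_t partWidth) {
    double left = 0.0;
    double right = 0.0;
    for (std::size_t i = row; i < row + height; ++i) {
        for (std::size_t j = col; j < col + partWidth; ++j) {
            left += window[i][j];               // pixel in the left part
            right += window[i][j + partWidth];  // matching pixel in the right part
        }
    }
    return left - right;
}
```

Every call touches every pixel of both parts, so the cost grows with the area of the pattern. That cost is what the integral image removes.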

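For a given window and Haar pattern, summing the black and the white regions of the pattern from scratch for every feature value would be far too inefficient. This is where the integral image comes in. For a given N × N window I, the integral image is computed as follows: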

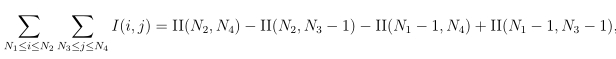
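Written out in the inclusive convention that the implementation in section 2.2 follows (the entry at (i, j) includes pixel (i, j) itself, and indices start at 0), the definition and the recurrence used to fill it in can be stated as below. The symbol II is notation assumed here and may differ from the one in the figure.

```latex
\begin{aligned}
II(i, j) &= \sum_{i'=0}^{i} \sum_{j'=0}^{j} I(i', j'), \qquad 0 \le i, j \le N - 1 \\
II(i, j) &= I(i, j) + II(i-1, j) + II(i, j-1) - II(i-1, j-1)
\end{aligned}
```

Any term of the recurrence whose index is -1 is taken to be 0.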

The sum of the pixels inside a rectangle of the window image is then computed as follows:

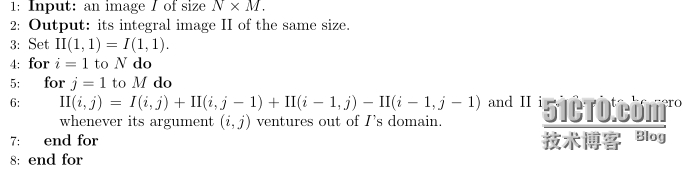
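In the notation of the implementation in section 2.2, take a rectangle whose upper-left corner is (u_i, u_j) and which spans r_i rows and r_j columns, and let II be the integral image with the entry at (i, j) covering all pixels up to and including (i, j). The four-corner rule then reads as follows (symbol names are assumptions chosen to mirror the code):

```latex
S = II(d_i, d_j) - II(u_i - 1, d_j) - II(d_i, u_j - 1) + II(u_i - 1, u_j - 1),
\qquad d_i = u_i + r_i - 1, \quad d_j = u_j + r_j - 1
```

Terms with an index of -1 are taken to be 0. As a small check, the 3 × 3 image with rows (1, 2, 3), (4, 5, 6), (7, 8, 9) has an integral image with rows (1, 3, 6), (5, 12, 21), (12, 27, 45). The lower-right 2 × 2 block (5, 6, 8, 9) then sums to 45 - 6 - 12 + 1 = 28, which agrees with adding the four pixels directly.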

The algorithm for computing the integral image is described as follows:
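A minimal sketch of that single-pass computation, assuming a rectangular image stored as nested `std::vector` rows instead of the `ImgData` class introduced in section 2.1. The names `Grid` and `buildIntegral` are hypothetical; the recurrence is the same one used by `buildIntegralImage` in section 2.2.

```cpp
#include <cstddef>
#include <vector>

typedef std::vector<std::vector<double> > Grid;

// Builds the integral image of a rectangular image in one pass.
// Entry (i, j) of the result is the sum of all pixels in rows 0..i
// and columns 0..j, both inclusive.
Grid buildIntegral(const Grid& image) {
    Grid integral(image.size());
    for (std::size_t i = 0; i < image.size(); ++i) {
        integral[i].resize(image[i].size());
        for (std::size_t j = 0; j < image[i].size(); ++j) {
            double value = image[i][j];
            if (i > 0) value += integral[i - 1][j];  // rows above, columns 0..j
            if (j > 0) value += integral[i][j - 1];  // rows 0..i, columns to the left
            if (i > 0 && j > 0) value -= integral[i - 1][j - 1];  // added twice above
            integral[i][j] = value;
        }
    }
    return integral;
}
```

Each pixel is visited once, so the cost is linear in the number of pixels. Because every entry reads entries computed earlier in the same pass, the loop order matters.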

2 Implementation

2.1 Basic data structure

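To make the algorithm easier to implement, I designed an ImgData data structure that concentrates on the data part of an image. It may not be complete, but it currently meets my needs, and you can easily adapt it to yours, for example by designing a three-channel image data structure on top of ImgData.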
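As a rough illustration of the three-channel idea, the sketch below composes three single-channel planes into one image type. Everything in it is hypothetical: `Plane` is only a small stand-in for the role ImgData plays in this article (it is not the ImgData class itself), and `ThreeChannelImage` and `toGray` are names invented for this example.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for one single-channel plane; in the article's code
// this role would be played by ImgData<T>.
template <typename T>
class Plane {
public:
    Plane(std::size_t rows, std::size_t cols, T val = T())
        : rows_(rows), cols_(cols), data_(rows * cols, val) {}
    T& at(std::size_t r, std::size_t c) { return data_[r * cols_ + c]; }
    const T& at(std::size_t r, std::size_t c) const { return data_[r * cols_ + c]; }
    std::size_t rows() const { return rows_; }
    std::size_t cols() const { return cols_; }
private:
    std::size_t rows_;
    std::size_t cols_;
    std::vector<T> data_;
};

// Hypothetical three-channel image made of three planes of equal size.
template <typename T>
struct ThreeChannelImage {
    Plane<T> r, g, b;
    ThreeChannelImage(std::size_t rows, std::size_t cols, T val = T())
        : r(rows, cols, val), g(rows, cols, val), b(rows, cols, val) {}

    // Equal-weight average of the three channels, returned as a single plane
    // that a single-channel feature pipeline could consume.
    Plane<double> toGray() const {
        Plane<double> gray(r.rows(), r.cols());
        for (std::size_t i = 0; i < r.rows(); ++i)
            for (std::size_t j = 0; j < r.cols(); ++j)
                gray.at(i, j) = (r.at(i, j) + g.at(i, j) + b.at(i, j)) / 3.0;
        return gray;
    }
};
```

Keeping the channels as separate planes means every single-channel routine can be reused per channel. The equal-weight gray conversion is only one possible choice.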

    #ifndef _IMGDATA_H_
    #define _IMGDATA_H_

    #include <cassert>
    #include <cstddef>
    #include <iostream>

    /**
     * @brief ImgData is a template class that operates only on image data.
     *        It handles a single channel for now, but it can serve as a
     *        building block for classes with three or more channels.
     *
     * Members:
     *   mdata  pointer to the image data (one heap-allocated array per row)
     *   mrows  number of rows of the image data
     *   mcols  number of columns of the image data
     */
    template <typename T> class ImgData {
    private:
        T** mdata;
        unsigned int mrows;
        unsigned int mcols;

    public:
        /**
         * @brief Constructor; every element is initialized to val.
         *
         * @param rows number of rows of the image data
         * @param cols number of columns of the image data
         * @param val  initial value for the image data, 0 by default
         */
        ImgData(unsigned int rows, unsigned int cols, T val = 0) {
            assert(rows > 0 && cols > 0);
            mrows = rows;
            mcols = cols;
            mdata = new T*[mrows];
            for (unsigned int i = 0; i < mrows; i++) {
                mdata[i] = new T[mcols];
            }
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    mdata[i][j] = val;
                }
            }
        }

        /**
         * @brief Copy constructor (deep copy).
         */
        ImgData(const ImgData<T>& data) {
            mrows = data.rows();
            mcols = data.cols();
            mdata = new T*[mrows];
            for (unsigned int i = 0; i < mrows; i++) {
                mdata[i] = new T[mcols];
            }
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    mdata[i][j] = data[i][j];
                }
            }
        }

        /**
         * @brief Destructor; releases every row and then the row table.
         */
        ~ImgData() {
            for (unsigned int i = 0; i < mrows; i++) {
                delete[] mdata[i];
            }
            delete[] mdata;
        }

        /**
         * @brief Overloaded assignment operator (deep copy).
         */
        ImgData<T>& operator=(const ImgData<T>& data) {
            // Nothing to do on self-assignment.
            if (this == &data) {
                return *this;
            }
            // Always release the old storage before allocating the new one,
            // so that no memory leaks when the sizes happen to match.
            for (unsigned int i = 0; i < mrows; i++) {
                delete[] mdata[i];
            }
            delete[] mdata;
            mrows = data.rows();
            mcols = data.cols();
            mdata = new T*[mrows];
            for (unsigned int i = 0; i < mrows; i++) {
                mdata[i] = new T[mcols];
            }
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    mdata[i][j] = data[i][j];
                }
            }
            return *this;
        }

        /**
         * @brief Set the values of the image data.
         *
         * @param data pointer to an array of row pointers holding at least
         *             rows() x cols() values to copy into the image data
         */
        void setData(const T* const* data = NULL) {
            assert(data != NULL);
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    mdata[i][j] = data[i][j];
                }
            }
        }

        /**
         * @brief Overloaded [] operator so that data[i][j] can be used.
         */
        T* operator[](unsigned int n) const {
            assert(n < mrows);
            return mdata[n];
        }

        /**
         * @brief Overloaded -= operator: subtract val from every element.
         */
        void operator-=(T val) {
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    mdata[i][j] -= val;
                }
            }
        }

        /**
         * @brief Overloaded += operator: add val to every element.
         */
        void operator+=(T val) {
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    mdata[i][j] += val;
                }
            }
        }

        /**
         * @brief Overloaded *= operator: multiply every element by val.
         */
        void operator*=(T val) {
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    mdata[i][j] *= val;
                }
            }
        }

        /**
         * @brief Overloaded /= operator: divide every element by val.
         */
        void operator/=(T val) {
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    mdata[i][j] /= val;
                }
            }
        }

        /**
         * @brief Element-wise product (ImgData .* ImgData).
         *
         * @return a const ImgData holding the products
         */
        const ImgData<T> cwiseProduct(const ImgData<T>& imgData) const {
            assert(mrows == imgData.rows() && mcols == imgData.cols());
            ImgData<T> temp(imgData.rows(), imgData.cols());
            T** tempData = temp.data();
            T** otherData = imgData.data();
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    tempData[i][j] = mdata[i][j] * otherData[i][j];
                }
            }
            return temp;
        }

        /**
         * @brief Compute the sum of the image data.
         *
         * @return the sum of all elements as a long double
         */
        long double sum() const {
            long double imageSum = 0;
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    imageSum += mdata[i][j];
                }
            }
            return imageSum;
        }

        /**
         * @brief Get the pointer to the image data, for changing its values.
         */
        T** data() const {
            return mdata;
        }

        /**
         * @brief Get the number of rows of the image data.
         */
        unsigned int rows() const {
            return mrows;
        }

        /**
         * @brief Get the number of columns of the image data.
         */
        unsigned int cols() const {
            return mcols;
        }

        /**
         * @brief Print the image data, for validation.
         */
        void print() const {
            for (unsigned int i = 0; i < mrows; i++) {
                for (unsigned int j = 0; j < mcols; j++) {
                    std::cout << mdata[i][j] << " ";
                }
                std::cout << std::endl;
            }
        }
    };

    #endif

2.2 Integral image implementation

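This section provides an implementation that computes the integral image, together with a function that uses the integral image to compute the sum over a rectangular region.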

    #ifndef _INTEGRALIMG_H_
    #define _INTEGRALIMG_H_

    #include <cassert>
    #include "cvImgData.h"

    /**
     * @brief Compute an integral image in linear time.
     *
     * @param image         original image
     * @param integralImage receives the integral image; it must have the
     *                      same size as image
     */
    template <typename T>
    void buildIntegralImage(
        const ImgData<T>& image,
        ImgData<T>& integralImage
    ) {
        assert(image.rows() == integralImage.rows());
        assert(image.cols() == integralImage.cols());
        int nRows = image.rows();
        int nCols = image.cols();
        // O(n) in the number of pixels. Each entry depends on entries computed
        // earlier in the same pass, so this loop should not be parallelized.
        T** imgData = image.data();
        T** integralImgData = integralImage.data();
        for (int i = 0; i < nRows; i++) {
            for (int j = 0; j < nCols; j++) {
                integralImgData[i][j] = imgData[i][j];
                integralImgData[i][j] += i > 0 ? integralImgData[i-1][j] : 0;
                integralImgData[i][j] += j > 0 ? integralImgData[i][j-1] : 0;
                integralImgData[i][j] -= (i > 0 && j > 0) ? integralImgData[i-1][j-1] : 0;
            }
        }
    }

    /**
     * @brief Sum up the pixels in a sub-rectangle of the image.
     *
     * @param integralImage the integral image of the image
     * @param ui row index of the sub-rectangle's upper-left corner
     * @param uj column index of the sub-rectangle's upper-left corner
     * @param ir number of rows of the sub-rectangle
     * @param jr number of columns of the sub-rectangle
     * @return the sum
     */
    template <typename T>
    long double sumImagePart(
        const ImgData<T>& integralImage,
        int ui,
        int uj,
        int ir,
        int jr
    ) {
        // Lower-right corner of the rectangle
        int di = ui + ir - 1;
        int dj = uj + jr - 1;
        // Standard four-corner operation
        T** integralData = integralImage.data();
        long double sum = integralData[di][dj];
        sum -= ui > 0 ? integralData[ui-1][dj] : 0;
        sum -= uj > 0 ? integralData[di][uj-1] : 0;
        sum += (ui > 0 && uj > 0) ? integralData[ui-1][uj-1] : 0;
        return sum;
    }

    #endif

2.3 Haar feature implementation

For the Haar feature computation, the five basic patterns of the Viola-Jones algorithm are implemented here.
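To get a feel for how many values such an enumeration produces, the sketch below counts the placements of the five patterns when all parts of a pattern must have the same size (the situation that corresponds to `enforceShape = true` with `FOLLOW_VJ` set to `true` in the code that follows). The helper names `countPlacements` and `countHaarFeatures` are hypothetical, and the sketch only counts features without computing them.

```cpp
// Number of placements of a pattern whose smallest form occupies
// baseRows x baseCols pixels and which may be scaled by integer factors
// independently in each direction, inside a rows x cols window.
unsigned long countPlacements(int rows, int cols, int baseRows, int baseCols) {
    unsigned long count = 0;
    for (int h = baseRows; h <= rows; h += baseRows) {
        for (int w = baseCols; w <= cols; w += baseCols) {
            // A pattern of size h x w has this many upper-left positions.
            count += static_cast<unsigned long>(rows - h + 1) *
                     static_cast<unsigned long>(cols - w + 1);
        }
    }
    return count;
}

// Total over the five basic patterns: two and three parts side by side,
// two and three parts stacked, and the four-part checkerboard.
unsigned long countHaarFeatures(int rows, int cols) {
    return countPlacements(rows, cols, 1, 2)   // two parts, horizontal
         + countPlacements(rows, cols, 1, 3)   // three parts, horizontal
         + countPlacements(rows, cols, 2, 1)   // two parts, vertical
         + countPlacements(rows, cols, 3, 1)   // three parts, vertical
         + countPlacements(rows, cols, 2, 2);  // four parts, mixed
}
```

For a 24 × 24 window, `countHaarFeatures(24, 24)` gives 162336, which explains why the feature vector is large even for small training samples and why relaxing the shape constraint costs considerably more memory.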

    #ifndef _HARR_H_
    #define _HARR_H_

    #include <iostream>
    #include <fstream>
    #include <vector>
    #include <stack>
    #include <string>
    #include <cassert>
    #include <cmath>
    #include "cvImgData.h"
    #include "cvIntegralImg.h"

    #define FOLLOW_VJ true
    #define STD_NORM_CONST 1e4
    #define FLAT_THRESHOLD 1

    /**
     * @brief Sum of the first k elements of array.
     */
    inline int sumOfElement(int array[], int k) {
        int ret = 0;
        for (int i = 0; i < k; i++) {
            ret += array[i];
        }
        return ret;
    }

    /**
     * @brief Compute Haar-like features with the integral image,
     *        mostly for use on training samples.
     *
     * @param image        the sample; it is normalized in place before its
     *                     integral image is built
     * @param features     receives all the features from this image
     * @param enforceShape true for the original Viola-Jones proposal, false
     *                     for a more extensive definition of rectangle
     *                     features (requires significantly more memory)
     */
    inline void computeHaarLikeFeaturesOnlyUsingIntegralImage(
        ImgData<double>& image,
        std::vector<double>& features,
        bool enforceShape = true
    ) {
        features.clear();

        int nRows = image.rows();
        int nCols = image.cols();

        // First normalize the example; this might prove key to learning success.
        // Subtract the mean value.
        float mean = static_cast<float>(image.sum() / (float)(nRows * nCols));
        image -= mean;
        // Standard deviation of the centered data.
        float stdDev = static_cast<float>(
            std::sqrt(image.cwiseProduct(image).sum() / (float)(nRows * nCols)));
        if (stdDev < FLAT_THRESHOLD || std::isnan(stdDev)) {
            stdDev = FLAT_THRESHOLD;
            std::cout << "Found one completely flat example. Don't worry, "
                         "it will presumably be treated as an outlier." << std::endl;
        }
        // Fully use the float range.
        image *= STD_NORM_CONST;
        image /= stdDev;

        // Integral image of the normalized sample
        ImgData<double> integralImage(nRows, nCols);
        buildIntegralImage(image, integralImage);

        // Two horizontal patterns: two parts (portions == 2) and three parts (portions == 3)
        for (int portions = 2; portions < 4; portions++) {
            // (i, j) is the upper-left coordinate
            for (int i = 0; i < nRows; i++)
                for (int j = 0; j < nCols; j++) {
                    // How long l1 can be (it stays 0 for two-part features)
                    int l1MIN = portions == 2 ? 0 : 1;
                    int l1MAX = portions == 2 ? 0 : nCols - j;
                    // ir is the height; l1, l2, l3 are the widths of the parts
                    for (int ir = 1; ir <= nRows - i; ir++)
                        for (int l1 = l1MIN; l1 <= l1MAX; l1++)
                            for (int l2 = 1; l2 <= nCols - j - l1; l2++)
                                for (int l3 = 1; l3 <= nCols - j - l1 - l2; l3++) {
                                    // Make these lengths more intelligible
                                    int lengths[3];
                                    lengths[0] = l1;
                                    lengths[1] = l2;
                                    lengths[2] = l3;
                                    // Now go for the feature
                                    double feature = 0;
                                    if (portions == 2) {
                                        if (enforceShape && l2 != l3)
                                            continue;
                                        feature += (float)sumImagePart(integralImage, i, j, ir, l2);
                                        feature -= (float)sumImagePart(integralImage, i, j + l2, ir, l3);
                                    } else {
                                        if (enforceShape && FOLLOW_VJ && !(l1 == l3 && l1 == l2))
                                            continue;
                                        if (enforceShape && !FOLLOW_VJ && !(l1 == l3 && l1 >= l2))
                                            continue;
                                        for (int k = 0; k < 3; k++) {
                                            // Alternating sign: +, -, +
                                            int factor = (k % 2 == 0) ? 1 : -1;
                                            // Column offset of part k
                                            int advance = k == 0 ? 0 : sumOfElement(lengths, k);
                                            feature += factor * (float)sumImagePart(integralImage, i, j + advance, ir, lengths[k]);
                                        }
                                    }
                                    // Store
                                    features.push_back(feature);
                                }
                }
        }

        // Two vertical patterns; without FOLLOW_VJ only the two-part one is kept
        for (int portions = 2; portions < 4; portions++) {
            // (i, j) is the upper-left coordinate
            for (int i = 0; i < nRows; i++)
                for (int j = 0; j < nCols; j++) {
                    int l1MIN = portions == 2 ? 0 : 1;
                    int l1MAX = portions == 2 ? 0 : nRows - i;
                    // jr is the width; l1, l2, l3 are the heights of the parts
                    for (int jr = 1; jr <= nCols - j; jr++)
                        for (int l1 = l1MIN; l1 <= l1MAX; l1++)
                            for (int l2 = 1; l2 <= nRows - i - l1; l2++)
                                for (int l3 = 1; l3 <= nRows - i - l1 - l2; l3++) {
                                    // Make these lengths more intelligible
                                    int lengths[3];
                                    lengths[0] = l1;
                                    lengths[1] = l2;
                                    lengths[2] = l3;
                                    // Now go for the feature
                                    double feature = 0;
                                    if (portions == 2) {
                                        if (enforceShape && !(l2 == l3))
                                            continue;
                                        feature += (float)sumImagePart(integralImage, i, j, l2, jr);
                                        feature -= (float)sumImagePart(integralImage, i + l2, j, l3, jr);
                                    } else {
                                        if (enforceShape && FOLLOW_VJ && !(l1 == l2 && l1 == l3))
                                            continue;
                                        // The three-part vertical pattern is skipped entirely
                                        // when the shape is enforced without FOLLOW_VJ.
                                        if (enforceShape && !FOLLOW_VJ)
                                            continue;
                                        for (int k = 0; k < 3; k++) {
                                            // Alternating sign: +, -, +
                                            int factor = (k % 2 == 0) ? 1 : -1;
                                            // Row offset of part k
                                            int advance = k == 0 ? 0 : sumOfElement(lengths, k);
                                            feature += factor * (float)sumImagePart(integralImage, i + advance, j, lengths[k], jr);
                                        }
                                    }
                                    // Store
                                    features.push_back(feature);
                                }
                }
        }

        // A mixed pattern (four parts arranged as a 2 x 2 checkerboard)
        for (int i = 0; i < nRows; i++)
            for (int j = 0; j < nCols; j++)
                for (int i_l1 = 1; i_l1 < nRows - i; i_l1++)
                    for (int i_l2 = 1; i_l2 <= nRows - i - i_l1; i_l2++)
                        for (int j_l1 = 1; j_l1 < nCols - j; j_l1++)
                            for (int j_l2 = 1; j_l2 <= nCols - j - j_l1; j_l2++) {
                                if (enforceShape && !(i_l1 == i_l2 && j_l1 == j_l2))
                                    continue;
                                double feature = 0;
                                feature += (float)sumImagePart(integralImage, i, j, i_l1, j_l1);
                                feature -= (float)sumImagePart(integralImage, i, j + j_l1, i_l1, j_l2);
                                feature -= (float)sumImagePart(integralImage, i + i_l1, j, i_l2, j_l1);
                                feature += (float)sumImagePart(integralImage, i + i_l1, j + j_l1, i_l2, j_l2);
                                // Record
                                features.push_back(feature);
                            }
    }

    #endif

Reference:

Yi-Qing Wang, An Analysis of the Viola-Jones Face Detection Algorithm, Image Processing On Line (IPOL).