Test data used in this article:

```text
tom 20 8000
nancy 22 8000
ketty 22 9000
stone 19 10000
green 19 11000
white 39 29000
socrates 30 40000
```

In MapReduce, records are partitioned, sorted, and grouped by key.
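The partition, sort, and group steps can be made concrete with a small in-memory model in plain Java, with no Hadoop dependency. The class and method names are hypothetical. The partition formula mirrors the one used by Hadoop's default HashPartitioner, `(hashCode & Integer.MAX_VALUE) % numReduceTasks`. A `TreeMap` stands in for both the sort and the grouping of equal keys. This is only a sketch of the idea: the real shuffle spills, merges, and sorts serialized records.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.SortedMap;
import java.util.TreeMap;

// Toy model of the shuffle: partition -> sort -> group. Plain Java, not Hadoop code.
class ShuffleSketch {

    // Same formula as Hadoop's default HashPartitioner.
    static int partition(String key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    // One sorted map per reducer: keys are kept in ascending order, and the
    // values of equal keys are collected into one list (one "group").
    static List<SortedMap<String, List<Integer>>> shuffle(
            List<Map.Entry<String, Integer>> mapOutput, int numReduceTasks) {
        List<SortedMap<String, List<Integer>>> reducers = new ArrayList<>();
        for (int i = 0; i < numReduceTasks; i++) {
            reducers.add(new TreeMap<>());
        }
        for (Map.Entry<String, Integer> kv : mapOutput) {
            int p = partition(kv.getKey(), numReduceTasks);
            reducers.get(p)
                    .computeIfAbsent(kv.getKey(), k -> new ArrayList<>())
                    .add(kv.getValue());
        }
        return reducers;
    }

    public static void main(String[] args) {
        List<Map.Entry<String, Integer>> mapOutput = List.of(
                Map.entry("tom", 8000), Map.entry("nancy", 8000),
                Map.entry("ketty", 9000), Map.entry("tom", 20));
        List<SortedMap<String, List<Integer>>> reducers = shuffle(mapOutput, 2);
        for (int i = 0; i < reducers.size(); i++) {
            System.out.println("reducer " + i + " -> " + reducers.get(i));
        }
    }
}
```

Both `tom` records always land in the same partition and end up in one group, because the partition is computed from the key alone.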

MapReduce sorts map output by key. For the basic key types, such as IntWritable (for int), LongWritable (for long), and Text, the default order is ascending.
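As a rough plain-Java analogy, the natural order of `Long` is ascending, which is the same direction MapReduce uses for `LongWritable` keys by default. Getting descending order requires a different comparator. In Hadoop this is typically done either in the key's own `compareTo()` (the approach this article takes) or by registering a comparator with `Job.setSortComparatorClass(...)`. The class below is hypothetical and uses no Hadoop types.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Plain-Java analogy: Long's natural order plays the role of LongWritable's default order.
class DefaultOrderSketch {

    static List<Long> ascending(List<Long> keys) {
        List<Long> copy = new ArrayList<>(keys);
        Collections.sort(copy);                // natural order: ascending
        return copy;
    }

    static List<Long> descending(List<Long> keys) {
        List<Long> copy = new ArrayList<>(keys);
        copy.sort(Collections.reverseOrder()); // flipped comparator: descending
        return copy;
    }

    public static void main(String[] args) {
        List<Long> salaries = List.of(8000L, 40000L, 9000L, 11000L);
        System.out.println(ascending(salaries));  // [8000, 9000, 11000, 40000]
        System.out.println(descending(salaries)); // [40000, 11000, 9000, 8000]
    }
}
```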

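Why define a custom sort order? Suppose the requirement calls for a custom key type with its own ordering. People are sorted by salary in descending order and, when salaries are equal, by age in ascending order.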

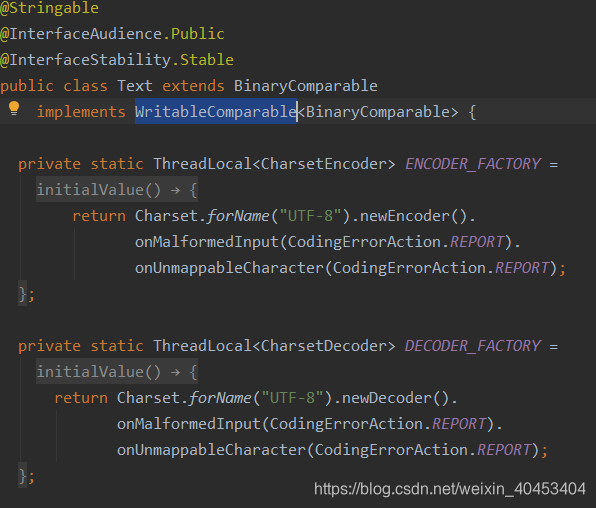
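The required ordering can be prototyped with an ordinary `Comparator` before it is wired into a Hadoop key. This sketch uses a hypothetical `Emp` holder class (not the Hadoop `Person` key) and the test data above. It uses `Integer.compare` instead of subtraction, because `a - b` gives the wrong sign when the difference overflows `int` (for example with large negative values), while `Integer.compare` never does.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Prototype of "salary descending, then age ascending" in plain Java.
class SalaryAgeOrderSketch {

    // Hypothetical holder class for this sketch only.
    static final class Emp {
        final String name;
        final int age;
        final int salary;

        Emp(String name, int age, int salary) {
            this.name = name;
            this.age = age;
            this.salary = salary;
        }

        @Override
        public String toString() {
            return salary + " " + age + " " + name; // same layout as Person.toString()
        }
    }

    static final Comparator<Emp> ORDER = (a, b) -> {
        int bySalary = Integer.compare(b.salary, a.salary); // arguments swapped => descending
        if (bySalary != 0) {
            return bySalary;
        }
        return Integer.compare(a.age, b.age);               // ascending
    };

    static List<Emp> sorted(List<Emp> people) {
        List<Emp> copy = new ArrayList<>(people);
        copy.sort(ORDER);
        return copy;
    }

    public static void main(String[] args) {
        List<Emp> data = List.of(
                new Emp("tom", 20, 8000), new Emp("nancy", 22, 8000),
                new Emp("ketty", 22, 9000), new Emp("stone", 19, 10000),
                new Emp("green", 19, 11000), new Emp("white", 39, 29000),
                new Emp("socrates", 30, 40000));
        sorted(data).forEach(System.out::println);
    }
}
```

Running `main` prints the same seven lines as the job output at the end of this article. `Comparator.comparingInt(...)` combined with `reversed()` and `thenComparingInt(...)` would express the same order. The explicit lambda is shown because its body maps directly onto a `compareTo()` method.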

Take the Text type as an example:

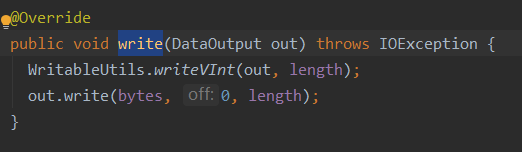

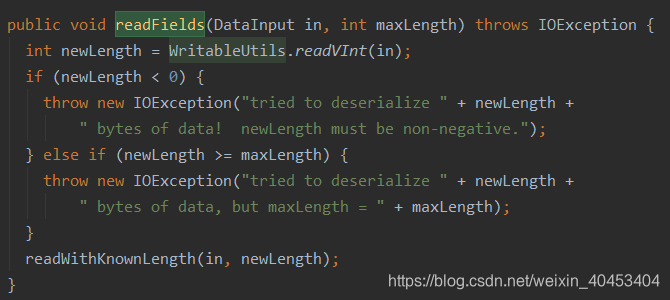

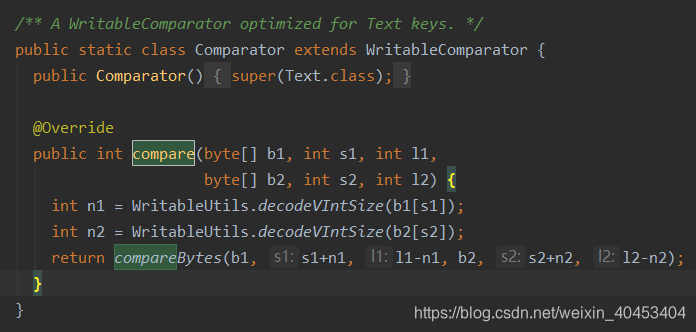

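The Text class implements the WritableComparable interface and provides write(), readFields(), and comparison methods (compareTo(), plus a byte-level compare() in its nested Comparator). readFields() performs deserialization, write() performs serialization, and the comparison methods define the order in which keys are sorted.

A custom type that will be used as a sort key therefore needs the same methods. It must implement WritableComparable and provide write(), readFields(), and compareTo().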
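The `write()`/`readFields()` contract can be exercised without a cluster. The sketch below gives a hypothetical `Rec` class the same field layout as the custom key (a UTF string followed by two ints) and uses only `java.io` to serialize and deserialize it. Two details carry over to a real Writable. The read order must match the write order exactly. Deserialization also starts from an instance built with the no-arg constructor, because Hadoop creates key objects reflectively.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Plain-java.io imitation of the Writable contract; Rec is hypothetical.
class WritableRoundTripSketch {

    static final class Rec {
        String name;
        int age;
        int salary;

        void write(DataOutput out) throws IOException {
            out.writeUTF(name);   // 2-byte length prefix + modified UTF-8 bytes
            out.writeInt(age);    // 4 bytes
            out.writeInt(salary); // 4 bytes
        }

        void readFields(DataInput in) throws IOException {
            // Same order as write(): name, age, salary
            name = in.readUTF();
            age = in.readInt();
            salary = in.readInt();
        }
    }

    static byte[] serialize(Rec r) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            r.write(out);
        } catch (IOException e) {
            throw new UncheckedIOException(e); // not expected for in-memory streams
        }
        return bytes.toByteArray();
    }

    static Rec deserialize(byte[] data) {
        Rec r = new Rec(); // empty instance first, then filled in by readFields()
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            r.readFields(in);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return r;
    }

    public static void main(String[] args) {
        Rec original = new Rec();
        original.name = "nancy";
        original.age = 22;
        original.salary = 8000;
        byte[] data = serialize(original);
        Rec copy = deserialize(data);
        // prints: 15 bytes -> nancy 22 8000
        System.out.println(data.length + " bytes -> " + copy.name + " " + copy.age + " " + copy.salary);
    }
}
```

Swapping the two `readInt()` calls would still compile and run, but it would silently exchange age and salary. That is why the order rule matters.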

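Custom key class:

```java
import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;
import java.util.Objects;

public class Person implements WritableComparable<Person> {

    private String name;
    private int age;
    private int salary;

    // Hadoop creates key instances via reflection, so a public no-arg constructor is required
    public Person() {
    }

    public Person(String name, int age, int salary) {
        this.name = name;
        this.age = age;
        this.salary = salary;
    }

    public String getName() {
        return name;
    }

    public void setName(String name) {
        this.name = name;
    }

    public int getAge() {
        return age;
    }

    public void setAge(int age) {
        this.age = age;
    }

    public int getSalary() {
        return salary;
    }

    public void setSalary(int salary) {
        this.salary = salary;
    }

    @Override
    public String toString() {
        return this.salary + " " + this.age + " " + this.name;
    }

    // Compare salary first (higher salary first); if equal, the younger person comes first
    @Override
    public int compareTo(Person o) {
        // Integer.compare avoids the int overflow that "this.salary - o.salary" can cause
        int bySalary = Integer.compare(o.salary, this.salary); // arguments swapped => descending
        if (bySalary != 0) {
            return bySalary;
        }
        return Integer.compare(this.age, o.age);               // ascending
    }

    // Kept consistent with compareTo(): equal salary and age means equal keys
    @Override
    public boolean equals(Object obj) {
        if (this == obj) {
            return true;
        }
        if (!(obj instanceof Person)) {
            return false;
        }
        Person other = (Person) obj;
        return salary == other.salary && age == other.age;
    }

    // The default HashPartitioner picks a reducer from hashCode(); the identity hash
    // could send equal keys to different reducers when numReduceTasks > 1
    @Override
    public int hashCode() {
        return Objects.hash(age, salary);
    }

    // Serialization: write this key's fields to the output stream as binary
    @Override
    public void write(DataOutput dataOutput) throws IOException {
        dataOutput.writeUTF(name);
        dataOutput.writeInt(age);
        dataOutput.writeInt(salary);
    }

    // Deserialization: read the fields in exactly the order write() wrote them
    @Override
    public void readFields(DataInput dataInput) throws IOException {
        this.name = dataInput.readUTF();
        this.age = dataInput.readInt();
        this.salary = dataInput.readInt();
    }
}
```

MapReduce program: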

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;
import java.net.URI;

public class SecondarySort {

    public static void main(String[] args) throws Exception {
        System.setProperty("HADOOP_USER_NAME", "hadoop2.7");
        Configuration configuration = new Configuration();
        // Jar of this MapReduce program, needed when submitting from a local IDE
        configuration.set("mapreduce.job.jar", "C:\\Users\\tanglei1\\IdeaProjects\\Hadooptang\\target\\com.kaikeba.hadoop-1.0-SNAPSHOT.jar");

        Job job = Job.getInstance(configuration, SecondarySort.class.getSimpleName());

        // Delete the output directory if it already exists; otherwise the job fails
        FileSystem fileSystem = FileSystem.get(URI.create(args[1]), configuration);
        if (fileSystem.exists(new Path(args[1]))) {
            fileSystem.delete(new Path(args[1]), true);
        }

        FileInputFormat.setInputPaths(job, new Path(args[0]));
        job.setMapperClass(MyMap.class);
        job.setMapOutputKeyClass(Person.class);
        job.setMapOutputValueClass(NullWritable.class);

        // Number of reduce tasks: a single reducer yields one globally sorted output file
        job.setNumReduceTasks(1);
        job.setReducerClass(MyReduce.class);
        job.setOutputKeyClass(Person.class);
        job.setOutputValueClass(NullWritable.class);
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }

    // LongWritable: input key type; Text: input value type
    // Person: output key type; NullWritable: output value type
    public static class MyMap extends Mapper<LongWritable, Text, Person, NullWritable> {

        // The map output is a <K, V> pair; NullWritable means we don't care about V
        @Override
        protected void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            // Hadoop reads text input line by line: key is the line's byte offset
            // from the start of the file, value is the text of that line
            String line = value.toString().trim();
            if (line.isEmpty()) {
                return; // skip blank lines instead of failing on fields[1]
            }
            // A line in this example looks like: nancy 22 8000
            String[] fields = line.split("\\s+");
            String name = fields[0];
            // Convert the numeric fields from String to int
            int age = Integer.parseInt(fields[1]);
            int salary = Integer.parseInt(fields[2]);
            // Ordering is applied during the shuffle, using Person.compareTo()
            context.write(new Person(name, age, salary), NullWritable.get());
        }
    }

    public static class MyReduce extends Reducer<Person, NullWritable, Person, NullWritable> {

        @Override
        protected void reduce(Person key, Iterable<NullWritable> values, Context context)
                throws IOException, InterruptedException {
            // Keys that compare as equal (same salary and age) arrive as one group;
            // writing once per value keeps every record instead of only the first
            for (NullWritable value : values) {
                context.write(key, value);
            }
        }
    }
}
```

Output:

```text
40000 30 socrates
29000 39 white
11000 19 green
10000 19 stone
9000 22 ketty
8000 20 tom
8000 22 nancy
```
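What happens after the sort can also be modeled in plain Java, and the model shows why the reducer's output depends on how it handles each group. Grouping uses the same comparison as sorting. Two records with equal salary and age therefore form a single reduce group even when the names differ. A hypothetical `jerry 20 8000` line is added here to create such a tie. A reducer that writes the key once per group emits only one of the tied records, and one that writes once per value keeps both. In Hadoop the key object is refreshed as the values are iterated, which is what lets the per-value variant emit each record. The order of tied records within a group is not guaranteed in Hadoop, whereas this sketch uses a stable sort. All names below are hypothetical.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// In-memory model of "sort, group keys that compare equal, reduce". Not Hadoop code.
class GroupingSketch {

    static final class Emp {
        final String name;
        final int age;
        final int salary;

        Emp(String name, int age, int salary) {
            this.name = name;
            this.age = age;
            this.salary = salary;
        }

        @Override
        public String toString() {
            return salary + " " + age + " " + name;
        }
    }

    // Salary descending, then age ascending; the name is deliberately ignored.
    static final Comparator<Emp> ORDER = (a, b) -> {
        int bySalary = Integer.compare(b.salary, a.salary);
        return bySalary != 0 ? bySalary : Integer.compare(a.age, b.age);
    };

    // Like the mapper: split on whitespace and skip blank lines.
    static List<Emp> parse(List<String> lines) {
        List<Emp> out = new ArrayList<>();
        for (String raw : lines) {
            String line = raw.trim();
            if (line.isEmpty()) {
                continue;
            }
            String[] f = line.split("\\s+");
            out.add(new Emp(f[0], Integer.parseInt(f[1]), Integer.parseInt(f[2])));
        }
        return out;
    }

    // Splits a sorted list into runs of adjacent records for which ORDER returns 0.
    static List<List<Emp>> group(List<Emp> sorted) {
        List<List<Emp>> groups = new ArrayList<>();
        for (Emp e : sorted) {
            if (!groups.isEmpty()) {
                List<Emp> last = groups.get(groups.size() - 1);
                if (ORDER.compare(last.get(0), e) == 0) {
                    last.add(e);
                    continue;
                }
            }
            List<Emp> fresh = new ArrayList<>();
            fresh.add(e);
            groups.add(fresh);
        }
        return groups;
    }

    // Reducer that emits one line per group: tied records collapse into one.
    static List<String> oncePerGroup(List<List<Emp>> groups) {
        List<String> out = new ArrayList<>();
        for (List<Emp> g : groups) {
            out.add(g.get(0).toString());
        }
        return out;
    }

    // Reducer that emits one line per value: every input record survives.
    static List<String> oncePerValue(List<List<Emp>> groups) {
        List<String> out = new ArrayList<>();
        for (List<Emp> g : groups) {
            for (Emp e : g) {
                out.add(e.toString());
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // "jerry" is a hypothetical extra record that ties with tom on salary and age.
        List<Emp> records = parse(List.of("tom 20 8000", "", "nancy 22 8000", "jerry 20 8000"));
        records.sort(ORDER); // List.sort is stable, so tom stays ahead of jerry
        List<List<Emp>> groups = group(records);
        System.out.println(oncePerGroup(groups)); // [8000 20 tom, 8000 22 nancy]
        System.out.println(oncePerValue(groups)); // [8000 20 tom, 8000 20 jerry, 8000 22 nancy]
    }
}
```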