Deployment environment:

CentOS 6.4 64-bit

Hadoop 2.7.1, MySQL 5.5, Hive 1.2.1, Scala 2.11.7, Spark 1.5.0

JDK 1.7.0_79

Host IPs:

master (NameNode): 10.10.4.115

slave1 (DataNode): 10.10.4.116

slave2 (DataNode): 10.10.4.117

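I. Environment Preparation and Hadoop Deployment:

(1) Prepare the environment (the hosts file must be identical on every node, otherwise errors may occur):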

[root@master ~]#vi /etc/hosts

127.0.0.1 localhost

10.10.4.115 master.hadoop

10.10.4.116 slave1.hadoop

10.10.4.117 slave2.hadoop
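Because the hosts file has to match on all three machines, you can script the entries instead of typing them by hand on each node. The following is a minimal sketch. The function name `add_hosts_entries` and the `HOSTS_FILE` variable are hypothetical. The demo runs against a scratch file rather than the real /etc/hosts.

```bash
#!/bin/bash
# Hypothetical helper: append the cluster host entries to a hosts file,
# skipping any entry that is already present, so it is safe to rerun.
add_hosts_entries() {
  local hosts_file="$1" entry
  for entry in \
    "10.10.4.115 master.hadoop" \
    "10.10.4.116 slave1.hadoop" \
    "10.10.4.117 slave2.hadoop"; do
    # -q quiet, -x match the whole line, -F treat the entry as a fixed string
    grep -qxF "$entry" "$hosts_file" || echo "$entry" >> "$hosts_file"
  done
}

# Demo against a scratch file; on a real node pass /etc/hosts (as root).
HOSTS_FILE=$(mktemp)
echo "127.0.0.1 localhost" > "$HOSTS_FILE"
add_hosts_entries "$HOSTS_FILE"
add_hosts_entries "$HOSTS_FILE"   # second run changes nothing
cat "$HOSTS_FILE"
```

The check is an exact whole-line match. An existing entry written with different spacing (for example a tab) is therefore not recognized and would be added a second time.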

(2) Set the hostname (on the slaves use slave1.hadoop and slave2.hadoop respectively):

[root@master ~]#vi /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=master.hadoop

(3) Make the new hostname take effect immediately:

Either reboot the system,

or run:

[root@master ~]#hostname master.hadoop

or:

[root@master ~]#echo master.hadoop > /proc/sys/kernel/hostname

(4) Stop the firewall service:

[root@master ~]#service iptables stop

Keep iptables from starting again after a reboot:

[root@master ~]#chkconfig iptables off

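Confirm that it is disabled:

[root@master ~]#chkconfig --list|grep iptables

iptables 0:off 1:off 2:off 3:off 4:off 5:off 6:off

Disabling iptables with chkconfig may not keep it off permanently: after a reboot, iptables can be started again at runlevel 3. As a workaround, empty the saved rule file. Then, even if iptables starts, it loads no rules:

[root@master ~]#echo "" > /etc/sysconfig/iptables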

(5) Disable SELinux:

[root@master ~]#vi /etc/sysconfig/selinux

Set SELINUX=disabled (this takes effect after a reboot).
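You can also make the SELinux change with sed instead of vi. This is a sketch built around a hypothetical `disable_selinux_in` function. One caveat applies: on CentOS 6, /etc/sysconfig/selinux is normally a symlink to /etc/selinux/config. GNU `sed -i` replaces a symlink with a regular file, so on a real host point the function at /etc/selinux/config. The demo uses a scratch file.

```bash
#!/bin/bash
# Hypothetical helper: set SELINUX=disabled in an SELinux config file.
# The pattern is anchored on "SELINUX=", so the SELINUXTYPE= line is untouched.
disable_selinux_in() {
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$1"
}

# Demo on a scratch file shaped like the stock configuration.
CFG=$(mktemp)
printf 'SELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$CFG"
disable_selinux_in "$CFG"
cat "$CFG"
```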

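(6) Uninstall the preinstalled OpenJDK (the Hadoop project recommends the Oracle JDK):

Check whether it is installed:

[root@master ~]#rpm -qa|grep openjdk

Uninstall every OpenJDK package listed above (there is no package named just "openjdk"):

[root@master ~]#rpm -e $(rpm -qa|grep openjdk)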

(7) Download and install Oracle JDK 1.7.0_79:

[root@master ~]#rpm -ivh jdk-7u79-linux-x64.rpm

(8) Set the environment variables:

[root@master ~]#vi /etc/profile

##############JAVA#############################

export JAVA_HOME=/usr/java/jdk1.7.0_79

export CLASSPATH=.:$JAVA_HOME/jre/lib:$JAVA_HOME/lib/tools.jar

export JRE_HOME=$JAVA_HOME/jre

export PATH=/usr/local/mysql/bin:$JAVA_HOME/bin:$PATH

################END############################

(9) Apply the changes:

[root@master ~]#source /etc/profile

(10) Verify:

[root@master ~]#echo $JAVA_HOME

/usr/java/jdk1.7.0_79

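(11) Create the hadoop user (useradd also creates a hadoop group) and set its password interactively:

[root@master ~]#useradd hadoop

[root@master ~]#passwd hadoop

(useradd -p expects an already-encrypted password hash, so useradd hadoop -p mangocity would not set the password to mangocity.)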

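(12) Configure passwordless SSH login from master to the slaves:

Enable key-based login in the SSH daemon configuration. sshd_config only treats lines that start with # as comments, so keep comments on their own lines:

[root@master ~]#vi /etc/ssh/sshd_config

# enable RSA authentication
RSAAuthentication yes

# enable public/private key pair authentication
PubkeyAuthentication yes

# path of the authorized public-key file (created in step 16)
AuthorizedKeysFile .ssh/authorized_keys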

(13) Restart the SSH service so the new settings take effect:

[root@master ~]#service sshd restart

####### Repeat all of the above settings on the slave servers ##########

(14) On master, switch to the hadoop user:

[root@master ~]#su - hadoop

(15) Generate the key pair with an empty passphrase:

[hadoop@master ~]$ssh-keygen -t rsa -P ''

[hadoop@master ~]$more /home/hadoop/.ssh/id_rsa.pub

ssh-rsa AAAB3**************************************************7RbuPw== hadoop@master.hadoop

(16) Append the contents of id_rsa.pub to authorized_keys:

[hadoop@master ~]$cat /home/hadoop/.ssh/id_rsa.pub >> /home/hadoop/.ssh/authorized_keys

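(17) Verify passwordless login locally:

Before testing, make sure /home/hadoop/.ssh/authorized_keys has mode 600, i.e. only the hadoop user can read and write it:

[hadoop@master ~]$ ll ./.ssh/authorized_keys

-rw-------. 1 hadoop hadoop 402 Nov 18 01:53 ./.ssh/authorized_keys

Wrong permissions make passwordless login fail. If the mode is not 600, change it to 600: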

[hadoop@master ~]$chmod 600 ~/.ssh/authorized_keys
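To check the permissions on each node without reading `ll` output by eye, a small script can compare the modes against the values this guide uses. The function name `check_ssh_perms` is hypothetical, and it relies on GNU `stat -c %a` as shipped with CentOS. It prints nothing when both modes are correct.

```bash
#!/bin/bash
# Hypothetical helper: print a message for each path whose mode differs from
# the values used in this guide (700 for .ssh, 600 for authorized_keys).
# Prints nothing when both are correct. Relies on GNU stat (-c %a).
check_ssh_perms() {
  local ssh_dir="$1"
  [ "$(stat -c %a "$ssh_dir")" = "700" ] ||
    echo "bad mode on $ssh_dir (want 700)"
  [ "$(stat -c %a "$ssh_dir/authorized_keys")" = "600" ] ||
    echo "bad mode on $ssh_dir/authorized_keys (want 600)"
}

# Demo in a scratch directory standing in for /home/hadoop.
DEMO=$(mktemp -d)
mkdir "$DEMO/.ssh" && touch "$DEMO/.ssh/authorized_keys"
chmod 700 "$DEMO/.ssh" && chmod 600 "$DEMO/.ssh/authorized_keys"
check_ssh_perms "$DEMO/.ssh"   # prints nothing
```

On a real node, run `check_ssh_perms /home/hadoop/.ssh` as the hadoop user.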

(18) Test:

[hadoop@master ~]$ssh 127.0.0.1

The authenticity of host '127.0.0.1 (127.0.0.1)' can't be established.

RSA key fingerprint is 31:45:00:ff:a7:80:23:f2:2a:86:7f:13:0d:bf:f0:d6.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '127.0.0.1' (RSA) to the list of known hosts.

hadoop@127.0.0.1's password: (the first login asks for a password)

[hadoop@master ~]$exit

[hadoop@master ~]$ssh 127.0.0.1

Last login: Mon Nov 23 09:52:02 2015 from localhost (the second login does not ask for a password, so local passwordless login works)

[hadoop@master ~]$

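(19) Set up passwordless SSH on the slave servers:

At this point the hadoop user's home directory on each slave has no .ssh directory and no authorized_keys file, so create them manually:

[root@slave1 ~]#su - hadoop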

[hadoop@slave1 ~]$ls -a

. .. .bash_history .bash_logout .bash_profile .bashrc .gnome2 .viminfo

(20) Create the directory and set its permissions:

[hadoop@slave1 ~]$mkdir ./.ssh

[hadoop@slave1 ~]$chmod 700 ./.ssh

(21) Create the file and set its permissions:

[hadoop@slave1 ~]$touch ./.ssh/authorized_keys

[hadoop@slave1 ~]$chmod 600 ./.ssh/authorized_keys

[hadoop@slave1 ~]$ls -a

. .. .bash_history .bash_logout .bash_profile .bashrc .gnome2 .ssh .viminfo

(22) Copy the public key id_rsa.pub, generated by the hadoop user on master, into the hadoop user's home directory on the slave:

[hadoop@slave1 ~]$scp hadoop@master.hadoop:/home/hadoop/.ssh/id_rsa.pub /home/hadoop/

(23) Append the contents of master's id_rsa.pub to authorized_keys:

[hadoop@slave1 ~]$cat /home/hadoop/id_rsa.pub >> /home/hadoop/.ssh/authorized_keys
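Steps (20) to (23) can be combined into one idempotent helper. It creates .ssh and authorized_keys with the modes used above and appends the key only if that exact line is not already present, so rerunning it does not duplicate keys. The function name `append_pubkey` is hypothetical, and the demo uses a fake key in a scratch directory.

```bash
#!/bin/bash
# Hypothetical helper: make sure <ssh_dir>/authorized_keys exists with the
# modes used above and contains the key from <pubkey_file> exactly once.
append_pubkey() {
  local pubkey_file="$1" ssh_dir="$2" key
  mkdir -p "$ssh_dir" && chmod 700 "$ssh_dir"
  touch "$ssh_dir/authorized_keys" && chmod 600 "$ssh_dir/authorized_keys"
  key=$(cat "$pubkey_file")
  grep -qxF "$key" "$ssh_dir/authorized_keys" ||
    echo "$key" >> "$ssh_dir/authorized_keys"
}

# Demo with a fake key in a scratch home directory.
DEMO=$(mktemp -d)
echo "ssh-rsa AAAAexamplekey hadoop@master.hadoop" > "$DEMO/id_rsa.pub"
append_pubkey "$DEMO/id_rsa.pub" "$DEMO/.ssh"
append_pubkey "$DEMO/id_rsa.pub" "$DEMO/.ssh"   # no duplicate is added
```

On a slave, as the hadoop user, this would be `append_pubkey /home/hadoop/id_rsa.pub /home/hadoop/.ssh`. Where the openssh-clients package provides it, running `ssh-copy-id hadoop@slave1.hadoop` on master as the hadoop user achieves the same result in one step.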

Repeat these steps on slave2, including the /etc/ssh/sshd_config change and the sshd restart.

(24) Check that master can log in to the slaves without a password:

[hadoop@master ~]$ssh slave1.hadoop

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '127.0.0.1' (RSA) to the list of known hosts.

hadoop@slave1.hadoop's password: (the first login asks for a password)

[hadoop@slave1 ~]$exit

logout

Connection to slave1.hadoop closed.

[hadoop@master ~]$ ssh slave1.hadoop

Last login: Mon Nov 23 19:25:05 2015 from master.hadoop (the second login does not ask for a password, and the shell is now the hadoop user on slave1, so passwordless login works)

[hadoop@slave1 ~]$

Verifying passwordless login from master to slave2 works the same way as for slave1.

Deploy Hadoop 2.7.1 on master:

(1) Download and extract the Hadoop tarball:

[root@master ~]#tar -xvf hadoop-2.7.1.tar.gz

[root@master ~]#cp -Rv ./hadoop-2.7.1 /usr/local/hadoop/

(2) Create the directories Hadoop will use under /usr/local/hadoop:

[root@master ~]#mkdir -p /usr/local/hadoop/tmp /usr/local/hadoop/dfs/data /usr/local/hadoop/dfs/name

[root@master ~]#chown -R hadoop:hadoop /usr/local/hadoop/

(3) Set the environment variables (identical on master and the slaves):

[root@master ~]#vi /etc/profile

##############Hadoop###########################

export HADOOP_HOME=/usr/local/hadoop

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib/native"

export PATH=/usr/local/mysql/bin:$HADOOP_HOME/bin:$PATH

################END############################

(4) Apply the changes:

[root@master ~]#source /etc/profile

(5) Switch to the hadoop user and edit the Hadoop configuration files in $HADOOP_HOME/etc/hadoop/. The main ones are the site files core-site.xml, hdfs-site.xml, mapred-site.xml and yarn-site.xml:

[root@master ~]#su - hadoop

[hadoop@master ~]$ cd /usr/local/hadoop/etc/hadoop/

(6) Edit the env files: append the JAVA_HOME variable to the end of hadoop-env.sh, mapred-env.sh and yarn-env.sh:

[hadoop@master hadoop]$vi hadoop-env.sh

export JAVA_HOME=/usr/java/jdk1.7.0_79 ###add this line at the end of the file; edit the other two files the same way
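Rather than opening the three files one by one, you could append the line in a loop. This sketch uses a hypothetical `add_java_home` function that skips any file already containing the exact line. The demo runs on empty stand-in files in a scratch directory.

```bash
#!/bin/bash
# Line to add, as in step (6).
JAVA_LINE='export JAVA_HOME=/usr/java/jdk1.7.0_79'

# Hypothetical helper: append JAVA_LINE to the three env scripts in a Hadoop
# configuration directory, skipping files that already contain that line.
add_java_home() {
  local conf_dir="$1" f
  for f in hadoop-env.sh mapred-env.sh yarn-env.sh; do
    grep -qxF "$JAVA_LINE" "$conf_dir/$f" ||
      echo "$JAVA_LINE" >> "$conf_dir/$f"
  done
}

# Demo on empty stand-in files; on master pass /usr/local/hadoop/etc/hadoop.
CONF=$(mktemp -d)
touch "$CONF/hadoop-env.sh" "$CONF/mapred-env.sh" "$CONF/yarn-env.sh"
add_java_home "$CONF"
add_java_home "$CONF"   # rerunning adds nothing
```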

Then edit the site files as follows:

(7)core-site.xml:

[hadoop@master hadoop]$more core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://master.hadoop:9000</value> <!-- use the hostname here, because Hive is integrated later -->

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/hadoop/tmp</value>

<description>A base for other temporary directories.</description>

</property>

</configuration>
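Hand-edited site files can easily end up with an unclosed tag. One option is to generate them from name/value pairs. This is a sketch around a hypothetical `hadoop_property` function. It reproduces the core-site.xml above without the description element and inserts values verbatim, so values must not contain XML special characters such as `&` or `<`.

```bash
#!/bin/bash
# Hypothetical helper: print one Hadoop <property> element. Values are
# inserted verbatim, so they must not contain XML special characters.
hadoop_property() {
  printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
}

# Write a core-site.xml equivalent to the one above (description omitted).
OUT=$(mktemp)
{
  echo '<configuration>'
  hadoop_property fs.defaultFS hdfs://master.hadoop:9000
  hadoop_property hadoop.tmp.dir file:/usr/local/hadoop/tmp
  echo '</configuration>'
} > "$OUT"
cat "$OUT"
```

If libxml2 is installed, `xmllint --noout core-site.xml` reports mismatched or missing tags in any site file, whether generated or hand-edited.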

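(8) mapred-site.xml (Hadoop 2.7.1 ships only mapred-site.xml.template, so first run cp mapred-site.xml.template mapred-site.xml):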

[hadoop@master hadoop]$more mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

(9)hdfs-site.xml:

[hadoop@master hadoop]$more hdfs-site.xml

<configuration>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>10.10.4.115:9001</value>

</property>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

</configuration>

(10) yarn-site.xml:

[hadoop@master hadoop]$more yarn-site.xml

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>10.10.4.115</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>10.10.4.115:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>10.10.4.115:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>10.10.4.115:8035</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>10.10.4.115:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>10.10.4.115:8088</value>

</property>

</configuration>

(11) List the DataNodes in the slaves file (each entry may be an IP address or a hostname):

[hadoop@master hadoop]$vi slaves

10.10.4.116

10.10.4.117
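A repeated entry in the slaves file, such as the same IP typed twice, usually means another node was left out. The following hypothetical check, `dup_slaves`, strips comments and blank lines and prints any entry that appears more than once. It is a sketch for spotting typos, not part of Hadoop.

```bash
#!/bin/bash
# Hypothetical check: print entries that appear more than once in a slaves
# file, after removing comments, surrounding whitespace and blank lines.
dup_slaves() {
  sed 's/#.*$//; s/^[[:space:]]*//; s/[[:space:]]*$//; /^$/d' "$1" |
    sort | uniq -d
}

# Demo: the same IP entered twice, once with a trailing comment.
SLAVES=$(mktemp)
printf '10.10.4.116  # IP or hostname\n10.10.4.116\n' > "$SLAVES"
dup_slaves "$SLAVES"   # prints 10.10.4.116
```

On master, run it as `dup_slaves /usr/local/hadoop/etc/hadoop/slaves`. Empty output means every entry is unique.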

(12) Deploy the DataNodes:

Copy the Hadoop directory to slave1 and slave2, then fix its ownership on each slave as root:

[root@master ~]#scp -r /usr/local/hadoop/ root@slave1.hadoop:/usr/local/

[root@slave1 ~]#chown -R hadoop:hadoop /usr/local/hadoop

[root@master ~]#scp -r /usr/local/hadoop/ root@slave2.hadoop:/usr/local/

[root@slave2 ~]#chown -R hadoop:hadoop /usr/local/hadoop

(13) Format the NameNode:

[root@master ~]#su - hadoop

[hadoop@master ~]$cd /usr/local/hadoop

[hadoop@master hadoop]$./bin/hdfs namenode -format

The message "successfully formatted" means the format succeeded.

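(14) Start Hadoop (in Hadoop 2.x, start-all.sh is deprecated in favor of start-dfs.sh plus start-yarn.sh, but it still works):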

[hadoop@master hadoop]$./sbin/start-all.sh

(15) Check the processes on master (NameNode):

[hadoop@master hadoop]$ jps

2697 Jps

7820 SecondaryNameNode

7985 ResourceManager

7614 NameNode

This output shows that the services on the NameNode host are running normally.
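Reading jps output by eye works for one node. A small filter makes the check repeatable on every node. This sketch uses a hypothetical `missing_daemons` function. It compares whole class names (the second jps column), so `SecondaryNameNode` is not mistaken for `NameNode`. The demo feeds it the master output shown above.

```bash
#!/bin/bash
# Hypothetical helper: read jps output on stdin and print "missing: <name>"
# for every expected daemon whose main class name is not listed.
missing_daemons() {
  local names d
  # jps prints "<pid> <MainClass>"; keep only the class names
  names=$(awk '{print $2}')
  for d in "$@"; do
    printf '%s\n' "$names" | grep -qxF "$d" || echo "missing: $d"
  done
}

# Demo with the master output shown above; on a live node use:
#   jps | missing_daemons NameNode SecondaryNameNode ResourceManager
SAMPLE='2697 Jps
7820 SecondaryNameNode
7985 ResourceManager
7614 NameNode'
printf '%s\n' "$SAMPLE" | missing_daemons NameNode SecondaryNameNode ResourceManager
```

On a slave, the expected names from the next step would be `DataNode NodeManager`.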

(16) Check the processes on the slaves (DataNodes):

[hadoop@slave1 ~]$ jps

18342 DataNode

30450 Jps

18458 NodeManager

This output shows that the DataNode services are running normally.

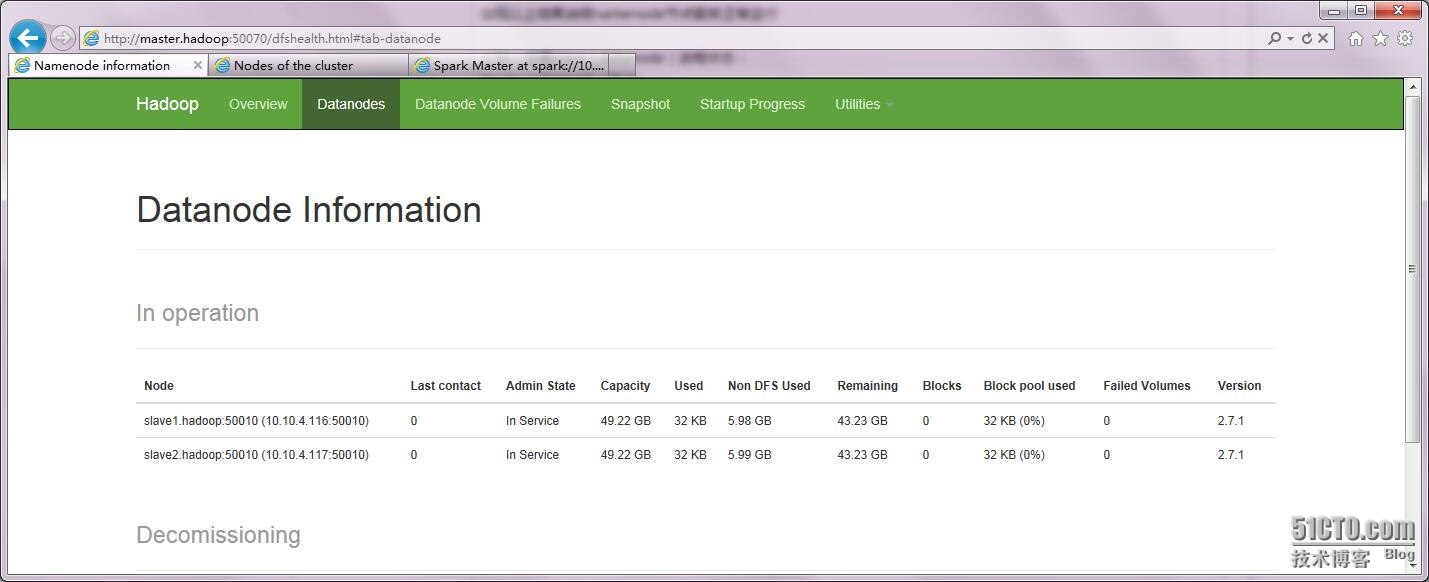

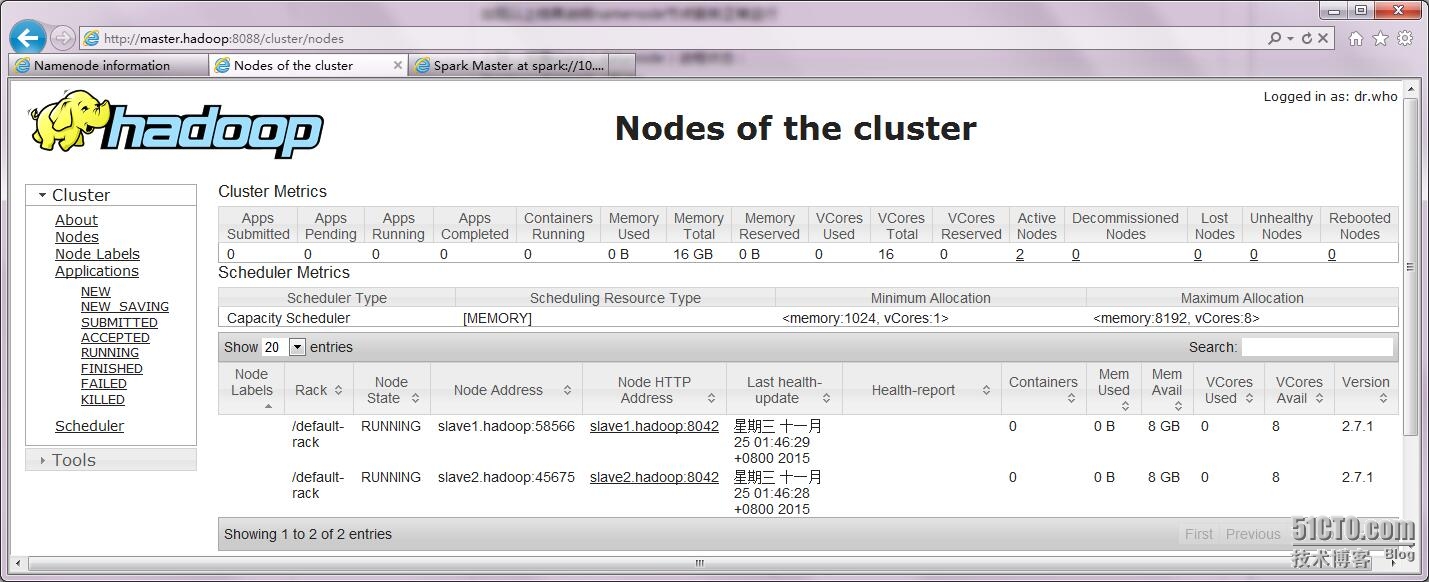

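You can also monitor the cluster status in the web UIs: the HDFS NameNode UI at http://master.hadoop:50070 and the YARN ResourceManager UI at http://master.hadoop:8088 (the port set in yarn-site.xml).

This completes the Hadoop cluster installation. Next comes the Hive integration.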

II. Integrating Hive with Hadoop (Hive is installed on the NameNode):

(1) Install MySQL 5.5 (yum also works; this guide builds from source):

Install the build dependencies:

[root@master ~]#yum -y install gcc gcc-c++ ncurses ncurses-devel cmake

(2) Download the source tarball:

[root@master ~]#wget http://cdn.mysql.com/Downloads/MySQL-5.5/mysql-5.5.46.tar.gz

(3) Add the mysql user:

[root@master ~]#useradd -M -s /sbin/nologin mysql

(4) Configure the build:

[root@master ~]#tar -xzvf mysql-5.5.46.tar.gz

[root@master ~]#mkdir -p /data/mysql

[root@master ~]#cd mysql-5.5.46

Starting with MySQL 5.5, the build is configured with cmake instead of configure. Keep the command on consecutive lines. Every line except the last ends with a \ continuation, and nothing (not even a comment) may follow the backslash:

[root@master mysql-5.5.46]#cmake . -DCMAKE_INSTALL_PREFIX=/usr/local/mysql \
-DMYSQL_DATADIR=/data/mysql \
-DSYSCONFDIR=/etc \
-DWITH_INNOBASE_STORAGE_ENGINE=1 \
-DWITH_PARTITION_STORAGE_ENGINE=1 \
-DWITH_FEDERATED_STORAGE_ENGINE=1 \
-DWITH_BLACKHOLE_STORAGE_ENGINE=1 \
-DWITH_MYISAM_STORAGE_ENGINE=1 \
-DENABLED_LOCAL_INFILE=1 \
-DENABLE_DTRACE=0 \
-DDEFAULT_CHARSET=utf8mb4 \
-DDEFAULT_COLLATION=utf8mb4_general_ci \
-DWITH_EMBEDDED_SERVER=1

(5) Build and install:

[root@master mysql-5.5.46]#make

[root@master mysql-5.5.46]#make install

(6) Install the init script and enable MySQL at boot:

[root@master mysql-5.5.46]#cp /usr/local/mysql/support-files/mysql.server /etc/init.d/mysqld

[root@master mysql-5.5.46]#chmod +x /etc/init.d/mysqld

[root@master mysql-5.5.46]#chkconfig --add mysqld

[root@master mysql-5.5.46]#chkconfig mysqld on

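(7) Initialize the data directory and start MySQL (MySQL 5.5 uses the mysql_install_db script; mysqld --initialize-insecure only exists in MySQL 5.7 and later):

[root@master mysql-5.5.46]#chown -R mysql:mysql /data/mysql

[root@master mysql-5.5.46]#/usr/local/mysql/scripts/mysql_install_db --user=mysql --basedir=/usr/local/mysql --datadir=/data/mysql

[root@master mysql-5.5.46]#service mysqld start

(8) Set the root password, then log in:

[root@master mysql-5.5.46]#mysqladmin -uroot password 'your_root_password' #placeholder: choose your own; root has no password right after initialization

[root@master mysql-5.5.46]#mysql -uroot -p #enter the password you just set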

(9) Create the hive database:

mysql>create database hive;

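(10) Create the hive account and grant it access:

mysql>grant all privileges on *.* to 'hive'@'%' identified by '123456' with grant option; #set the user name, password and privileges

mysql>flush privileges;

mysql>alter database hive character set latin1; #change the character set; latin1 avoids index-length errors when Hive creates its metastore tables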

mysql>flush privileges;

mysql>exit

(11) Test the hive account (use the password from the GRANT above):

[root@master mysql-5.5.46]#mysql -uhive -p123456

mysql>

The login succeeded.

Install Hive:

(1) Set the global environment variables (add them at the top of /etc/profile):

[root@master ~]#vi /etc/profile

#############Hive##############################

export HIVE_HOME=/usr/local/hive-1.2.1

export HIVE_CONF_DIR=$HIVE_HOME/conf

export HIVE_LIB=$HIVE_HOME/lib

export CLASSPATH=$CLASSPATH:$HIVE_LIB

export PATH=$HIVE_HOME/bin/:$PATH

###################END#########################

(2) Apply the changes:

[root@master ~]#source /etc/profile

(3) Download and install Hive:

[root@master ~]#tar -xzvf apache-hive-1.2.1-bin.tar.gz

[root@master ~]#mv ./apache-hive-1.2.1-bin /usr/local/hive-1.2.1

(4) Change the owner of the Hive directory:

[root@master ~]#chown -R hadoop:hadoop /usr/local/hive-1.2.1

[root@master ~]#su - hadoop

[hadoop@master ~]$cd /usr/local/hive-1.2.1/

(5) Copy the downloaded mysql-connector-java-5.1.37-bin.jar into Hive's lib directory:

[hadoop@master hive-1.2.1]$cp /home/hadoop/mysql-connector-java-5.1.37-bin.jar /usr/local/hive-1.2.1/lib/

(6) Copy jline-2.12.jar from $HIVE_HOME/lib into the matching Hadoop directory, replacing the older jline-0.9.94.jar. Use find to locate it:

[hadoop@master hive-1.2.1]$find /usr/local/hadoop -name 'jline*.jar'

[hadoop@master hive-1.2.1]$mv /usr/local/hadoop/share/hadoop/yarn/lib/jline-0.9.94.jar /usr/local/hadoop/share/hadoop/yarn/lib/jline-0.9.94.jar.bak #back up the old jar

[hadoop@master hive-1.2.1]$cp /usr/local/hive-1.2.1/lib/jline-2.12.jar /usr/local/hadoop/share/hadoop/yarn/lib/
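The find, backup and copy steps can be folded into one loop that handles every old jline jar Hadoop ships, wherever find locates it. The function name `swap_jline` is hypothetical. The demo builds a scratch tree that mirrors the layout above instead of touching /usr/local.

```bash
#!/bin/bash
# Hypothetical helper: rename every old jline-0.9*.jar under a Hadoop tree to
# *.bak and copy Hive's newer jline jar into the same directory.
swap_jline() {
  local hadoop_home="$1" new_jar="$2" old
  find "$hadoop_home" -name 'jline-0.9*.jar' | while read -r old; do
    mv "$old" "$old.bak"                  # keep a backup of the old jar
    cp "$new_jar" "$(dirname "$old")/"    # put the newer jar beside it
  done
}

# Demo on a scratch tree mirroring the layout in step (6).
DEMO=$(mktemp -d)
mkdir -p "$DEMO/hadoop/share/hadoop/yarn/lib" "$DEMO/hive/lib"
touch "$DEMO/hadoop/share/hadoop/yarn/lib/jline-0.9.94.jar" "$DEMO/hive/lib/jline-2.12.jar"
swap_jline "$DEMO/hadoop" "$DEMO/hive/lib/jline-2.12.jar"
find "$DEMO/hadoop" -name 'jline*'
```

On master this would be `swap_jline /usr/local/hadoop /usr/local/hive-1.2.1/lib/jline-2.12.jar`, run as the hadoop user.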

(7) Set Hive's environment variables:

Append the following to the end of $HIVE_HOME/bin/hive-config.sh:

[hadoop@master hive-1.2.1]$vi /usr/local/hive-1.2.1/bin/hive-config.sh

export JAVA_HOME=/usr/java/jdk1.7.0_79

export HADOOP_HOME=/usr/local/hadoop

export HIVE_HOME=/usr/local/hive-1.2.1

(8) Add the Hive variables to Hadoop's environment:

Append the following to the end of $HADOOP_HOME/etc/hadoop/hadoop-env.sh:

[hadoop@master hive-1.2.1]$vi /usr/local/hadoop/etc/hadoop/hadoop-env.sh

export HIVE_HOME=/usr/local/hive-1.2.1

export HIVE_CONF_DIR=/usr/local/hive-1.2.1/conf

(9) Configure Hive's connection to the MySQL metastore database:

[hadoop@master hive-1.2.1]$vi /usr/local/hive-1.2.1/conf/hive-site.xml

<configuration>

<property>

<name>hive.metastore.local</name> <!-- local or remote metastore; local here. Hive 1.2.1 no longer recognizes this property and only logs a warning -->

<value>true</value>

</property>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://127.0.0.1:3306/hive?createDatabaseIfNotExist=true</value> <!-- JDBC connection URL -->

<description>JDBC connect string for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>hive</value>

<description>username to use against metastore database</description> <!-- MySQL account used for the connection -->

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

<description>password to use against metastore database</description> <!-- password of that MySQL account -->

</property>

</configuration>
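A common failure at this stage is a mismatch between the credentials in hive-site.xml and the MySQL account created earlier. The hypothetical `get_conf_value` function below prints the value that follows a given property name, so you can test the same credentials with the mysql client. It assumes the one-element-per-line layout used in this guide and is not a real XML parser.

```bash
#!/bin/bash
# Hypothetical helper: print the <value> that follows a given <name> in a
# Hadoop/Hive style XML file. Assumes one element per line, as in this guide.
get_conf_value() {
  local file="$1" name="$2"
  awk -v n="<name>$name</name>" '
    index($0, n) { found = 1; next }
    found && /<value>/ {
      sub(/.*<value>/, "")
      sub(/<\/value>.*/, "")
      print
      exit
    }' "$file"
}

# Demo on a trimmed copy of hive-site.xml.
SITE=$(mktemp)
cat > "$SITE" <<'EOF'
<configuration>
<property>
<name>javax.jdo.option.ConnectionUserName</name>
<value>hive</value>
</property>
<property>
<name>javax.jdo.option.ConnectionPassword</name>
<value>123456</value>
</property>
</configuration>
EOF
user=$(get_conf_value "$SITE" javax.jdo.option.ConnectionUserName)
pass=$(get_conf_value "$SITE" javax.jdo.option.ConnectionPassword)
echo "try: mysql -u$user -p$pass"
```

On master, point it at /usr/local/hive-1.2.1/conf/hive-site.xml. If the suggested mysql command fails, Hive's metastore connection will fail too.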

(10) Start Hive:

[hadoop@master hive-1.2.1]$./bin/hive --service metastore & #start the metastore in the background

[hadoop@master hive-1.2.1]$./bin/hive --service hiveserver2 & #start HiveServer2 in the background (the old "hiveserver" service was removed in Hive 1.0)

(11) Check Hive: jps should show a RunJar process:

[hadoop@master hive-1.2.1]$jps

5640 NameNode

6013 ResourceManager

5847 SecondaryNameNode

6490 RunJar

13978 Jps

(12) Test the integration of Hive, Hadoop and MySQL:

[hadoop@master hive-1.2.1]$ ./bin/hive

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/usr/local/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/usr/local/spark-1.5.0/lib/spark-assembly-1.5.0-hadoop2.6.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/usr/local/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/usr/local/spark-1.5.0/lib/spark-assembly-1.5.0-hadoop2.6.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

15/11/24 10:12:24 WARN conf.HiveConf: HiveConf of name hive.metastore.local does not exist

Logging initialized using configuration in jar:file:/usr/local/hive-1.2.1/lib/hive-common-1.2.1.jar!/hive-log4j.properties

hive> show databases;

OK

default

Time taken: 1.878 seconds, Fetched: 1 row(s)

hive> show tables;

OK

Time taken: 2.025 seconds, Fetched: 1 row(s)

Try creating a table:

hive> create table test(name string, age int);

OK

Time taken: 2.952 seconds

hive> desc test;

OK

name string

age int

Time taken: 0.225 seconds, Fetched: 2 row(s)

hive> exit;

This completes the integration of Hive with the Hadoop cluster. Next comes Spark.

III. Integrating Spark with Hadoop

Install Scala:

(1) Extract the archive and move it to /usr/local/scala-2.11.7:

[root@master ~]#tar -zxvf ./scala-2.11.7.tgz

[root@master ~]#mv ./scala-2.11.7 /usr/local/scala-2.11.7

(2) Give the hadoop user ownership of the directory:

[root@master ~]#chown -R hadoop:hadoop /usr/local/scala-2.11.7

(3) Set the environment variables:

[root@master ~]#vi /etc/profile

Append the following:

#############Scala#############################

export SCALA_HOME=/usr/local/scala-2.11.7

export PATH=$PATH:$SCALA_HOME/bin

####################END########################

(4) Apply the changes:

[root@master ~]#source /etc/profile

(5) Check that Scala is installed and configured:

[hadoop@master ~]$ scala -version

Scala code runner version 2.11.7 -- Copyright 2002-2013, LAMP/EPFL

Install Spark:

Download Spark 1.5.0 from the official site: spark-1.5.0-bin-hadoop2.6.tgz

(1) Extract the archive to /usr/local:

[root@master ~]#tar -zxvf ./spark-1.5.0-bin-hadoop2.6.tgz

[root@master ~]#mv ./spark-1.5.0-bin-hadoop2.6 /usr/local/spark-1.5.0

(2) Give the hadoop user ownership of the directory:

[root@master ~]#chown -R hadoop:hadoop /usr/local/spark-1.5.0

(3) Set the environment variables:

[root@master ~]#vi /etc/profile

Append the following:

############Spark##############################

export SPARK_HOME=/usr/local/spark-1.5.0

export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin

#################END###########################

(4) Apply the changes:

[root@master ~]#source /etc/profile

(5) Check that Spark is installed and configured:

[root@master ~]#spark-shell --version

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 1.5.0

/_/

Type --help for more information.

(6) Edit /usr/local/hadoop/etc/hadoop/hadoop-env.sh and add:

export SPARK_MASTER_IP=10.10.4.115

export SPARK_WORKER_MEMORY=1024m

(7) Configure Spark:

[root@master ~]#su - hadoop

[hadoop@master ~]$cd /usr/local/spark-1.5.0

(8) Copy the template to spark-env.sh and set the environment variables in it:

[hadoop@master spark-1.5.0]$cp ./conf/spark-env.sh.template ./conf/spark-env.sh

[hadoop@master spark-1.5.0]$vi ./conf/spark-env.sh

export HADOOP_CONF_DIR=/usr/local/hadoop/etc/hadoop

export SCALA_HOME=/usr/local/scala-2.11.7

export JAVA_HOME=/usr/java/jdk1.7.0_79

export SPARK_MASTER_IP=10.10.4.115

export SPARK_WORKER_MEMORY=1024m

(9) List the worker nodes:

[hadoop@master spark-1.5.0]$vi ./conf/slaves

# A Spark Worker will be started on each of the machines listed below.

slave1.hadoop

slave2.hadoop

(10) As root, copy Scala, Spark and the related environment settings to the DataNodes, slave1 and slave2:

[root@master ~]#scp -r /usr/local/scala-2.11.7 root@slave1.hadoop:/usr/local/

[root@master ~]#scp -r /usr/local/spark-1.5.0 root@slave1.hadoop:/usr/local/

Log in to slave1 and give the hadoop user ownership of the two copied directories:

[root@slave1 ~]#chown -R hadoop:hadoop /usr/local/scala-2.11.7 /usr/local/spark-1.5.0

Make /etc/profile and /usr/local/hadoop/etc/hadoop/hadoop-env.sh on slave1 match master, then apply the changes.

[root@master ~]#scp -r /usr/local/scala-2.11.7 root@slave2.hadoop:/usr/local/

[root@master ~]#scp -r /usr/local/spark-1.5.0 root@slave2.hadoop:/usr/local/

Log in to slave2 and give the hadoop user ownership of the two copied directories:

[root@slave2 ~]#chown -R hadoop:hadoop /usr/local/scala-2.11.7 /usr/local/spark-1.5.0

Make /etc/profile and /usr/local/hadoop/etc/hadoop/hadoop-env.sh on slave2 match master, then apply the changes.
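The copy-and-chown sequence is the same for every worker, so it can be expressed as a loop over hostnames. This is a sketch only. The `run` and `distribute` functions and the `DRY_RUN` variable are hypothetical. By default the commands are only printed. With `DRY_RUN=0` they run for real, and scp and ssh will prompt for root's password on each slave, because this guide sets up keys only for the hadoop user. Syncing /etc/profile and hadoop-env.sh is still done by hand as described above.

```bash
#!/bin/bash
# Hypothetical wrapper: copy Scala and Spark to each worker and fix ownership.
# With DRY_RUN=1 (the default here) the commands are only printed.
DRY_RUN=${DRY_RUN:-1}

run() {
  if [ "$DRY_RUN" = 1 ]; then echo "$*"; else "$@"; fi
}

distribute() {
  local host
  for host in "$@"; do
    run scp -r /usr/local/scala-2.11.7 /usr/local/spark-1.5.0 "root@$host:/usr/local/"
    run ssh "root@$host" chown -R hadoop:hadoop /usr/local/scala-2.11.7 /usr/local/spark-1.5.0
  done
}

distribute slave1.hadoop slave2.hadoop
```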

(11) Start and test Spark:

[hadoop@master spark-1.5.0]$./sbin/start-all.sh

Running jps on the NameNode should show a Master process:

[hadoop@master spark-1.5.0]$jps

5640 NameNode

6013 ResourceManager

5847 SecondaryNameNode

6897 Master

15164 Jps

6490 RunJar

Running jps on a DataNode should show a Worker process:

[hadoop@slave1 ~]$ jps

3982 Jps

31810 DataNode

32136 Worker

31928 NodeManager

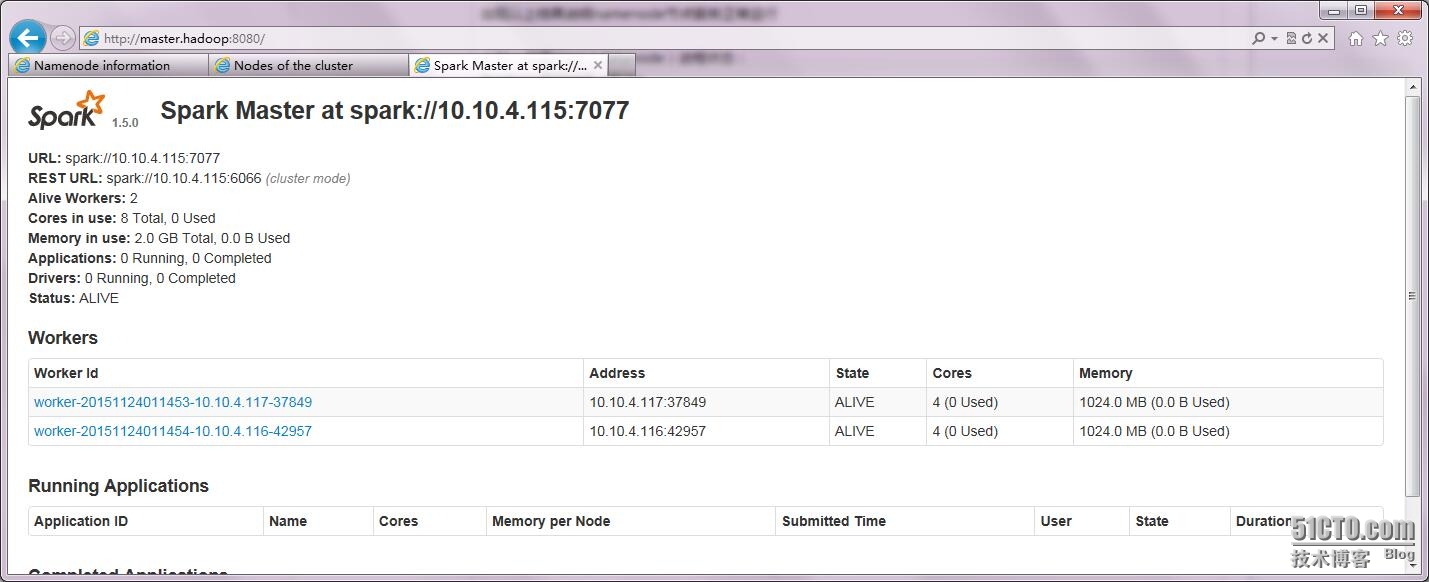

You can also see this information in the Spark master web UI:

http://master.hadoop:8080

This completes the deployment of the Hadoop, Hive and Spark cluster.