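I. Background

After we finished moving the core microservices of the intranet development environment, the two test environments, and the production environment into pods, all with hand-maintained YAML files, the following main pain points emerged: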

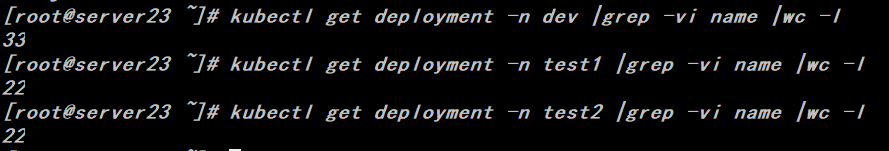

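1. The volume of workload YAML files to maintain is huge, and mistakes are easy to make. (The intranet alone currently has 77 workloads.)

2. Developers frequently ask for changes to workload configuration, for example JVM parameters, additional init containers, liveness and readiness probes, and affinity or anti-affinity rules. This kind of configuration is almost identical from one workload to the next.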

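3. Every namespace carries decoupled configuration such as environment variables, ConfigMaps, RBAC rules, and PV/PVC definitions. If these are not created in advance, workloads created afterwards cannot run properly.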

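As the second phase of converting the platform modules to microservices moves forward, each namespace is expected to gain another 30 to 40 workloads. The number of YAML files to maintain will grow sharply, and manual maintenance is no longer realistic. Using Helm to manage our Kubernetes applications was therefore put on the agenda.

For Helm's configuration file syntax and server-side setup, see the official documentation: https://helm.sh/docs/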

II. Requirements analysis

1. Common configuration

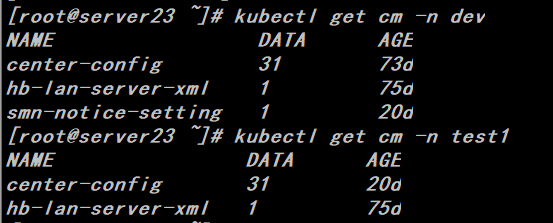

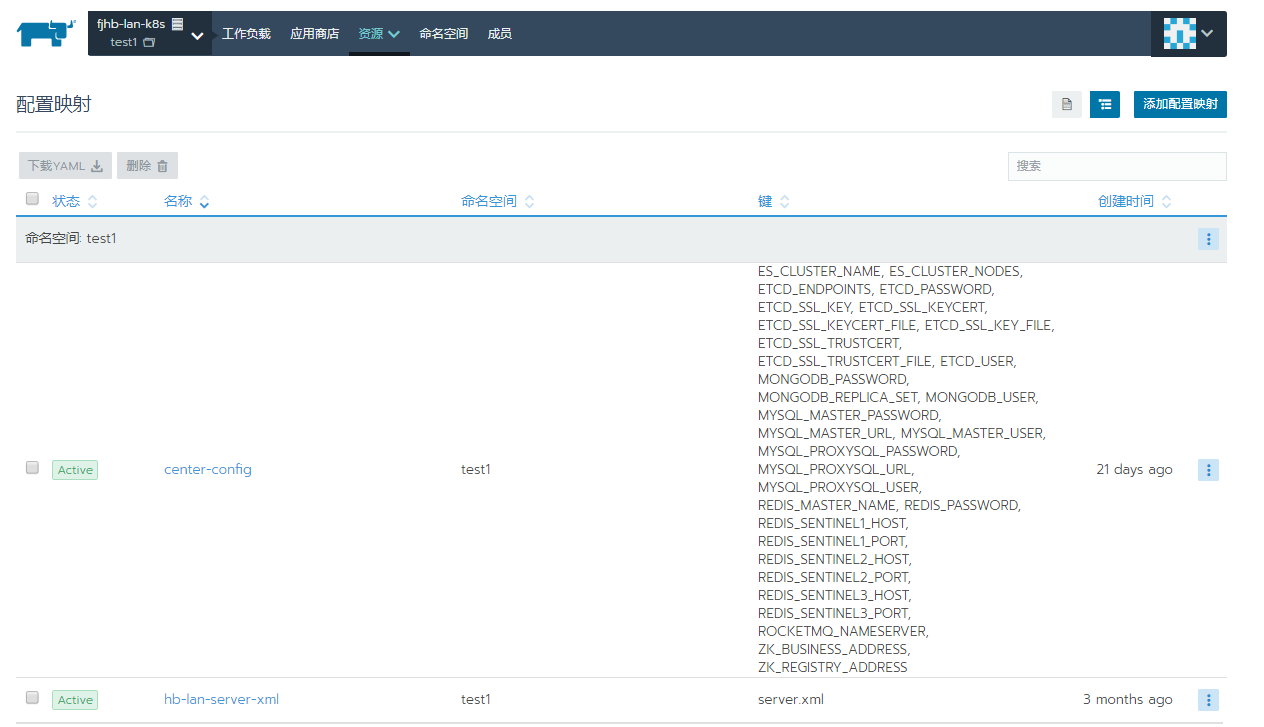

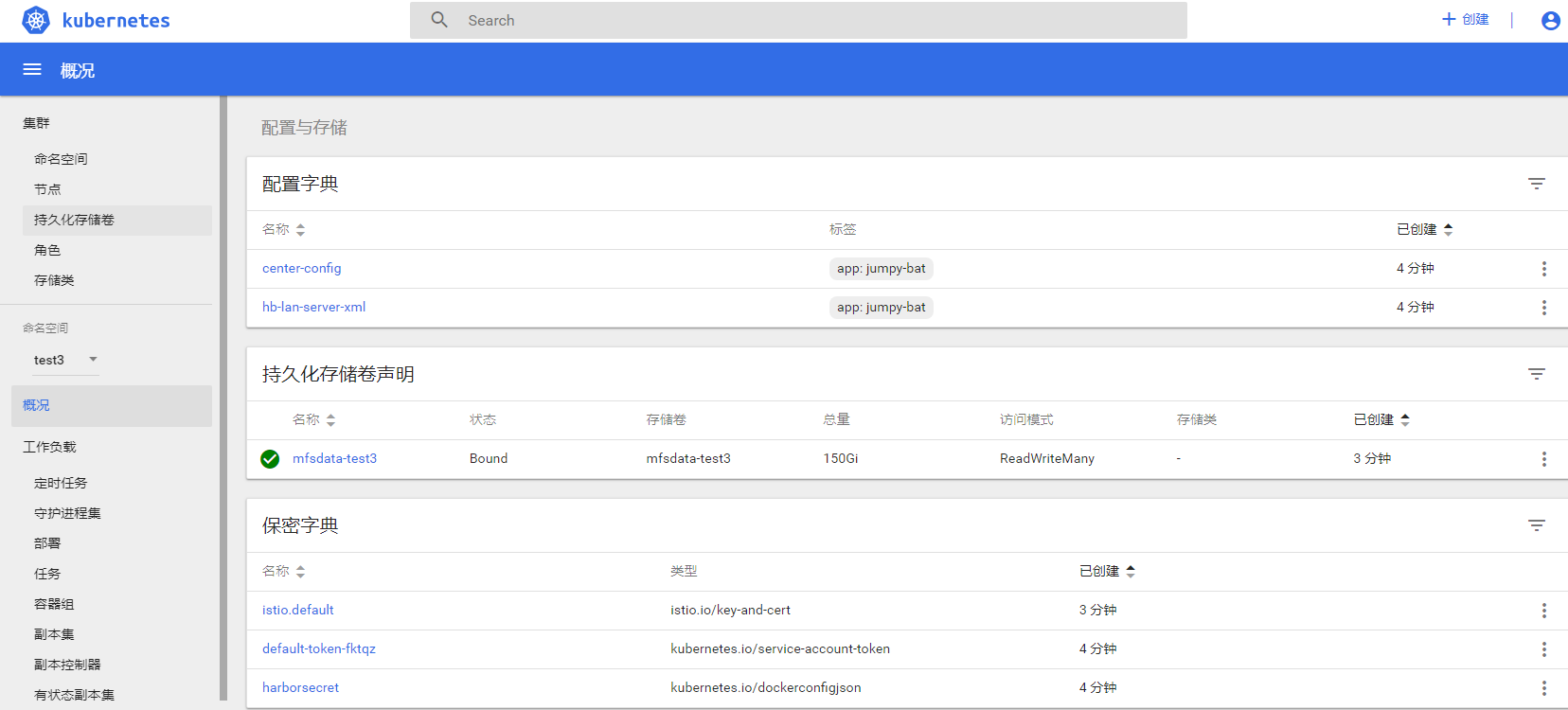

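ConfigMap

Every namespace has at least two ConfigMaps. One of them, center-config, stores the IP addresses, user names and passwords, connection pool settings, and similar information for the shared base services of each environment (MySQL, MongoDB, Redis, and so on). Because this configuration is centralized and decoupled from the code, an image is built once and runs in every environment.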
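To illustrate the "build once, run everywhere" idea, here is a minimal sketch of a container start-up snippet that takes its connection settings only from variables injected from center-config. The names `MYSQL_MASTER_URL` and `MYSQL_MASTER_USER` appear in the ConfigMap shown later in this article; the database name `demo` and the `JDBC_URL` variable are hypothetical and only serve the illustration.

```shell
#!/bin/sh
# Values normally injected by Kubernetes from the center-config ConfigMap.
# The fallbacks are only there so the sketch also runs outside a cluster.
MYSQL_MASTER_URL="${MYSQL_MASTER_URL:-127.0.0.1:3306}"
MYSQL_MASTER_USER="${MYSQL_MASTER_USER:-root}"

# Hypothetical database name, not taken from the article.
DB_NAME="demo"

# The image contains no environment-specific address; it is assembled at start-up.
JDBC_URL="jdbc:mysql://${MYSQL_MASTER_URL}/${DB_NAME}"

echo "connecting as ${MYSQL_MASTER_USER} to ${JDBC_URL}"
```

The same image started in another namespace picks up that namespace's center-config and therefore a different address, without being rebuilt.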

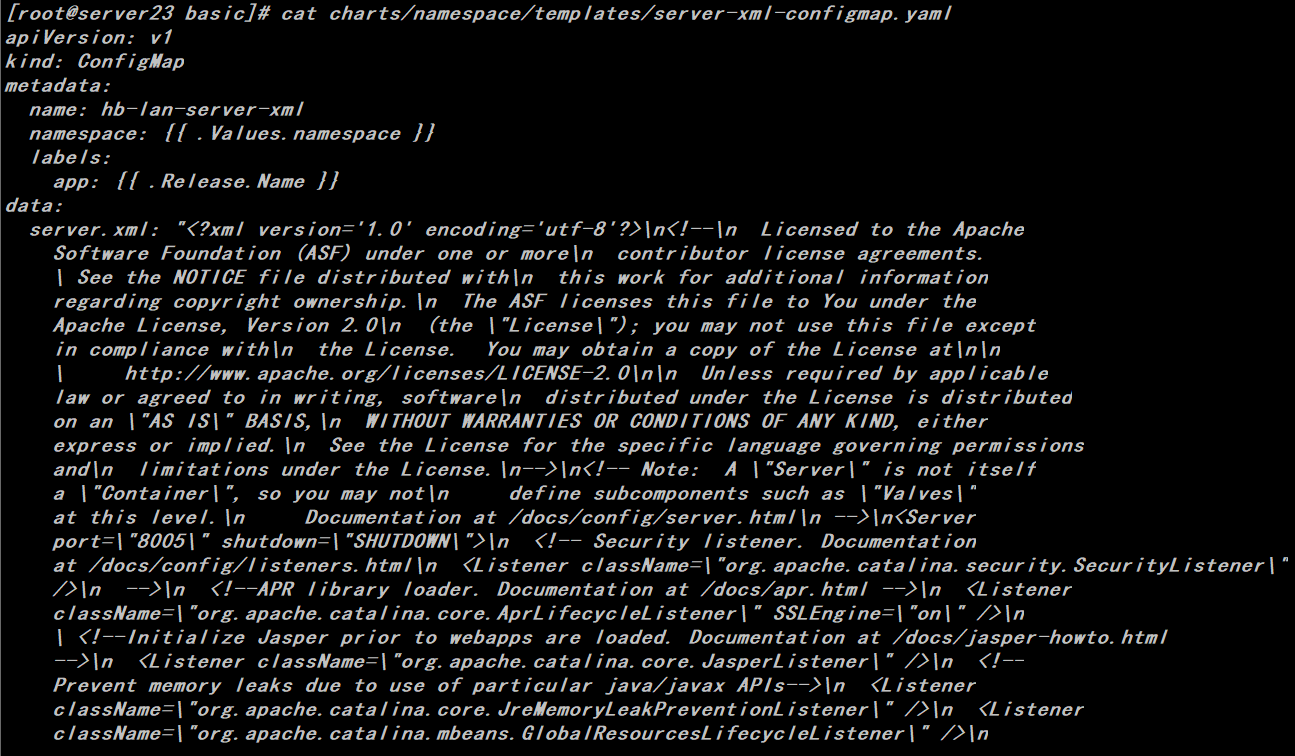

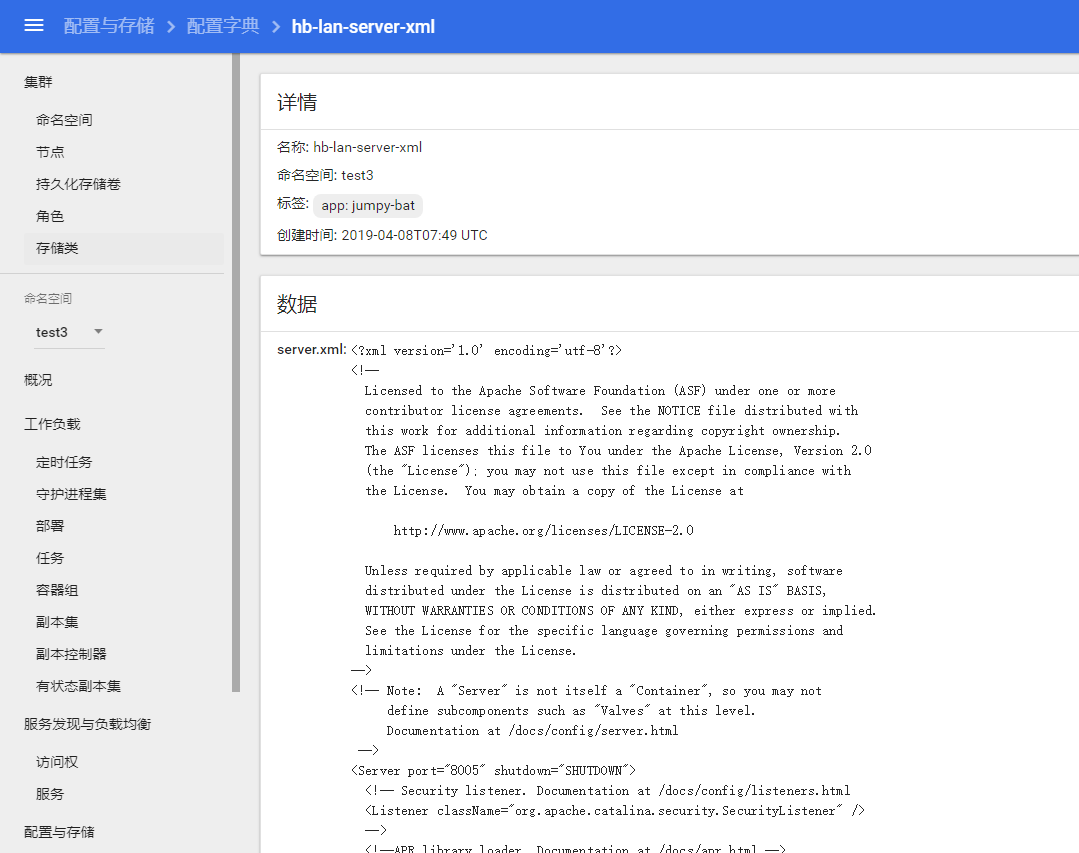

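hb-lan-server-xml is in fact Tomcat's server.xml file. Because the code has long used Redis for login sessions, server.xml has to be modified, so this file is decoupled as well: workloads created later mount it over the server.xml that ships in the image layer.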

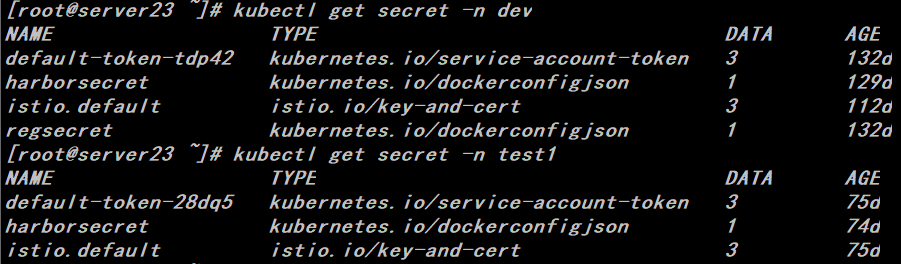

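Secret

Every namespace contains a harborsecret token. As the name suggests, it holds the credentials used to pull images from the Harbor registry; without it, image pulls fail when a workload is created.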
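The value stored in such a Secret is a base64-encoded JSON document. The sketch below shows how that value could be assembled by hand. The registry host, user name, and password are placeholders chosen for this example, not the real credentials.

```shell
#!/bin/sh
# Placeholder credentials (assumptions for this example only).
REGISTRY="harbor.example.com"
REG_USER="demo"
REG_PASS="changeme"

# The inner "auth" field is base64("user:password").
AUTH=$(printf '%s:%s' "$REG_USER" "$REG_PASS" | base64 | tr -d '\n')

# JSON document in the dockerconfigjson layout.
DOCKER_CONFIG_JSON=$(printf '{"auths":{"%s":{"username":"%s","password":"%s","auth":"%s"}}}' \
  "$REGISTRY" "$REG_USER" "$REG_PASS" "$AUTH")

# The Secret's data field holds the base64 encoding of that JSON on a single line.
DOCKERCONFIGJSON_B64=$(printf '%s' "$DOCKER_CONFIG_JSON" | base64 | tr -d '\n')

echo "$DOCKERCONFIGJSON_B64"
```

The printed string is what goes under the `.dockerconfigjson` key of a Secret of type `kubernetes.io/dockerconfigjson`.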

PV/PVC

Every namespace has a matching shared storage volume that holds the attachments uploaded by users. In the intranet environment we implement it with NFS.

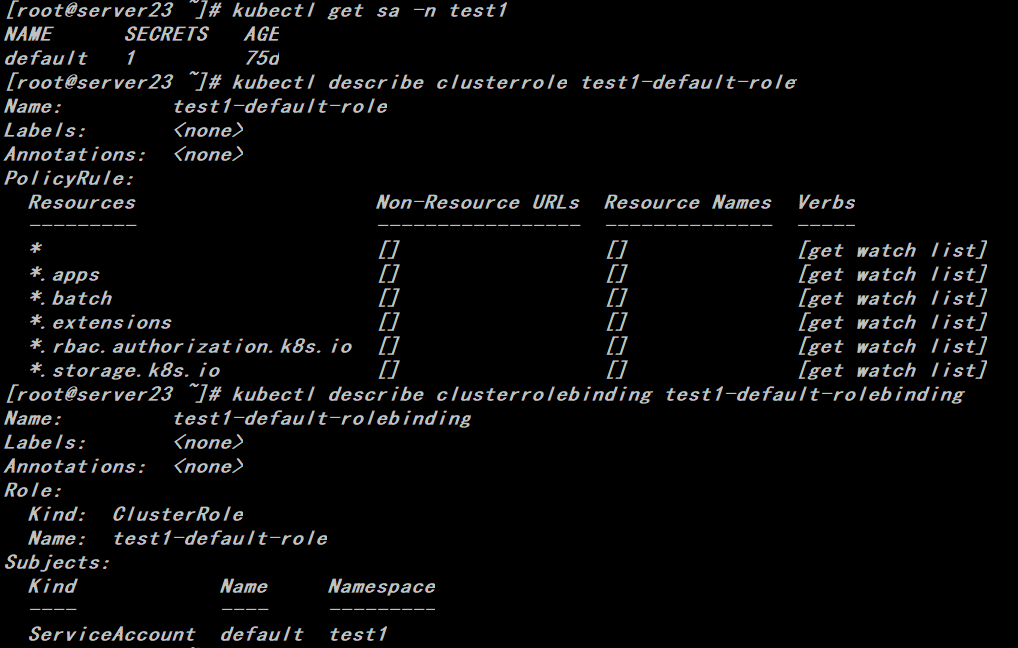

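RBAC

The applications call the Kubernetes master with curl to fetch some configuration information. Without the corresponding RBAC authorization these requests fail with 401, so the default service account in every namespace needs an RBAC binding.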
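For context, a request from inside a pod to the API server typically looks like the sketch below. It relies on the token and CA certificate that Kubernetes mounts for the pod's service account and on the `KUBERNETES_SERVICE_HOST` and `KUBERNETES_SERVICE_PORT` variables that Kubernetes sets in every container. The namespace `test3` and the ConfigMap name are example arguments only; the article does not say which resources the applications actually read. Outside a pod the function just prints the URL it would request.

```shell
#!/bin/sh
# Directory where Kubernetes mounts the service account credentials in a pod.
SA_DIR=/var/run/secrets/kubernetes.io/serviceaccount

# Build the REST URL for one ConfigMap in one namespace.
# $1 = namespace, $2 = ConfigMap name
api_url() {
  printf 'https://%s:%s/api/v1/namespaces/%s/configmaps/%s\n' \
    "${KUBERNETES_SERVICE_HOST:-kubernetes.default.svc}" \
    "${KUBERNETES_SERVICE_PORT:-443}" "$1" "$2"
}

# Read a ConfigMap using the pod's own identity.
get_configmap() {
  url=$(api_url "$1" "$2")
  if [ -r "$SA_DIR/token" ]; then
    curl -sS --cacert "$SA_DIR/ca.crt" \
      -H "Authorization: Bearer $(cat "$SA_DIR/token")" "$url"
  else
    # Not running in a pod: only show what would be requested.
    echo "would GET $url"
  fi
}

get_configmap test3 center-config
```

Whether such a call succeeds depends on the roles bound to the service account, which is why the binding for the default account is part of the common configuration.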

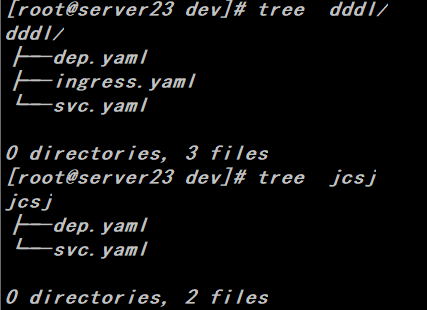

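2. Workloads

All workloads are currently stateless. They fall into two groups: those that expose a domain name and port externally, and those that are only called internally over Dubbo, with ZooKeeper as the registry.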

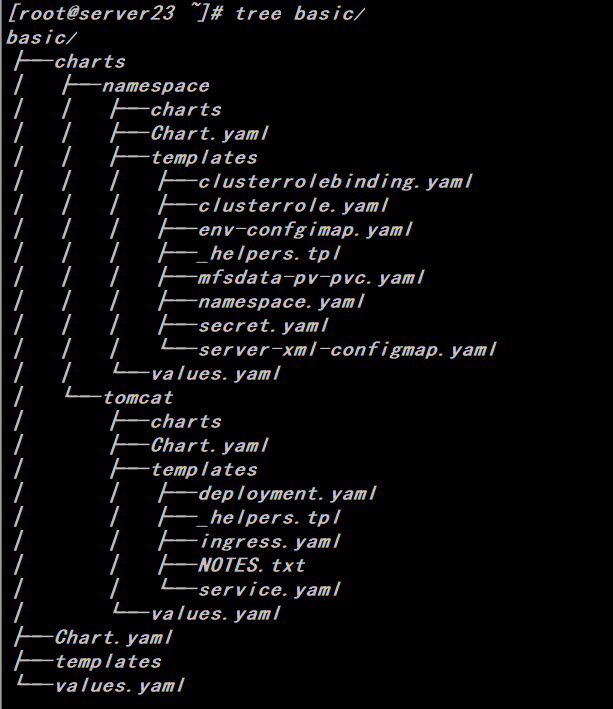

III. Configuring and testing Helm

1. Common configuration

    # helm create basic
    # cd basic
    # helm create charts/namespace
    # rm -rf charts/namespace/templates/*

    # cat charts/namespace/values.yaml

    # Default values for namespace.
    # This is a YAML-formatted file.
    # Declare variables to be passed into your templates.
    namespace: default

    # cat charts/namespace/templates/namespace.yaml

    apiVersion: v1
    kind: Namespace
    metadata:
      name: {{ .Values.namespace }}

    # cat charts/namespace/templates/env-configmap.yaml

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: center-config
      namespace: {{ .Values.namespace }}
      labels:
        app: {{ .Release.Name }}
    data:
      ES_CLUSTER_NAME: hbjy6_dev
      ES_CLUSTER_NODES: 192.168.1.19:9500
      ETCD_ENDPOINTS: https://192.168.1.11:2379,https://192.168.1.17:2379,https://192.168.1.23:2379
      ETCD_PASSWORD: ""
      ETCD_SSL_KEY: ""
      ETCD_SSL_KEY_FILE: /mnt/mfs/private/etcd/client-key.pem
      ETCD_SSL_KEYCERT: ""
      ETCD_SSL_KEYCERT_FILE: /mnt/mfs/private/etcd/client.pem
      ETCD_SSL_TRUSTCERT: ""
      ETCD_SSL_TRUSTCERT_FILE: /mnt/mfs/private/etcd/ca.pem
      ETCD_USER: ""
      MONGODB_PASSWORD: xxxx
      MONGODB_REPLICA_SET: 192.168.1.21:37017,192.168.1.15:57017,192.168.1.16:57017
      MONGODB_USER: ROOT
      MYSQL_MASTER_PASSWORD: "xxxxx"
      MYSQL_MASTER_URL: 192.168.1.20:3306
      MYSQL_MASTER_USER: root
      MYSQL_PROXYSQL_PASSWORD: "xxxxx"
      MYSQL_PROXYSQL_URL: 192.168.1.20:1234
      MYSQL_PROXYSQL_USER: root
      REDIS_MASTER_NAME: sigma-server1
      REDIS_PASSWORD: "xxxxx"
      REDIS_SENTINEL1_HOST: 192.168.1.20
      REDIS_SENTINEL1_PORT: "26379"
      REDIS_SENTINEL2_HOST: 192.168.1.21
      REDIS_SENTINEL2_PORT: "26379"
      REDIS_SENTINEL3_HOST: 192.168.1.22
      REDIS_SENTINEL3_PORT: "26379"
      ROCKETMQ_NAMESERVER: 192.168.1.20:9876
      ZK_BUSINESS_ADDRESS: 192.168.1.20:2181,192.168.1.21:2181,192.168.1.22:2181
      ZK_REGISTRY_ADDRESS: 192.168.1.20:2181,192.168.1.21:2181,192.168.1.22:2181

    # cat charts/namespace/templates/secret.yaml

    apiVersion: v1
    kind: Secret
    metadata:
      name: harborsecret
      namespace: {{ .Values.namespace }}
    type: kubernetes.io/dockerconfigjson
    data:
      .dockerconfigjson: eyJhdXRocyI6eyJoYXJib3IuNTlpZWR1LmNvbSI6eyJ1c2VybmFtZSI6ImFkbWluIiwicGFzc3dvcmQiOiJIYXJib3IxMjM0NSIsImF1dGgiOiJZV1J0YVc0NlNHRnlZbTl5TVRJek5EVT0ifX19

    # cat charts/namespace/templates/mfsdata-pv-pvc.yaml

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: mfsdata-{{ .Values.namespace }}
    spec:
      capacity:
        storage: 150Gi
      accessModes:
        - ReadWriteMany
      nfs:
        path: /mnt/mfs
        server: 192.168.1.20
      persistentVolumeReclaimPolicy: Retain
    ---
    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: mfsdata-{{ .Values.namespace }}
      namespace: {{ .Values.namespace }}
    spec:
      accessModes:
        - ReadWriteMany
      resources:
        requests:
          storage: 150Gi

    # cat charts/namespace/templates/clusterrole.yaml

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: {{ .Release.Name }}-{{ .Values.namespace }}-role
      labels:
        app: {{ .Release.Name }}
    rules:
      - apiGroups: [""]
        resources: ["*"]
        verbs: ["get", "watch", "list"]
      - apiGroups: ["storage.k8s.io"]
        resources: ["*"]
        verbs: ["get", "watch", "list"]
      - apiGroups: ["rbac.authorization.k8s.io"]
        resources: ["*"]
        verbs: ["get", "watch", "list"]
      - apiGroups: ["batch"]
        resources: ["*"]
        verbs: ["get", "watch", "list"]
      - apiGroups: ["apps"]
        resources: ["*"]
        verbs: ["get", "watch", "list"]
      - apiGroups: ["extensions"]
        resources: ["*"]
        verbs: ["get", "watch", "list"]

    # cat charts/namespace/templates/clusterrolebinding.yaml

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: {{ .Release.Name }}-{{ .Values.namespace }}-binding
      labels:
        app: {{ .Release.Name }}
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: {{ .Release.Name }}-{{ .Values.namespace }}-role
    subjects:
      - kind: ServiceAccount
        name: default
        namespace: {{ .Values.namespace }}

2. Workloads

    # cd basic
    # helm create charts/tomcat
    # rm -rf charts/tomcat/templates/*

    # cat charts/tomcat/values.yaml

    # Default values for tomcat.
    # This is a YAML-formatted file.
    # Declare variables to be passed into your templates.
    replicaCount: 1
    version: v1
    mfsdata: mfsdata
    # JVM options, rendered into JAVA_OPTS as a single line.
    env: >-
      -server -Xms1024M -Xmx1024M -XX:MaxMetaspaceSize=320m -XX:+HeapDumpOnOutOfMemoryError -XX:HeapDumpPath=/home/tomcat/jvmdump/
      -Duser.timezone=Asia/Shanghai
      -Drocketmq.client.logRoot=/home/tomcat/logs/rocketmqlog
    # Overridden at install time with --set image=<full image reference>.
    image:
      repository: harbor.59iedu.com
    # Kept at the top level because the Deployment template reads .Values.pullPolicy.
    pullPolicy: Always
    service:
      type: ClusterIP
      port: 8080
      dubboport: 20880
    ingress:
      enabled: false
      annotations:
        nginx.ingress.kubernetes.io/rewrite-target: /
      path: /
      hosts:
        - www.test1.com
        - www.test2.com
    resources:
      # We usually recommend not to specify default resources and to leave this as a conscious
      # choice for the user. This also increases chances charts run on environments with little
      # resources, such as Minikube. If you do want to specify resources, uncomment the following
      # lines, adjust them as necessary, and remove the curly braces after 'resources:'.
      limits:
        cpu: 200m
        memory: 0.2Gi
      requests:
        cpu: 100m
        memory: 0.1Gi
    nodeSelector: {}
    tolerations:
      - key: node.kubernetes.io/not-ready
        operator: Exists
        effect: NoExecute
        tolerationSeconds: 300
      - key: node.kubernetes.io/unreachable
        operator: Exists
        effect: NoExecute
        tolerationSeconds: 300
    affinity:
    schedulerName: default-scheduler

    # cat charts/tomcat/templates/deployment.yaml

    {{- $releaseName := .Release.Name -}}
    # apps/v1beta2 was removed in Kubernetes 1.16; newer clusters need apps/v1.
    apiVersion: apps/v1beta2
    kind: Deployment
    metadata:
      name: {{ .Release.Name }}
      namespace: {{ .Values.namespace }}
      labels:
        app: {{ .Values.namespace }}
        version: {{ .Values.version }}
    spec:
      replicas: {{ .Values.replicaCount }}
      revisionHistoryLimit: 10
      progressDeadlineSeconds: 600
      strategy:
        type: RollingUpdate
        rollingUpdate:
          maxUnavailable: 25%
          maxSurge: 25%
      selector:
        matchLabels:
          app: {{ .Release.Name }}
          version: {{ .Values.version }}
      template:
        metadata:
          labels:
            app: {{ .Release.Name }}
            version: {{ .Values.version }}
        spec:
          # Pod-level settings.
          terminationGracePeriodSeconds: 30
          dnsPolicy: ClusterFirst
          securityContext: {}
          volumes:
            - name: hb-lan-server-xml
              configMap:
                name: hb-lan-server-xml
                items:
                  - key: server.xml
                    path: server.xml
            - name: vol-localtime
              hostPath:
                path: /etc/localtime
                type: ''
            - name: mfsdata
              persistentVolumeClaim:
                claimName: {{ .Values.mfsdata }}
            - name: pp-agent
              emptyDir: {}
          imagePullSecrets:
            - name: harborsecret
          initContainers:
            # Copies the Pinpoint agent into a shared emptyDir volume.
            - name: init-pinpoint
              image: 'harbor.59iedu.com/fjhb/pp_agent:latest'
              command:
                - sh
                - '-c'
                - cp -rp /var/lib/pp_agent/* /var/init/pinpoint
              resources: {}
              volumeMounts:
                - name: pp-agent
                  mountPath: /var/init/pinpoint
              terminationMessagePath: /dev/termination-log
              terminationMessagePolicy: File
              imagePullPolicy: Always
          containers:
            - name: {{ .Release.Name }}
              image: {{ .Values.image }}
              imagePullPolicy: {{ .Values.pullPolicy }}
              lifecycle:
                preStop:
                  exec:
                    # Send SIGTERM to the JVM and wait until it has exited.
                    command: ["/bin/bash", "-c", "PID=`pidof java` && kill -SIGTERM $PID && while ps -p $PID > /dev/null; do sleep 1; done;"]
              envFrom:
                - configMapRef:
                    name: center-config
              env:
                - name: POD_IP
                  valueFrom:
                    fieldRef:
                      fieldPath: status.podIP
                - name: CATALINA_OPTS
                  value: >-
                    -javaagent:/var/init/pinpoint/pinpoint-bootstrap.jar
                    -Dpinpoint.agentId=${POD_IP}
                    -Dpinpoint.applicationName=test1-{{ .Release.Name }}
                - name: JAVA_OPTS
                  value: >-
                    {{ .Values.env }}
              ports:
                - name: http
                  containerPort: {{ .Values.service.port }}
                  protocol: TCP
              livenessProbe:
                tcpSocket:
                  port: {{ .Values.service.port }}
                initialDelaySeconds: 60
                timeoutSeconds: 2
                periodSeconds: 10
                successThreshold: 1
                failureThreshold: 3
              readinessProbe:
                tcpSocket:
                  port: {{ .Values.service.dubboport }}
                initialDelaySeconds: 120
                timeoutSeconds: 3
                periodSeconds: 10
                successThreshold: 1
                failureThreshold: 3
              volumeMounts:
                - name: hb-lan-server-xml
                  mountPath: /home/tomcat/conf/server.xml
                  subPath: server.xml
                - name: vol-localtime
                  readOnly: true
                  mountPath: /etc/localtime
                - name: mfsdata
                  mountPath: /mnt/mfs
                - name: pp-agent
                  mountPath: /var/init/pinpoint
              resources:
    {{ toYaml .Values.resources | indent 12 }}
    {{- with .Values.nodeSelector }}
          nodeSelector:
    {{ toYaml . | indent 8 }}
    {{- end }}
    {{- with .Values.affinity }}
          affinity:
            podAntiAffinity:
              # "preferred" terms need a weight and a podAffinityTerm wrapper;
              # the weight of 100 is an assumed value.
              preferredDuringSchedulingIgnoredDuringExecution:
                - weight: 100
                  podAffinityTerm:
                    labelSelector:
                      matchExpressions:
                        - key: app
                          operator: In
                          values:
                            - {{ $releaseName }}
                    topologyKey: "kubernetes.io/hostname"
    {{ toYaml . | indent 8 }}
    {{- end }}
    {{- with .Values.tolerations }}
          tolerations:
    {{ toYaml . | indent 8 }}
    {{- end }}

    # cat charts/tomcat/templates/ingress.yaml

    {{- if .Values.ingress.enabled -}}
    {{- $servicePort := .Values.service.port -}}
    {{- $ingressPath := .Values.ingress.path -}}
    {{- $releaseName := .Release.Name -}}
    # extensions/v1beta1 Ingress was removed in Kubernetes 1.22.
    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: {{ .Release.Name }}
      namespace: {{ .Values.namespace }}
      labels:
        app: {{ .Release.Name }}
        version: {{ .Values.version }}
    {{- with .Values.ingress.annotations }}
      annotations:
    {{ toYaml . | indent 4 }}
    {{- end }}
    spec:
    {{- if .Values.ingress.tls }}
      tls:
      {{- range .Values.ingress.tls }}
        - hosts:
          {{- range .hosts }}
            - {{ . }}
          {{- end }}
          secretName: {{ .secretName }}
      {{- end }}
    {{- end }}
      rules:
      {{- range .Values.ingress.hosts }}
        - host: {{ . }}
          http:
            paths:
              - path: {{ $ingressPath }}
                backend:
                  serviceName: {{ $releaseName }}
                  servicePort: {{ $servicePort }}
      {{- end }}
    {{- end }}

    # cat charts/tomcat/templates/service.yaml

    apiVersion: v1
    kind: Service
    metadata:
      name: {{ .Release.Name }}
      namespace: {{ .Values.namespace }}
      labels:
        app: {{ .Release.Name }}
        version: {{ .Values.version }}
    spec:
      type: {{ .Values.service.type }}
      ports:
        - port: {{ .Values.service.port }}
          targetPort: http
          protocol: TCP
          name: http
      selector:
        app: {{ .Release.Name }}
        version: {{ .Values.version }}

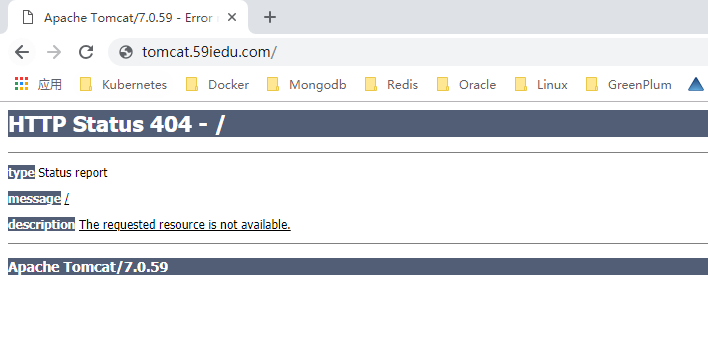

3、運行測試

# helm install --debug --dry-run /root/basic/charts/namespace/ \

--set namespace=test3

# helm install --debug --dry-run /root/basic/charts/tomcat/ \

--name tomcat-test \

--set namespace=test3 \

--set mfsdata=mfsdata-test3 \

--set replicaCount=2 \

--set image=harbor.59iedu.com/dev/tomcat_base:v1.1-20181127 \

--set ingress.enabled=true \

--set ingress.hosts={tomcat.59iedu.com} \

--set resources.limits.cpu=2000m \

--set resources.limits.memory=2Gi \

--set resources.requests.cpu=500m \

--set resources.requests.memory=1Gi \

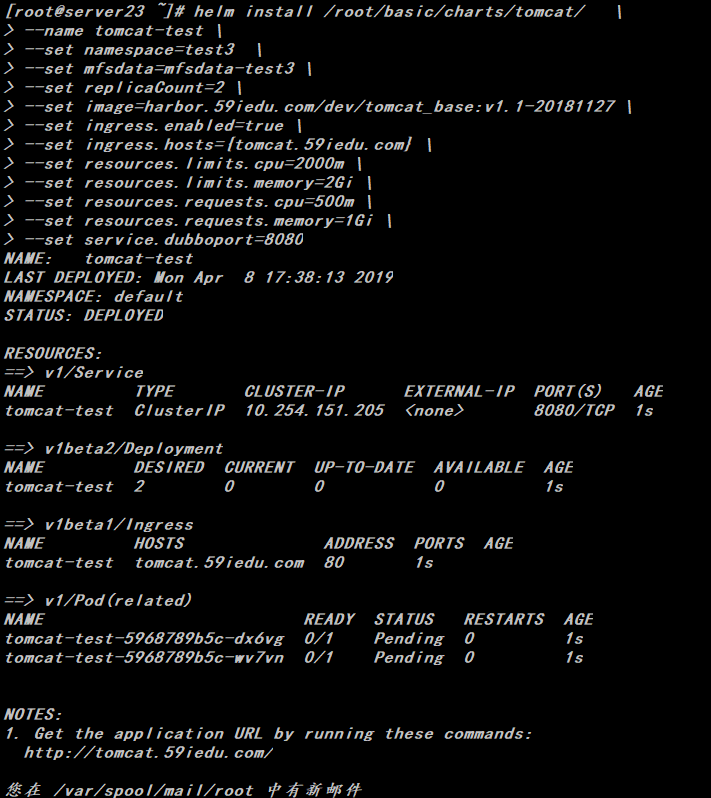

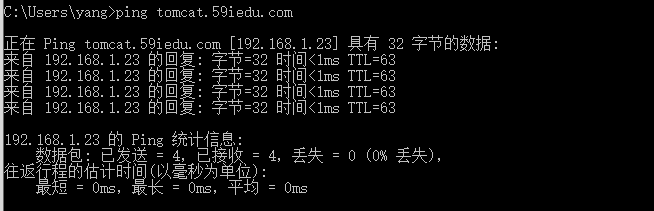

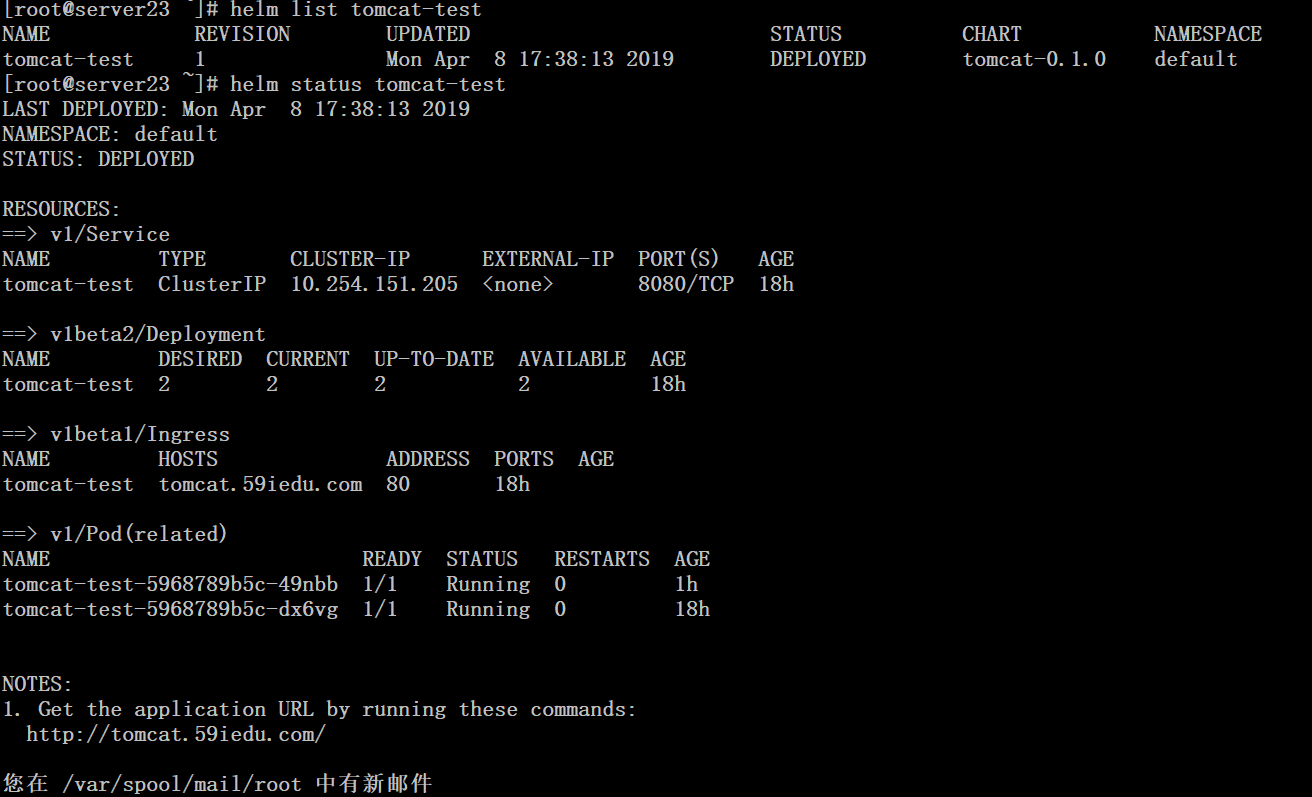

--set service.dubboport=80804、創建release

    # helm install /root/basic/charts/namespace/ --set namespace=test3

    # helm install /root/basic/charts/tomcat/ \
        --name tomcat-test \
        --set namespace=test3 \
        --set mfsdata=mfsdata-test3 \
        --set replicaCount=2 \
        --set image=harbor.59iedu.com/dev/tomcat_base:v1.1-20181127 \
        --set ingress.enabled=true \
        --set ingress.hosts={tomcat.59iedu.com} \
        --set resources.limits.cpu=2000m \
        --set resources.limits.memory=2Gi \
        --set resources.requests.cpu=500m \
        --set resources.requests.memory=1Gi \
        --set service.dubboport=8080

IV. Upgrade and rollback

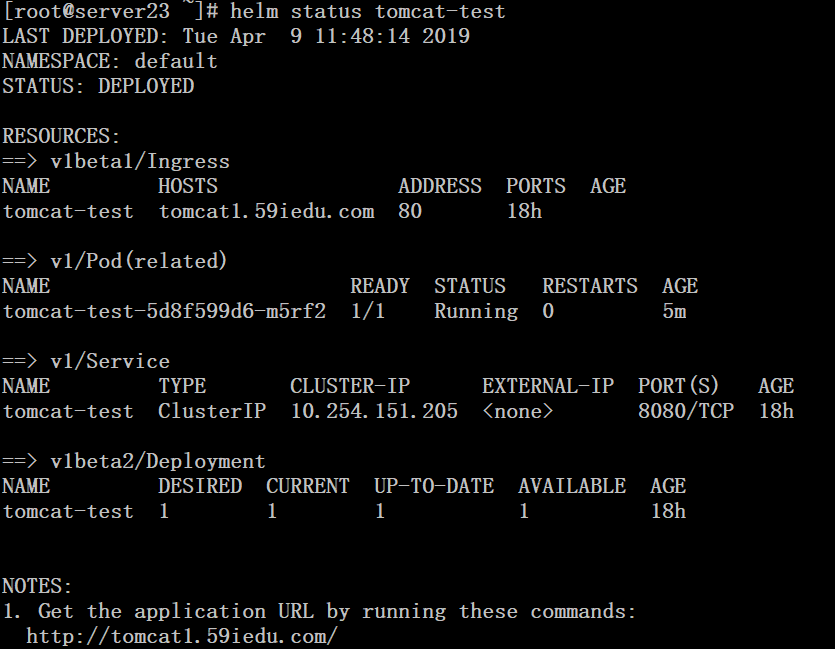

    # helm upgrade --install tomcat-test \
        --values /root/basic/charts/tomcat/values.yaml \
        --set namespace=test3 \
        --set mfsdata=mfsdata-test3 \
        --set replicaCount=1 \
        --set image=harbor.59iedu.com/dev/tomcat_base:v1.1-20181127 \
        --set ingress.enabled=true \
        --set ingress.hosts={tomcat1.59iedu.com} \
        --set resources.limits.cpu=200m \
        --set resources.limits.memory=1Gi \
        --set resources.requests.cpu=100m \
        --set resources.requests.memory=0.5Gi \
        --set service.dubboport=8080 \
        /root/basic/charts/tomcat
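The long `--set` lists used for install and upgrade can also be kept in a per-environment values file and passed with `-f`. The following is only a sketch: the file name `values-test3.yaml` is an assumption, while the keys and values mirror the install command for the `test3` namespace.

```shell
#!/bin/sh
# Write the overrides for the test3 environment into one file
# (file name is an assumption made for this example).
cat > values-test3.yaml <<'EOF'
namespace: test3
mfsdata: mfsdata-test3
replicaCount: 2
image: harbor.59iedu.com/dev/tomcat_base:v1.1-20181127
ingress:
  enabled: true
  hosts:
    - tomcat.59iedu.com
resources:
  limits:
    cpu: 2000m
    memory: 2Gi
  requests:
    cpu: 500m
    memory: 1Gi
service:
  dubboport: 8080
EOF

# Usage with the Helm 2 syntax used in this article:
#   helm install /root/basic/charts/tomcat/ --name tomcat-test -f values-test3.yaml
#   helm upgrade --install tomcat-test -f values-test3.yaml /root/basic/charts/tomcat
```

Keeping one such file per namespace under version control makes the difference between environments visible in a diff, instead of being spread over command-line flags.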

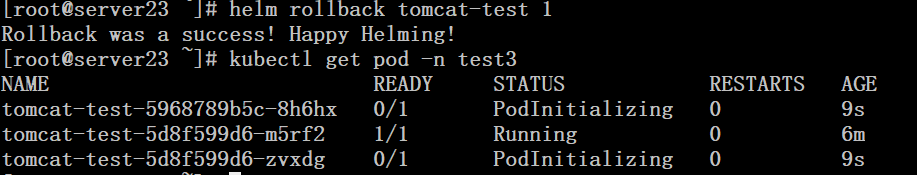

If an upgrade fails, you can roll back:

    # helm rollback tomcat-test 1
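Upgrade and rollback can be combined in a small wrapper so that a failed upgrade falls back automatically. This is a minimal sketch, not part of the original setup: the function name `upgrade_or_rollback` is hypothetical, and the revision to return to has to be supplied by the caller (for example after checking `helm history`).

```shell
#!/bin/sh
# Upgrade a release; if the upgrade command fails, roll back to a given revision.
# $1 = release name, $2 = chart path, $3 = revision to roll back to,
# any further arguments are passed on to "helm upgrade" (e.g. --set or -f options).
upgrade_or_rollback() {
  release=$1
  chart=$2
  revision=$3
  shift 3
  if helm upgrade "$release" "$chart" "$@"; then
    echo "upgrade of $release succeeded"
  else
    echo "upgrade of $release failed, rolling back to revision $revision" >&2
    helm rollback "$release" "$revision"
  fi
}

# Example call (requires helm and an existing release):
#   upgrade_or_rollback tomcat-test /root/basic/charts/tomcat 1 --set replicaCount=1
```

The wrapper only reacts to the exit status of `helm upgrade`; pods that start but misbehave later still need to be caught by the probes and rolled back by hand.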

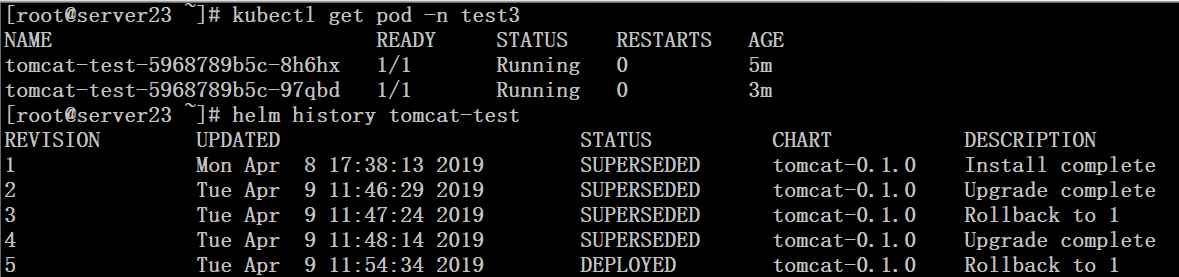

    # helm history tomcat-test

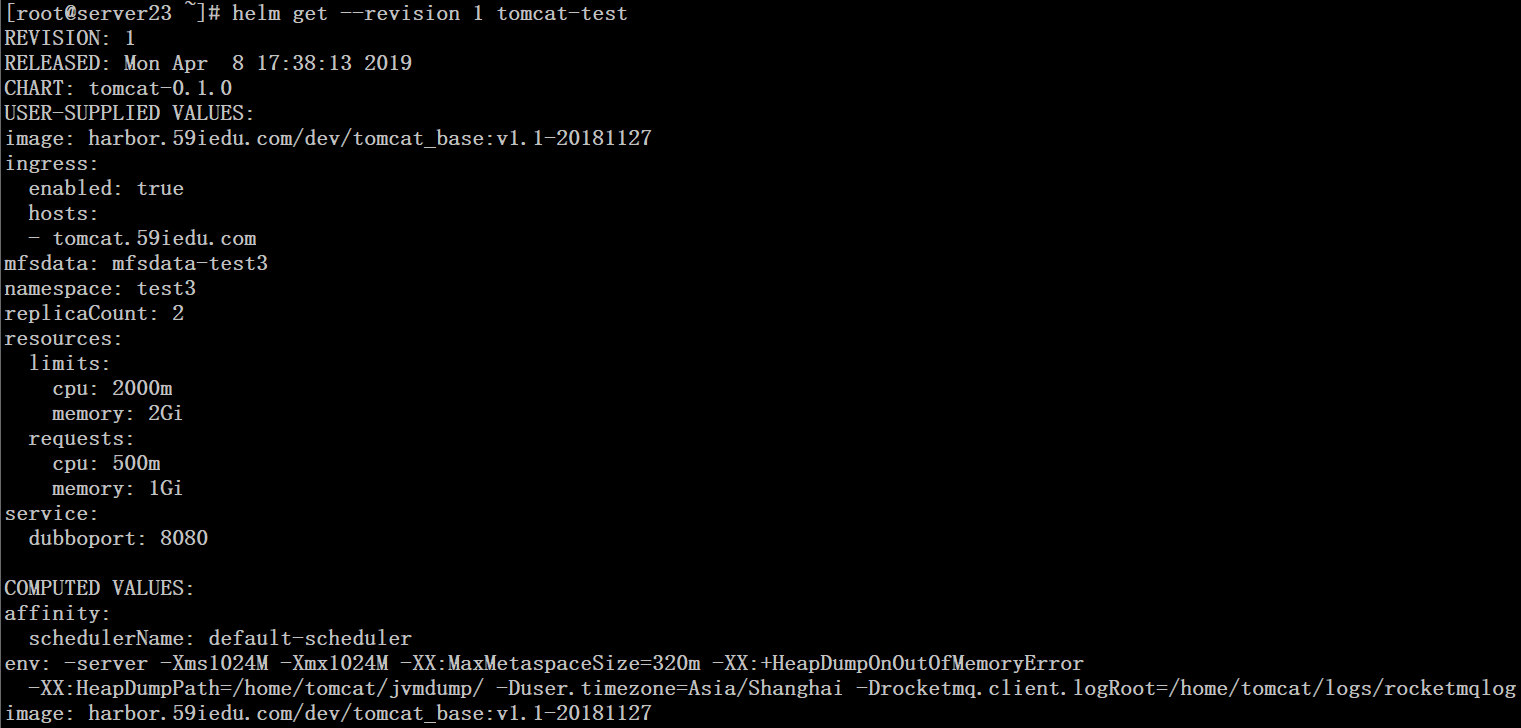

    # helm get --revision 1 tomcat-test